
Judgment and Decision Making, vol. 4, no. 2, March 2009, pp. 154-163

Searching for coherence in a correspondence world

Kathleen L. Mosier*

In this paper, I trace the evolution of the aircraft cockpit as an example of the transformation of a probabilistic environment into an ecological hybrid, that is, an environment characterized by both probabilistic and deterministic features and elements. In the hybrid ecology, the relationships among correspondence and coherence strategies and goals and cognitive tactics on the continuum from intuition to analysis become critically important. Intuitive tactics used to achieve correspondence in the physical world do not work in the electronic world. Rather, I make the case that judgment and decision making in a hybrid ecology requires coherence as the primary strategy to achieve correspondence, and that this process requires a shift in tactics from intuition toward analysis.

Keywords: coherence, correspondence, decision making, aircraft, cockpit.

We live in a correspondence-driven world, in which the actual state of the world imposes constraints on interactions within it (e.g., Vicente, 1990). In correspondence-driven domains, judgments (How far away and how high is that obstacle? What is the correct diagnosis given these symptoms? Where is the enemy force likely to attack?) are guided by multiple probabilistic and fallible indicators in the physical world. Survival depends on the correspondence, or accuracy, of our judgments, that is, how well they correspond to objective reality. The increasing availability of sophisticated technological systems, however, has profoundly altered the character of many correspondence-driven environments, such as aviation, medicine, military operations, and nuclear power, as well as the processes of judgment and decision making within them.

In this paper, I trace the evolution of the aircraft cockpit as an example of the transformation of a probabilistic environment into an ecological hybrid, that is, an environment characterized by both probabilistic and deterministic features and elements. As technology has changed the nature of cues and information available to the pilot, it has also changed the strategies and tactics pilots must use to make judgments successfully. I make the case that judgment and decision making in a hybrid ecology requires coherence as the primary strategy to achieve correspondence, and that this process requires a shift in tactics from intuition toward analysis. The recognition of these changes carries implications for research models in high-technology environments, as well as for the design of systems and decision aids. Although the examples here come from the aviation domain, the argument that coherence can serve as a strategy to achieve correspondence is applicable to any high-tech ecology that functions within and is constrained by the physical world.

The terms coherence and correspondence refer to both goals of cognition and strategies used to achieve these goals. The goal of correspondence is empirical, objective accuracy in human judgment. A correspondence strategy entails the use of multiple fallible indicators to make judgments about the natural world. A pilot, for example, uses a correspondence strategy when checking cues outside the cockpit to figure out where he or she is, or judging height and distance from an obstacle or a runway. Correspondence competence refers to an individual’s ability to correctly judge and respond to multiple fallible indicators in the environment (e.g., Brunswik, 1956; Hammond, 1996; 2000; 2007), and the empirical accuracy of these judgments is the standard by which correspondence is evaluated.

The goal of coherence, in contrast, is rationality and consistency in judgment and decision making. Using a coherence strategy, a pilot might evaluate the information displayed inside the cockpit to ensure that system parameters, flight modes, and navigational displays are consistent with each other and with what should be present in a given situation. Coherence competence refers to the ability to maintain logical consistency in judgments and decisions. Coherence is not evaluated by empirical accuracy relative to the real world; what is important is the logical consistency of the process and resultant judgment (Hammond, 1996; 2000; 2007).

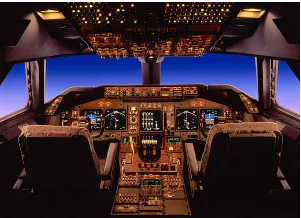

Figure 1: Correspondence-guided flight.

Cognitive tactics ranging from intuition to analysis may be used for correspondence or coherence strategies and goals. Analysis refers to a "step-by-step, conscious, logically defensible process” with a high degree of cognitive control, whereas intuition typically describes “the opposite — a cognitive process that somehow produces an answer, solution, or idea without the use of a conscious, logically defensible, step-by-step process” (Hammond, 1996, p. 60).

The notion of a cognitive continuum from intuition to analysis was introduced and developed by Hammond (e.g., 1996; 2000). According to his cognitive continuum theory, intuition and analysis represent the endpoints of a continuum of cognitive activity. Judgments vary in the extent to which they are based on intuitive processes, analytical processes, or some combination of both. At the approximate center, for example, is quasi-rationality, sometimes referred to as common sense, which involves components of both intuition and analysis. During the judgment process, individuals may move along this continuum, oscillating between intuition and analysis as they make judgments. Pilots, for example, may use intuition when gauging the weather from clouds ahead, move toward analysis to read and interpret printed weather data, and rely on common sense to judge the safest path or to decide whether to continue or turn back.

It should be noted that although tactics at any point on the continuum may be used for either correspondence or coherence strategies and goals, characteristics of the judgment task and of the decision maker will influence the choice of tactic as well as its probable success. Cues in the physical world, such as size, color, and smell, may be amenable to judgment via intuitive tactics; cues in the electronic world, such as digital displays, mode-dependent data, or layered information, require tactics closer to the analytical end of the continuum. The decision maker’s level of experience and expertise may also influence the choice of tactics. In aviation, for example, novice pilots will move toward analysis for correspondence, using a combination of computations and cues outside the aircraft to determine when to start a descent for landing. Experienced pilots, in contrast, may look out the cockpit window and intuitively recognize when the situation “looks right” to start down.

The field of aviation has traditionally been correspondence-driven in that the flying task involved integrating probabilistic cues (multiple fallible indicators; e.g., Brunswik, 1956; Hammond, 1996; Wickens & Flach, 1988), and correspondence was both the strategy and the goal for judgment and decision making. The cognitive processes used were often intuitive, enabling rapid data processing and quick judgments, with recognition and pattern matching as the tactics of choice for experienced pilots. Piloting an aircraft also used to be a very physical task. First-generation aircraft were highly unstable and demanded constant physical control inputs (Billings, 1996). The flight control task was a “stick and rudder” process involving knowing the characteristics of the aircraft and sensing the information necessary for control (Baron, 1988). Figure 1 illustrates flying in its most primitive state: the hang glider pilot must rely solely on correspondence competence to navigate and control the craft, as well as to judge speed, altitude, and distance to and from specific locations.

In the early days of aviation, correspondence was achieved primarily through the senses, via visual and kinesthetic perception of the natural, physical world. For pilots, the emphasis was on accurate judgment of objects in the environment (height of obstacles in terrain, distance from the ground, severity of storm activity in and around clouds, location of landmarks) and accurate response to them (e.g., using the controls to maneuver around obstacles or storm clouds, or to make precise landings). Features of the environment and of the cues used affected the accuracy of judgments. Cues that are concrete or can be perceived clearly facilitate accurate judgments: a pilot had a relatively easy time judging a 5-mile reporting point when it was marked by a distinctive building. Cues that are murkier, either because they are less concrete in nature or because they are obscured by factors in the environment, hinder accurate judgments. The same pilot had a much harder time judging the reporting point at night, or when the distinctive building was hidden by fog or clouds.

Figure 2: Coherence-guided flight: The Boeing 777.

Because of this, early pilots avoided situations that would put the accuracy of their senses in jeopardy, such as clouds or unbroken darkness. For example, early airmail pilots often relied on railroad tracks to guide their way, and mail planes had one of the landing lights slanted downward to make it easier to follow the railroad at night (Orlady & Orlady, 1999). As pilots gained experience, their correspondence competence increased and more accurate judgment of probabilistic features in the environment resulted in more accurate response.

As aircraft evolved, flying became physically easier as parts of the control task were automated (e.g., through use of an autopilot), and automated systems began to perform many of the flight tasks previously accomplished by the pilot. The demand for all-weather flight capabilities resulted in the development of instruments that would supposedly compensate for any conditions that threatened to erode pilots’ perceptual accuracy. Conflicts between visual and vestibular cues when the ground was not visible, for example, could lead to spatial disorientation and erroneous control inputs, but an artificial horizon, or attitude indicator, within the cockpit could resolve these conflicts. These were the first steps in the transformation of the cockpit ecology. Soon, more and more information placed inside the aircraft (e.g., altitude indicator, airspeed indicator, alert and warning systems) supplemented or replaced cues outside the aircraft and vastly decreased reliance on perceptual (i.e., probabilistic) cues. Increasingly reliable instruments eliminated the need for out-the-window perception, allowing aircraft to operate in low- or no-visibility conditions.

Today, technological aids have reduced the ambiguity inherent in probabilistic cues. They process data from the outside environment and display them as highly reliable and accurate information rather than as probabilistic cues. Exact location in space can be read from cockpit displays, whether or not cues are visible outside the cockpit. The location and severity of storm activity in and around clouds are displayed on color radar. Today’s glass cockpit aircraft can fly from A to B in low (or no) visibility conditions; once initial coordinates are programmed into the flight computer, navigation can be accomplished without any external reference cues. In fact, in adverse weather conditions, cues and information outside the aircraft may not be accessible at all. In contrast to earlier aviators, modern pilots may spend relatively little of their time looking out the window or manipulating flight controls, and most or all of it integrating, updating, and using information inside the cockpit. The advances in and proliferation of technological aids to control the aircraft, provide information, and ultimately manage the environment are illustrated in Table 1. Contrast the decision ecology in Figure 1 with that in Figure 2, the Boeing 777, one of today’s most highly automated commercial aircraft.

Table 1: Evolution of technology in Civil Air Transport. Adapted from Fadden (1990) and Billings (1996).

| Aircraft | Technology |
|---|---|
| Lockheed 14; DeHavilland Comet; Boeing 707; Douglas DC-8; Douglas DC-9 | Yaw damper |
| Boeing 727; Boeing 737–100, 200; Boeing 747–100–300; DC-10, L-1011; Airbus A-300 | Autoland systems; integrated flight systems; VHF navigation; configuration warning systems; malfunction alerts |
| Boeing 767/757, 747–400; McDonnell-Douglas MD-80; Airbus A-310, 300–600; Fokker F-28–100; MD-11 (transition to 4th Gen) | Primary flight display; navigation displays (moving map); multifunction displays; weather radar; system/sub-system status displays; collision avoidance system (TCAS); integrated alerting systems |
| Airbus A-319/320/321; Airbus A-330, 340; Boeing 777, 787 | Integrated systems operation; primary flight display; navigation displays (moving map); multifunction displays; weather radar; system/sub-system status displays; collision avoidance system (TCAS); integrated alerting systems; windshear displays; datalink displays; electronic checklist |
| | High-precision in-trail guidance in terminal areas; automated collision avoidance maneuvers; automated wind shear avoidance maneuvers; satellite navigation; digital data link communication; “big picture” integrated displays; enhanced head-up displays; enhanced or synthetic vision systems; direct FMS-ATC computer communication; error monitoring and trapping; improved electronic checklist |

The physical and perceptual demands of the flying task have been greatly reduced by technology; however, new cognitive requirements have replaced them. The environment in the modern aircraft cockpit (as in other high-technology environments) is a complex hybrid ecology. It is deterministic in that much of the uncertainty has been engineered out through technical reliability, but it is probabilistic in that conditions of the physical and social world, including ill-structured problems, ambiguous cues, time pressure, and rapid changes, interact with and complement conditions in the electronic world. In a hybrid ecology, cues and information may originate either in the internal, electronic, deterministic systems or in the external, physical environment, and input from both sides of the ecology must be integrated for judgment and decision making. Goals, strategies, and tactics in the hybrid ecology are different from those used in the natural world. In particular, characteristics of the electronic side of the ecology do not lend themselves to tactics on the intuitive end of the cognitive continuum.

Most of the data that modern pilots use to fly and to make decisions can be found on cockpit display panels and CRTs, and these data are qualitatively different from the cues used in the naturalistic world. They are precise, reliable indicators of whatever they are designed to represent, rather than probabilistic cues. As illustrated in Table 1, each generation of aircraft has added to the store of information pilots can access via automated displays within the cockpit. Essentially, probabilism has been engineered out of the cockpit through high system reliability. Technology brings proximal cues into exact alignment with the distal environment, and the “ecological validity” of the glass cockpit, or the “probabilistic mapping between the environment and the medium of perception and cue utilization” (Flach & Bennett, 1996, p. 74), approaches 1.0. This near-perfect validity is conditional, however: because technological aids have high internal reliability, the ecological validity of the information they present approaches 100% only if and when complete consistency and coherence among relevant indicators exist.

System opaqueness is another facet of electronic systems in the automated cockpit that has often been documented and discussed (e.g., Woods, 1996; Sarter et al., 1997). High-tech cockpits contain a complex array of instruments and displays in which information and data are layered and vary according to display mode and flight configuration, hampering the pilot’s ability to track system functioning and to determine consistency among indicators. What the pilot often sees is an apparently simple display that masks a highly complex combination of features, options, functions, and system couplings that may produce unanticipated, quickly propagating effects if not analyzed and taken into account (Woods, 1996).

Because the hybrid ecology functions in and is subject to the constraints of the physical world, correspondence is still the ultimate goal of judgment and decision making. However, because the hybrid ecology is characterized by highly reliable deterministic systems, the strategies and tactics used to achieve correspondence will differ from those used in a probabilistic ecology.

Understanding the relationship between correspondence and coherence in hybrid ecologies is critical to defining their role in judgment and decision making. Most importantly, the primary route to correspondence in the physical world is through the achievement of coherence in the electronic world. Coherence is a strategy, in that pilots are required to think logically and rationally about data and information in order to establish and maintain consistency among indicators; and coherence is a goal, in that correspondence simply cannot be accomplished without the achievement of coherence. Coherence is a means to and a surrogate for correspondence, and the accuracy of judgment is ensured only as long as coherence is maintained. This involves knowing what data are relevant and integrating all relevant data. Coherence competence entails creating and identifying a rationally “good” picture — engine and other system parameters should be in sync with flight mode and navigational status — and making decisions that are consistent with what is displayed. Coherence competence also entails knowledge of potential pitfalls that are artifacts of technology, such as mode errors, hidden data, or non-coupled systems and indicators, recognition of inconsistencies in data that signal lack of coherence, and an understanding of the limits of deterministic systems as well as their strengths and inadequacies.

If coherence is present in the electronic cockpit, the pilot should be able to trust the empirical accuracy of the data used to attain correspondence; that is, the achievement of coherence also accomplishes correspondence. When programming a flight plan or landing in poor weather, for example, the pilot must be able to assume that the aircraft will fly what is programmed and that the instruments accurately reflect altitude and course. When monitoring system functioning or making an action decision, the pilot must be confident that the parameters displayed on the instrument panel are accurate. The pilot may not be able to verify this, because he or she does not always have access to correspondence cues or to objective reality.

Many if not most judgment errors in electronic cockpits stem from coherence errors, that is, failures to detect something in the electronic “story” that is not consistent with the rest of the picture. Coherence errors have real consequences in the physical world because they are directly linked to correspondence goals. Parasuraman and Riley (1997), for example, cite controlled-flight-into-terrain accidents in which crews failed to notice that their guidance mode was inconsistent with other descent settings, and flew the aircraft into the ground. “Automation surprises,” or situations in which crews are surprised by control actions taken by automated systems (Sarter, Woods, & Billings, 1997), occur when pilots have an inaccurate judgment of coherence: they misinterpret or misassess data on system states and functioning (Woods & Sarter, 2000). Mode error, or confusion about the active system mode (e.g., Sarter & Woods, 1994; Woods & Sarter, 2000), is a type of coherence error that has resulted in several incidents and accidents. Perhaps the best known of these occurred near Strasbourg, France (Ministère de l’Equipement, des Transports et du Tourisme, 1993), when the crew of an Airbus A-320 confused approach modes:

It is believed that the pilots intended to make an automatic approach using a flight path angle of –3.3° from the final approach fix…The pilots, however, appear to have executed the approach in heading/vertical speed mode instead of track/flight path angle mode. The Flight Control Unit setting of “–33” yields a vertical descent rate of –3300 ft/min in this mode, and this is almost precisely the rate of descent the airplane realized until it crashed into mountainous terrain several miles short of the airport. (Billings, 1996, p. 178).
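The arithmetic behind this confusion can be made concrete. The vertical speed implied by a flight path angle γ at ground speed V is V·tan γ. The following is a minimal sketch, assuming a ground speed of 150 knots on final approach (an illustrative figure, not one taken from the accident report) and a simplified model in which the same entry of -33 is read as tenths of a degree in track/flight path angle mode and as hundreds of feet per minute in heading/vertical speed mode, as the quoted passage describes. The function `commanded_descent_fpm` and its mode labels are hypothetical and do not represent any actual avionics logic.

```python
import math

# 1 nautical mile = 6076.12 ft, so 1 knot is about 101.27 ft/min.
KNOT_TO_FT_PER_MIN = 6076.12 / 60.0


def commanded_descent_fpm(fcu_entry: int, mode: str, ground_speed_kt: float = 150.0) -> float:
    """Descent rate in ft/min (positive = descending) that an entry commands.

    Simplified, hypothetical interpretation of one vertical entry in two modes.
    """
    if mode == "TRK/FPA":
        # Entry read as a flight path angle in tenths of a degree: -33 -> -3.3 deg.
        angle_deg = fcu_entry / 10.0
        speed_ft_per_min = ground_speed_kt * KNOT_TO_FT_PER_MIN
        return -speed_ft_per_min * math.tan(math.radians(angle_deg))
    if mode == "HDG/VS":
        # Entry read as vertical speed in hundreds of ft/min: -33 -> -3300 ft/min.
        return -fcu_entry * 100.0
    raise ValueError(f"unknown mode: {mode}")


for mode in ("TRK/FPA", "HDG/VS"):
    print(f"{mode}: descent of {commanded_descent_fpm(-33, mode):.0f} ft/min")
```

Under these assumptions the intended approach corresponds to a descent of a little under 900 ft/min, so the entry actually flown commanded a descent almost four times as steep, while the setup looked nearly identical on the panel.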

Clearly, the cognitive processes required in high-tech aircraft are quite different from those needed in the early days of flying. The electronic side of the environment creates a discrete rather than a continuous space (Degani, Shafto, & Kirlik, 2006), and as such requires more formal cognitive processes than does the continuous ecology of the naturalistic world. Managing the hybrid ecology of a high-tech aircraft is a primarily coherence-based, complex, and cognitively demanding mental task, and it demands an accompanying change in judgment processes. Coherence in judgment involves data, rationality, and logic, and requires the pilot to move toward the analytical mode of judgment on the cognitive continuum; that is, to shift from intuitive tactics geared toward correspondence in the direction of analytical tactics geared toward coherence.

Analysis in the judgment process necessarily comes into play whenever decision makers have to deal with numbers, text, modes, or translations of cues into information (e.g., via an instrument or computer). In the modern aircraft, pilots need to examine electronic data with an analytical eye, decipher their meaning in the context of flight modes or phases, and interpret their implications in terms of outcomes. Failure to do so will result in inadequate or incorrect situation assessment and potential disaster. Glass cockpit pilots use analytical tactics when they discern and set correct flight modes, compare display data with expected data, investigate sources of discrepancies, program and operate flight computer systems, or evaluate what a given piece of data means when shown in a particular color, in a particular position on the screen, in a particular configuration, in a particular system mode.
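The cross-checking tactic listed here (comparing displayed data with expected data and flagging discrepancies for investigation) can be sketched as a simple rule check. This is a minimal illustration under stated assumptions: the class `Expectation`, the function `find_discrepancies`, the indicator names, the expected values, and the tolerances are all hypothetical, and real cockpit systems do not expose data in this form. A missing indicator is treated as a discrepancy, on the reasoning that data which cannot be found cannot confirm coherence either.

```python
from dataclasses import dataclass


@dataclass
class Expectation:
    """What the pilot expects an indicator to show in the current flight phase."""
    indicator: str
    expected: float
    tolerance: float


def find_discrepancies(displayed: dict[str, float], expectations: list[Expectation]) -> list[str]:
    """Describe every indicator that breaks the expected, coherent picture."""
    problems = []
    for exp in expectations:
        if exp.indicator not in displayed:
            # Hidden or absent data: coherence cannot be confirmed.
            problems.append(f"{exp.indicator}: not displayed")
            continue
        value = displayed[exp.indicator]
        if abs(value - exp.expected) > exp.tolerance:
            problems.append(
                f"{exp.indicator}: shows {value:g}, expected {exp.expected:g} +/- {exp.tolerance:g}"
            )
    return problems


# Hypothetical snapshot on final approach: descent far steeper than planned.
displayed = {"vertical_speed_fpm": -3300.0, "airspeed_kt": 148.0}
expectations = [
    Expectation("vertical_speed_fpm", -900.0, 200.0),
    Expectation("airspeed_kt", 145.0, 10.0),
    Expectation("glide_path_deviation_dots", 0.0, 1.0),
]
for problem in find_discrepancies(displayed, expectations):
    print(problem)
```

The hard part for the pilot is not the comparison itself but knowing which indicators and expectations are relevant in the current mode, which is precisely the analytical work the text describes.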

The movement toward analysis for coherence affords potential gains as well as potential risks. Analysis in the electronic milieu, as in other arenas, can produce judgments that are much more precise than intuitive judgments. However, the analytical process is also much more fragile than intuitive processes — a single small error can be fatal to the process, and one small detail can destroy coherence and result in disastrous correspondence errors. In contrast to the normal distribution of errors from intuitive judgments, distributions of the errors from analysis tend to be peaked, with frequent accuracy but occasional high inaccuracy (Dunwoody, Haarbauer, Mahan, Marino, & Tang, 2000; Hammond, Hamm, Grassia, & Pearson, 1987). Mode confusion, as described in the A-320 accident above, often results from what looks, without sufficient analysis, like a coherent picture. The cockpit setup for a flight path angle of –3.3° in one mode looks very much like the setup for a –3300 ft/min approach in another mode.
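The contrast in error distributions can be illustrated with a toy simulation. The following is a minimal sketch under invented assumptions, not a reanalysis of Dunwoody et al. (2000) or Hammond et al. (1987): intuitive errors are drawn from a normal distribution with a standard deviation of 1, while analytical errors are usually small (standard deviation 0.2) but, with a hypothetical 5% probability, reflect a single slip that derails the process and produces a large error (standard deviation 5). The names `simulate_errors` and `summarize` are illustrative.

```python
import random
import statistics


def simulate_errors(n: int = 10_000, seed: int = 1) -> tuple[list[float], list[float]]:
    """Draw judgment errors for a hypothetical intuitive and analytical judge."""
    rng = random.Random(seed)
    # Intuitive tactic: moderate errors, normally distributed around zero.
    intuitive = [rng.gauss(0.0, 1.0) for _ in range(n)]
    # Analytical tactic: usually very precise, but 5% of the time one slip
    # (e.g., a misread mode) derails the process and the error is large.
    analytical = [
        rng.gauss(0.0, 5.0) if rng.random() < 0.05 else rng.gauss(0.0, 0.2)
        for _ in range(n)
    ]
    return intuitive, analytical


def summarize(errors: list[float]) -> dict[str, float]:
    """Share of near-zero errors, typical error size, and worst error."""
    abs_errors = [abs(e) for e in errors]
    return {
        "share_within_0.5": sum(a <= 0.5 for a in abs_errors) / len(abs_errors),
        "median_abs": statistics.median(abs_errors),
        "max_abs": max(abs_errors),
    }


intuitive, analytical = simulate_errors()
for name, errors in (("intuitive", intuitive), ("analytical", analytical)):
    summary = summarize(errors)
    print(name, {key: round(value, 3) for key, value in summary.items()})
```

With these assumed parameters the analytical errors are far more often close to zero, yet the worst analytical error is much larger than the worst intuitive one: a peaked distribution with occasional high inaccuracy, as the text describes.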

Moreover, the search for coherence is hampered because system data needed for analysis and judgment must be located before they can be processed. This is often not an easy process because in many cases the data that would allow for analytical assessment of a situation may be obscured, not presented at all, or buried below surface features. Technological decision-aiding systems, in particular, often present only what has been deemed “necessary.” Data are pre-processed, and presented in a format that allows, for the most part, only a surface view of system functioning, and precludes analysis of the consistency or coherence of data. In their efforts to provide an easy-to-use intuitive display format, designers have often buried the data needed to retrace or follow system actions. Calculations and resultant actions often occur without the awareness of the human operator. The proliferation of characteristics such as these indicates that achieving coherence in the hybrid ecology is no easy task.

If correspondence in judgment and decision making in the hybrid ecology is accomplished primarily through the achievement of coherence, then it is important to examine whether the features and properties of the environment elicit the type of cognition required. Performance will depend in part on the degree to which task properties elicit the most effective cognitive response (e.g., Hammond et al., 1987). Technology in hybrid environments should support tactics on the analytical end of the cognitive continuum because those are the tactics required for the achievement of coherence, but often does not do so effectively. In aircraft as in many hybrid ecologies, a discrepancy exists between the type of cognition required and what is fostered by current systems and displays.

System designers in hybrid environments have often concentrated on “enhancing” the judgment environment by providing decision aids and interventions designed to make judgment more accurate, and in doing so have made assumptions about what individuals need to know and how this information should be displayed. From the beginning of complex aircraft instrumentation, for example, the trend has been to present data in pictorial formats whenever possible, a design feature that has been demonstrated to induce intuitive cognition (e.g., Dunwoody et al., 2000; Hammond et al., 1987). The attitude indicator presents a picture of wings rather than a number indicating degrees of roll. Collision alerting and avoidance displays use symbols that change color, and they indicate the control inputs that will take the aircraft out of the red zone and into the green zone on the vertical speed indicator. The holistic and pictorial design of many high-tech cockpit system displays allows for quick detection of some states that are outside normal parameters. Woods (1996), however, described the deceptiveness of these designs as characterized by “apparent simplicity, real complexity.”

In their efforts to highlight and simplify electronic information, designers of technological aids in hybrid ecologies have inadvertently led the decision maker down a dangerous path by fostering the assumption that the data and systems they represent can be managed in an intuitive fashion. This is a false assumption — intuition is an ineffective tactic in an electronic world geared toward coherence. Within the seemingly “intuitive” displays reside numerical data that signify different commands or values in different modes, and these data must be processed analytically. Extending Woods’ description of electronic cockpits above, the cognitive processing demanded by them can be characterized as “apparent intuitiveness, real analysis.”

Currently, the mismatch between cognitive requirements of hybrid ecologies and the cognitive strategies afforded by systems and displays makes it extremely difficult to achieve and maintain coherence. On one hand, system displays and the opacity of system functioning foster intuition and discourage analysis; on the other hand, the complexity of deterministic systems makes them impossible to manage intuitively, and requires that the pilot move toward analytical cognitive processing. Recognition of this mismatch is the first step toward rectifying it.

Designing for the hybrid ecology demands an acknowledgment that it contains a coherence-based, deterministic component. This means that developers have to design systems that are not only reliable in terms of correspondence (empirical accuracy) but also interpretable in terms of coherence. Principles of human-centered automation prescribe that the pilot must be actively involved, adequately informed, and able to monitor and predict the functioning of automated systems (Billings, 1996). To this list should be added the requirement that the design of systems technology and automated displays elicit the cognition appropriate for accomplishing judgment and decision making in the hybrid ecology. It is critical to design systems that will aid human metacognition, that is, help decision makers recognize when an intuitive response is inappropriate and more analytical cognition is needed.

Recognition of coherence as a strategic goal and analysis as a requisite cognitive tactic in hybrid ecologies carries implications for research models, as well as for system design. In order to examine and predict human behavior in the hybrid decision ecology, it is important to determine how individual factors, cognitive requirements, and situational features as well as interactions among them can be modeled and assessed. Additionally, the characteristics defining good judgment and decision making in the hybrid ecology suggest new criteria for expertise. Research issues pertaining to these variables and interactions include:

Neither correspondence competence nor coherence competence alone is sufficient in these domains. Intuitive, recognitional correspondence strategies have to be supplemented with or replaced by careful scrutiny and attention to detail. The pilot searching for coherence must know how aircraft systems work, and must be able to describe the functional relations among the variables in a system (Hammond, 2000). Additionally, the pilot must be able to combine data and information from internal, electronic systems with cues in the external, physical environment. Low-tech cues such as smoke or sounds can often provide critical input to the diagnosis of high-tech system anomalies. Pilots must also recognize when contextual factors impose physical constraints on the accuracy of electronic prescriptions. It does no good, for example, to make an electronically “coherent” landing with all systems in sync if a truck is stalled in the middle of the runway.

An additional component of expertise in hybrid ecologies will be the ability to select the appropriate strategy for the situation at hand. Jacobson and Mosier (2004), for example, noted that the decision strategies mentioned in pilots’ incident reports varied as a function of the context within which a decision event occurred. During traffic problems in good visibility conditions, pilots tended to rely on intuitive correspondence strategies, reacting to patterns of probabilistic cues; for incidents involving equipment problems, however, pilots were more likely to use analytical coherence strategies, checking and cross-checking indicators to formulate a diagnosis of the situation.

The impact of electronic failures (infrequent but not impossible) on judgment strategy must also be taken into account. When systems fail drastically and coherence is no longer achievable, correspondence competence may become critical. A dramatic illustration of this type of event occurred in 1989, when a United Airlines DC-10 lost all hydraulics, destroying system coherence and relegating the cockpit crew to intuitive correspondence to manage the aircraft (see Hammond, 2000, for a discussion).

Additionally, many situations may call for alternation between coherence and correspondence strategies and several shifts along the cognitive continuum from intuition to quasi-rationality to analysis. While landing an aircraft, for example, a pilot may first examine cockpit instruments with an analytical eye, set up the appropriate mode and flight parameters to capture the glide slope, and then check cues outside the window to see whether everything “looks right.” In some cases, integration of information inside and outside the cockpit may entail delegation of strategies, as in the standard landing routine that calls for one pilot to keep his or her head “out the window” while the other monitors electronic instruments.

Experts in hybrid ecologies must be aware of the dangers of relying too heavily on technology for problem detection. A byproduct of heavy reliance is automation bias, a flawed decision process characterized by the use of automated information as a heuristic replacement for vigilant information seeking and processing. This non-analytical, non-coherent strategy has been identified as a factor in professional pilot decision errors (e.g., Mosier, Skitka, Dunbar & McDonnell, 2001; Mosier, Skitka, Heers & Burdick, 1998).

Ironically, experience can work against a pilot in the electronic cockpit, in that it may induce a false coherence: the tendency to see what he or she expects to see rather than what is actually there. This effect is illustrated by the phantom memory phenomenon found in our part-task simulation data (Mosier et al., 1998, 2001). Pilots in these studies “remembered” the presence of several indicators that confirmed the existence of an engine fire, and justified their decision to shut down the engine based on this recollection. In fact, these indicators were not present. The phantom memory phenomenon illustrates “the strength of our intuitive predilection for the perception of coherence, even when it is not quite there” (Hammond, 2000, p. 106), and highlights the fact that pilots may not be aware of non-coherent states even when the evidence is in front of them. Mosier et al. (1998) found that pilots who reported a higher internalized sense of accountability for their interactions with automation verified correct automation functioning more often and committed fewer errors than other pilots. These results suggest that accountability is one factor that encourages more analytical tactics geared toward coherence; other factors and interventions with this effect must still be identified.

This is perhaps the most important of these research questions, and it will be central to the design of the next generation of aircraft. Aircraft designers and manufacturers must address two critical questions: (1) How much information is needed? (2) How should information be displayed? The goal will be to facilitate the processing of all relevant information.

We have begun to explore the issue of information search and information use in the electronic cockpit. In a recent study (Mosier, Sethi, McCauley, Khoo, & Orasanu, 2007), we found that time pressure, a common factor in airline operations, had a strong negative effect on the coherence of diagnosis and decision making, and that the presence of contradictory information (non-coherence in indicators) heightened these negative effects. Overall, pilots responded to information conflicts by taking more time to come to a diagnosis, checking more information, and performing more double-checks of information. However, they were significantly less thorough in their information search when pressed to come to a diagnosis quickly than when under no time pressure. As a result, they tended to miss relevant information under time pressure, and their diagnoses were less accurate. These results confirm both the need for coherence in judgment and diagnosis and the difficulty of maintaining it under time pressure.

Coherence in the hybrid ecology is a necessary state for correspondence in the physical world; however, discovering the most effective means to facilitate it requires a shift in our own cognitive activity, as researchers, toward models and research paradigms that will allow us to understand more accurately the factors affecting behavior in this milieu. Examining cognition within the coherence/correspondence framework offers the possibility of finding solutions across hybrid ecologies, resulting in a seamless integration of their coherence and correspondence components. By doing this, we will gain a much broader perspective on the issues and potential problems or hazards within the hybrid environment, as well as the ability to make informed predictions regarding the cognitive judgment processes guiding the behavior of decision makers.

Baron, S. (1988). Pilot control. In E. L. Wiener & D. C. Nagel (Eds.), Human factors in aviation (pp. 347–386). San Diego, CA: Academic Press.

Billings, C. E. (1996). Human-centered aviation automation: Principles and guidelines (NASA Technical Memorandum No. 110381). Moffett Field, CA: NASA Ames Research Center.

Brunswik, E. (1956). Perception and the representative design of psychological experiments. Berkeley, CA: University of California Press.

Degani, A., Shafto, M., & Kirlik, A. (2006). What makes vicarious functioning work? Exploring the geometry of human-technology interaction. In A. Kirlik (Ed.), Adaptive perspectives on human-technology interaction. New York, NY: Oxford University Press.

Dunwoody, P. T., Haarbauer, E., Mahan, R. P., Marino, C., & Tang, C. (2000). Cognitive adaptation and its consequences: A test of cognitive continuum theory. Journal of Behavioral Decision Making, 13, 35–54.

Fadden, D. (1990). Aircraft automation changes. In Abstracts of AIAA-NASA-FAA-HFS Symposium, Challenges in Aviation Human Factors: The National Plan. Washington, DC: American Institute of Aeronautics and Astronautics.

Flach, J. M., & Bennett, K. B. (1996). A theoretical framework for representational design. In R. Parasuraman & M. Mouloua (Eds.), Automation and human performance: Theory and applications (pp. 65–87). New Jersey: Lawrence Erlbaum Associates.

Hammond, K. R. (1996). Human judgment and social policy. New York: Oxford University Press.

Hammond, K. R. (2000). Judgments under stress. New York: Oxford University Press.

Hammond, K. R. (2007). Beyond rationality: The search for wisdom in a troubled time. New York: Oxford University Press.

Hammond, K. R., Hamm, R. M., Grassia, J., & Pearson, T. (1987). Direct comparison of the efficacy of intuitive and analytical cognition in expert judgment. IEEE Transactions on Systems, Man, and Cybernetics, SMC-17, 753–770.

Jacobson, C., & Mosier, K. L. (2004). Coherence and correspondence decision making in aviation: A study of pilot incident reports. International Journal of Applied Aviation Studies, 4, 123–134.

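Ministère de l’Équipement, des Transports et du Tourisme. (1993). Rapport de la Commission d’Enquête sur l’accident survenu le 20 janvier 1992 près du Mont Sainte-Odile à l’Airbus A320 immatriculé F-GGED exploité par la Compagnie Air Inter [Report of the Commission of Inquiry into the accident of 20 January 1992 near Mont Sainte-Odile involving the Airbus A320 registered F-GGED operated by Air Inter]. Paris: Author.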

Mosier, K. L., Sethi, N., McCauley, S., Khoo, L., & Orasanu, J. (2007). What you don’t know can hurt you: Factors impacting diagnosis in the automated cockpit. Human Factors, 49, 300–310.

Mosier, K. L., Skitka, L. J., Dunbar, M., & McDonnell, L. (2001). Air crews and automation bias: The advantages of teamwork? International Journal of Aviation Psychology, 11, 1–14.

Mosier, K. L., Skitka, L. J., Heers, S., & Burdick, M. D. (1998). Automation bias: Decision making and performance in high-tech cockpits. International Journal of Aviation Psychology, 8, 47–63.

Orlady, H. W., & Orlady, L. M. (1999). Human factors in multi-crew flight operations. Vermont: Ashgate Publishing Company.

Parasuraman, R., & Riley, V. (1997). Humans and automation: Use, misuse, disuse, abuse. Human Factors, 39, 230–253.

Sarter, N., & Woods, D. D. (1994). Pilot interaction with cockpit automation II: An experimental study of pilots’ model and awareness of the flight management system. International Journal of Aviation Psychology, 4, 1–28.

Sarter, N. B., Woods, D. D., & Billings, C. E. (1997). Automation surprises. In G. Salvendy (Ed.), Handbook of human factors/ergonomics (2nd ed., pp. 1926–1943). New York: Wiley.

Vicente, K. J. (1990). Coherence- and correspondence-driven work domains: Implications for systems design. Behavior and Information Technology, 9(6), 493–502.

Wickens, C. D., & Flach, J. M. (1988). Information processing. In E. L. Wiener & D. C. Nagel (Eds.), Human factors in aviation (pp. 111–156). San Diego, CA: Academic Press.

Woods, D. D. (1996). Decomposing automation: Apparent simplicity, real complexity. In R. Parasuraman & M. Mouloua (Eds.), Automation and human performance: Theory and applications (pp. 3–18). New Jersey: Lawrence Erlbaum Associates.

Woods, D. D., & Sarter, N. B. (2000). Learning from automation surprises and “going sour” accidents. In N. B. Sarter & R. Amalberti (Eds.), Cognitive engineering in the aviation domain (pp. 327–353). Mahwah, NJ: Lawrence Erlbaum Associates.
