Judgment and Decision Making, Vol. 14, No. 6, November 2019, pp. 721-727

Wronging past rights: The sunk cost bias distorts moral judgment

Ethan A. Meyers*

Michał Białek#

Jonathan A. Fugelsang$

Derek J. Koehler$

Ori Friedman$


When people have invested resources into an endeavor, they typically persist in it, even when it becomes obvious that it will fail. Here we show this bias extends to people’s moral decision-making. Across two preregistered experiments (N = 1592) we show that people are more willing to proceed with a futile, immoral action when costs have been sunk (Experiments 1A and 1B). Moreover, we show that sunk costs distort people’s perception of morality by increasing how acceptable they find actions that have received past investment (Experiment 2). We find these results in contexts where continuing would lead to no obvious benefit and only further harm. We also find initial evidence that the bias has a larger impact on judgment in immoral than in non-moral contexts. Our findings illustrate a novel way that the past can affect moral judgment. Implications for rational moral judgment and models of moral cognition are discussed.

Keywords: sunk costs, morality, decision-making, judgment, open data, open materials, preregistered

1 Introduction

Torture is widely morally condemned (UNCAT, 1984). Nonetheless, six days after 9/11, the U.S. government allowed the CIA to use “enhanced interrogation techniques” to prevent further terrorist attacks. This decision reflected a utilitarian tendency to permit immoral actions when the potential benefits are sufficient. Having made this decision, the U.S. invested “well over $300 million in non-personnel costs” (SSCI, 2014) and moral resources (e.g., reputation) in its interrogation program. As early as three months after interrogations began, reports suggested that these techniques were ineffective in preventing attacks, and yet the program persisted over the next decade (Dedman, 2006; SSCI, 2014). The government thus persisted in immoral actions even after it became evident that they would not secure benefits.

In this paper, we suggest that persistence in immoral but likely futile actions may often reflect the sunk cost bias: the tendency for decision-makers to persist in an endeavor simply because they have already invested resources in it (Arkes & Ayton, 1999; Arkes & Blumer, 1985; Feldman & Wong, 2018; Sweis et al., 2018). For example, imagine yourself as the president of an aviation company. You have recently financed the development of a stealth plane. Unfortunately, you learn that one of your competitors has just begun to market a superior (more advanced and cheaper) stealth plane. Would you continue investing to finish the project? When people consider such decisions, they tend to feel inclined to continue investing because they want to avoid waste (Arkes, 1996), to justify their past decisions (Aronson, 1969), or to avoid harming their social reputation (e.g., Kanodia, Bushman & Dickhaut, 1989). Returning to enhanced interrogation, the government may have persisted in using immoral techniques simply because of the costs already sunk.

Investigating this possibility will be informative about how the past (in the form of sunk costs) affects present moral judgments and moral permissibility. We know that adults often consider the future when making moral judgments. For example, the future is at stake in almost all experiments on the permissibility of harming others to secure a greater good, because this benefit lies in the future (Cushman, Young & Hauser, 2006; Greene & Haidt, 2002). However, less is known about how consideration of the past affects moral judgment. Work on moral licensing shows that people who have committed good actions in the recent past believe they can now engage in less moral behavior (Blanken, van de Ven & Zeelenberg, 2015; Monin & Miller, 2001; Mullen & Monin, 2016). Likewise, work on escalation of commitment shows that individuals are more likely to engage in unethical behavior when their course of action is failing (Armstrong, Williams & Barrett, 2004; Street, Robertson & Geiger, 1997). For example, participants who imagined they had recently been hired to manage a firm’s investments were more likely to engage in insider trading when returns on past investments were poor (Street & Street, 2006).

We suggest that sunk costs are another way in which the past affects moral judgments. We see two ways this could happen. First, sunk costs could increase people’s willingness to persist in immoral actions; second, they could make these actions seem less immoral. For example, having committed moral misdeeds to help secure a positive goal, people could remain willing to engage in these misdeeds, and view them as more acceptable, even after the actions are known to be futile. We might expect this if the mechanisms that normally underlie the sunk cost bias operate in the same way in situations involving immoral actions (e.g., enhanced interrogation). This possibility is broadly consistent with domain-general models of moral cognition, in which people are similarly sensitive to inputs (e.g., framing effects, omission bias) in both moral and non-moral contexts (Cushman & Young, 2011; Rai & Holyoak, 2010; for a review see Osman & Wiegmann, 2017).

Alternatively, the sunk cost bias might not affect judgments about immoral actions. We know that some interventions can reduce the bias (Hafenbrack, Kinias & Barsade, 2014; Northcraft & Neale, 1986). In the stealth plane scenario, for example, more people elect to end the project when ending it is framed as taking action (Feldman & Wong, 2018). Immorality may also counteract the sunk cost bias. To illustrate, if people learn that enhanced interrogation is futile for preventing future terrorist attacks, the moral repugnance of these actions may counteract the tendency to continue investing in failing courses of action. This possibility is broadly consistent with the application of specific moral heuristics such as “do no harm” (Rai & Fiske, 2011; for a review see Waldmann, Nagel & Wiegmann, 2012).

To assess the impact of sunk costs on moral judgments, we conducted two preregistered experiments. In Experiments 1A and 1B we demonstrate that people are more willing to proceed with a fruitless, immoral course of action when costs have been sunk. In Experiment 2 we show that sunk costs distort people’s perception of morality by increasing how acceptable they find actions that have received past investment. Across both experiments we find these results in contexts where continuing with the action would only cause further harm.

2 Experiments 1A and 1B

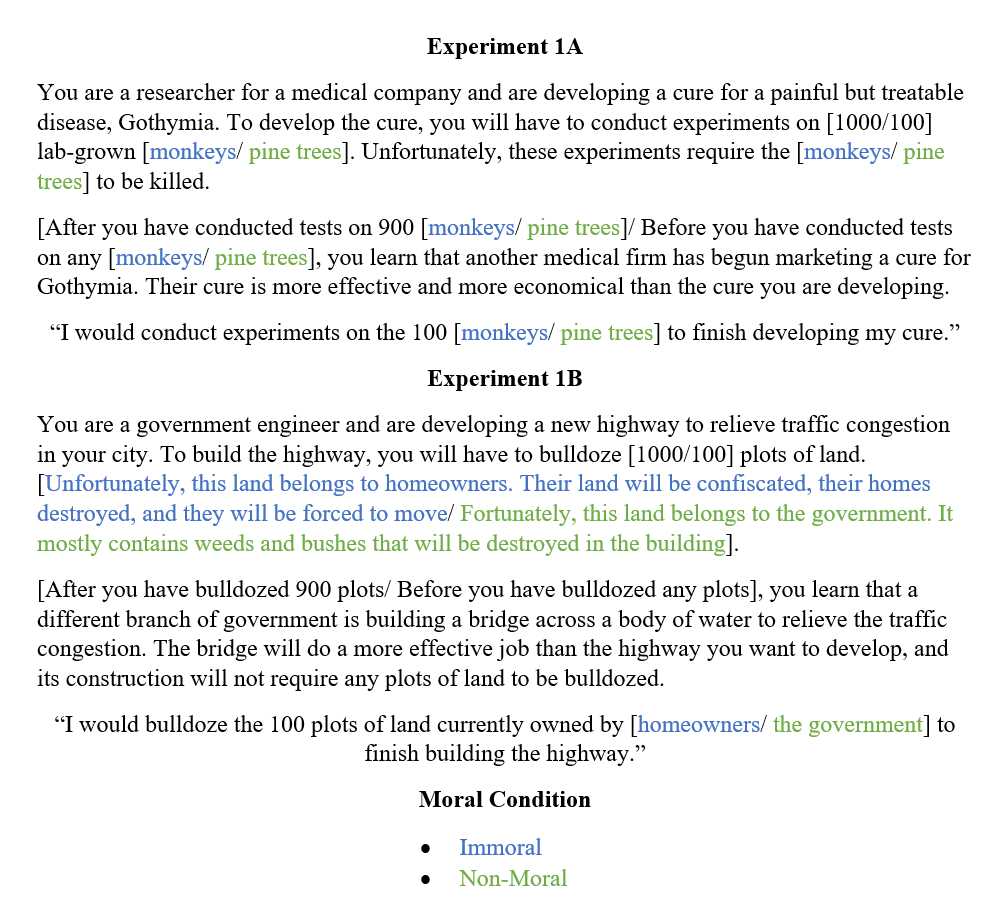

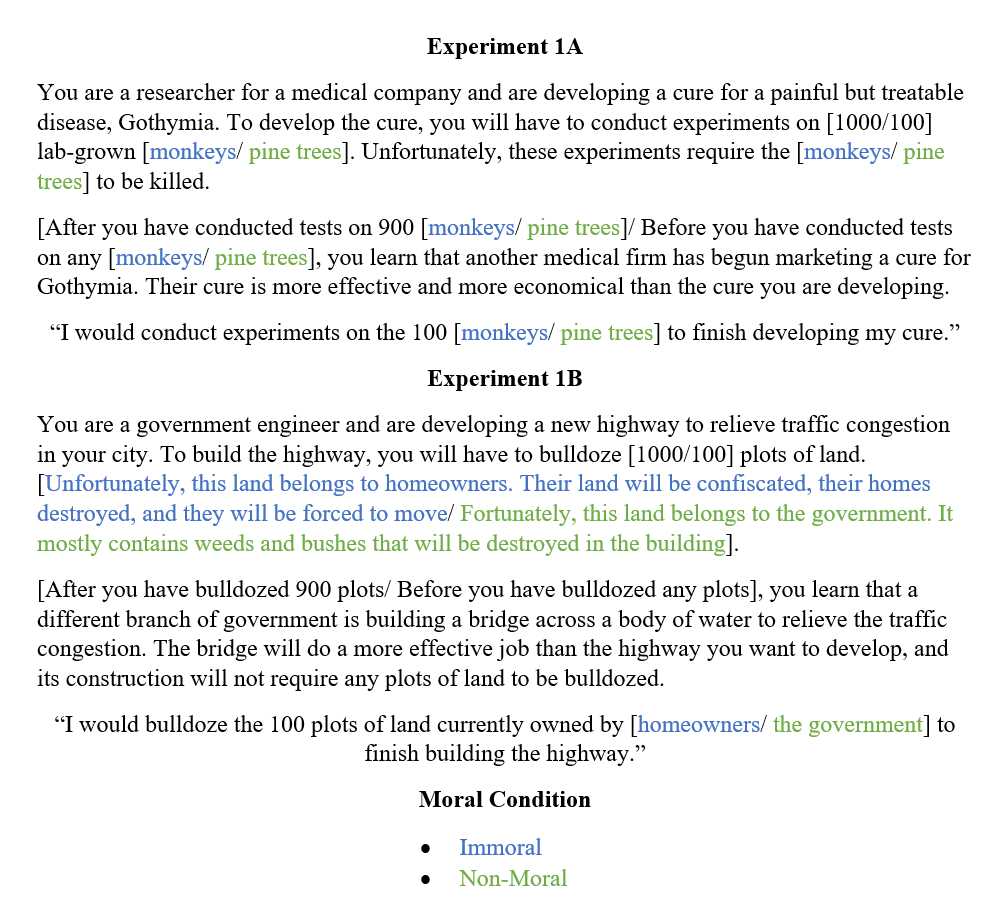

Figure 1: Vignettes and action statement for Experiments 1A and 1B. Variations based on sunk cost condition feature black text within square brackets. Variations based on moral condition feature colored text (blue = Immoral, green = Non-Moral) within square brackets.

2.1 Method

Our data and materials are available on the Open Science Framework (OSF): https://osf.io/vxp56/. These experiments were preregistered; the preregistrations are located at https://osf.io/urhda/ (Experiment 1A) and https://osf.io/8n5aq/ (Experiment 1B).

2.1.1 Participants

Our samples (Experiment 1A, N = 391; Experiment 1B, N = 518) were recruited via TurkPrime (Litman, Robinson & Abberbock, 2017). We excluded additional participants (Experiment 1A, n = 212; Experiment 1B, n = 213) who failed at least one of two comprehension questions.¹ In all studies, participants were required to be U.S. residents and to have a Mechanical Turk HIT approval rating of at least 95%. Participants could sign up for only one of the two experiments. All studies in this article were approved by the Office of Research Ethics at the University of Waterloo.

2.1.2 Procedure

Participants read a single first-person vignette and rated their agreement with a proposed course of action; see Figure 1 for the materials. In each vignette, participants were assigned an important goal (developing a cure for a disease in Experiment 1A; building a highway to relieve traffic congestion in Experiment 1B). However, recent information indicated that the goal was probably futile (another company had already developed a better drug; a different government agency had already devised a better solution to traffic congestion). Participants decided whether they should continue pursuing the goal even though doing so would require expending resources.

The vignette varied across participants in a 2 × 2 between-subjects design, manipulating 1) whether expending the resources required a moral violation (killing lab monkeys; confiscating and bulldozing citizens’ houses) or not (killing farmed pine trees; bulldozing government-owned land), and 2) whether some costs had already been sunk (many monkeys or pine trees already killed for the cure; many houses or government plots already bulldozed) or not.²

To respond, participants read a statement asserting that they would pursue the goal (e.g., “I would conduct experiments on the 100 monkeys to finish developing my cure.”) and rated their agreement with the action on a scale from 1 (Strongly Agree) to 6 (Strongly Disagree); we reverse coded responses so that higher scores reflected greater agreement. Next, participants answered two multiple-choice comprehension questions and a few demographic questions.
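The reverse coding described above maps a rating r on the 1 to 6 scale to 7 − r. After reverse coding, 1 (Strongly Agree) becomes 6 and 6 (Strongly Disagree) becomes 1. Below is a minimal Python sketch, assuming integer responses. The function name `reverse_code` is hypothetical and is not taken from the authors’ analysis scripts.

```python
def reverse_code(response: int, lo: int = 1, hi: int = 6) -> int:
    """Reverse-code a Likert response so that higher scores mean greater agreement.

    Hypothetical helper: maps lo -> hi and hi -> lo, and everything in between
    symmetrically, via lo + hi - response.
    """
    if not lo <= response <= hi:
        raise ValueError(f"response {response} outside scale {lo}-{hi}")
    return lo + hi - response


# 1 = Strongly Agree on the raw scale, so it becomes 6 after reverse coding.
raw = [1, 2, 3, 4, 5, 6]
print([reverse_code(r) for r in raw])  # [6, 5, 4, 3, 2, 1]
```

The same transformation applies to the moral acceptability ratings in Experiment 2, which used the same 1 to 6 scale.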

Table 1: Comparisons of willingness to act (Experiments 1A and 1B) and moral acceptability (Experiment 2) across sunk cost conditions (Sunk, Not-Sunk).

| Experiment | Condition | t (df) | p | d [95% CI] |
|---|---|---|---|---|
| 1A (Cure) | Immoral | 4.71 (194) | < .001 | .68 [.39, .97] |
| | Non-Moral | 1.84 (193) | .034 | .26 [.02, .55] |
| 1B (Bulldoze) | Immoral | 6.68 (255) | < .001 | .83 [.58, 1.1] |
| | Non-Moral | 2.93 (259) | .004 | .36 [.12, .61] |
| 2 | Cure | 3.72 (435) | < .001 | .36 [.17, .55] |
| | Bulldoze | 2.46 (244) | .007 | .31 [.06, .57] |

Note. Levene’s test was significant (p < .05) for the moral conditions of both Experiments 1A and 1B, but with a Welch correction applied the results are nearly identical. Student’s t-tests are reported here for ease of reading.
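The effect sizes in Table 1 can be approximately recovered from each t statistic. For two independent groups, Cohen’s d = t·√(1/n₁ + 1/n₂). The table reports only df = n₁ + n₂ − 2, so the sketch below assumes equal group sizes (n₁ = n₂ = (df + 2)/2), which gives d = 2t/√(df + 2). The actual cell sizes were probably not exactly equal. The recomputed values should therefore be read as matching the reported ones only to within about .01, and the confidence intervals are not reproduced. The helper names are hypothetical.

```python
import math

# (t, df, reported d) for each row of Table 1
TABLE_1 = {
    "1A Immoral": (4.71, 194, 0.68),
    "1A Non-Moral": (1.84, 193, 0.26),
    "1B Immoral": (6.68, 255, 0.83),
    "1B Non-Moral": (2.93, 259, 0.36),
    "2 Cure": (3.72, 435, 0.36),
    "2 Bulldoze": (2.46, 244, 0.31),
}


def d_from_t(t: float, n1: float, n2: float) -> float:
    """Cohen's d for two independent groups, recovered from Student's t."""
    return t * math.sqrt(1 / n1 + 1 / n2)


def d_from_t_equal_n(t: float, df: int) -> float:
    """Same as d_from_t, assuming equal groups: n1 = n2 = (df + 2) / 2."""
    n = (df + 2) / 2
    return d_from_t(t, n, n)


for label, (t, df, d_reported) in TABLE_1.items():
    d = d_from_t_equal_n(t, df)
    print(f"{label:13s} d = {d:.3f} (reported {d_reported:.2f})")
```

If the per-condition group sizes were known, passing them to `d_from_t` directly would remove the equal-n assumption.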

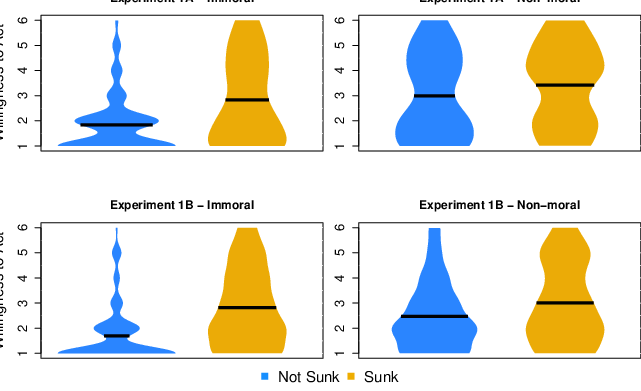

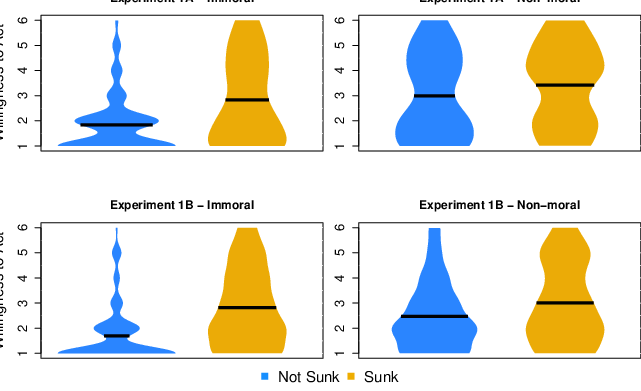

Figure 3: The distribution of willingness to act (WTA) across immoral/non-moral and sunk/not-sunk conditions of Experiments 1A and 1B. The dark bar represents the mean value of each condition. The width of the bars represents the number of participants choosing that value: the wider the portion, the greater the number.

2.2 Results

We analyzed responses from each experiment using a separate 2 (cost: sunk, not-sunk) × 2 (morality: immoral, non-moral) ANOVA. Both ANOVAs revealed main effects of both factors. Willingness to act was greater when costs were sunk than when they were not (Experiment 1A, F(1, 387) = 20.31, p < .001, ηp² = .050; Experiment 1B, F(1, 514) = 44.59, p < .001, ηp² = .080), and greater for non-moral than for immoral actions (Experiment 1A, F(1, 387) = 30.02, p < .001, ηp² = .050; Experiment 1B, F(1, 514) = 14.63, p < .001, ηp² = .028). There was also an interaction between these factors in Experiment 1B (Highway), F(1, 514) = 5.58, p = .019; as shown in Table 1, the sunk cost effect was larger in the immoral condition than in the non-moral condition. This interaction was not significant in Experiment 1A (Medical Cure), F(1, 387) = 3.10, p = .079, although the pattern of data was similar (Figure 3).
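Partial eta squared can be computed directly from an F ratio and its degrees of freedom: ηp² = (F × df_effect)/(F × df_effect + df_error). In a fully between-subjects 2 × 2 ANOVA, df_error = N − 4. That gives 391 − 4 = 387 in Experiment 1A and 518 − 4 = 514 in Experiment 1B. Below is a stdlib-only Python sketch with hypothetical helper names, applied to the sunk cost main effects and the Experiment 1B morality effect.

```python
def error_df_between(n_total: int, n_cells: int = 4) -> int:
    """Error df for a fully between-subjects factorial ANOVA (N minus number of cells)."""
    return n_total - n_cells


def partial_eta_squared(f: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared recovered from an F ratio and its degrees of freedom."""
    return (f * df_effect) / (f * df_effect + df_error)


df_1a = error_df_between(391)  # 387
df_1b = error_df_between(518)  # 514

# (F, error df, reported partial eta squared); every effect here has df_effect = 1
checks = {
    "1A sunk cost": (20.31, df_1a, 0.050),
    "1B sunk cost": (44.59, df_1b, 0.080),
    "1B morality": (14.63, df_1b, 0.028),
}
for label, (f, df_err, reported) in checks.items():
    eta = partial_eta_squared(f, 1, df_err)
    print(f"{label}: partial eta^2 = {eta:.3f} (reported {reported:.3f})")
```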

In summary, Experiments 1A and 1B demonstrated that sunk costs can affect our willingness to act not only in non-moral contexts, but also in contexts where the moral component is clear (e.g., sacrificing animals for scientific testing). Further, we found that sunk costs may have an even larger impact on our decision to act in an immoral context. However, this conclusion is tentative because the financial cost of the resources may have differed between the immoral and non-moral contexts (see General Discussion). These experiments provide initial evidence that past investments can affect our moral judgment by increasing our willingness to persist in immoral actions.

Next, we asked whether sunk costs could make immoral actions seem less immoral. Our test question in Experiments 1A and 1B did not directly address this, because many factors besides morality can affect willingness to proceed with an action. For example, a person might be willing to proceed with an immoral action if they think it is in their self-interest. Hence, to focus directly on morality, we asked about the acceptability of acting.

3 Experiment 2

3.1 Method

This experiment was preregistered; the preregistration is available at https://osf.io/c4esr/.

3.1.1 Deviation from preregistration

Due to a coding error, twice as many participants as planned were recruited for the Medical Cure condition. No other deviations occurred.

3.1.2 Participants

Our final sample included 683 participants (Bulldoze n = 246; Medical Cure n = 437) recruited via TurkPrime. We excluded a further 407 participants who failed at least one of two comprehension questions.

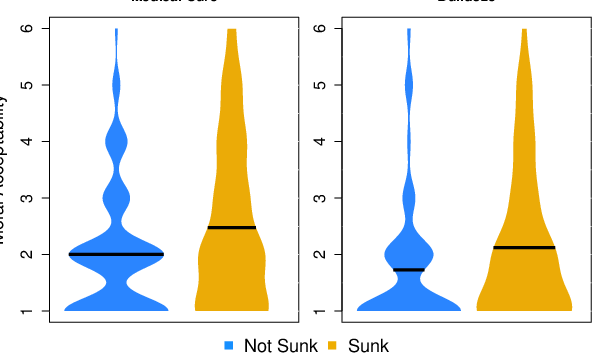

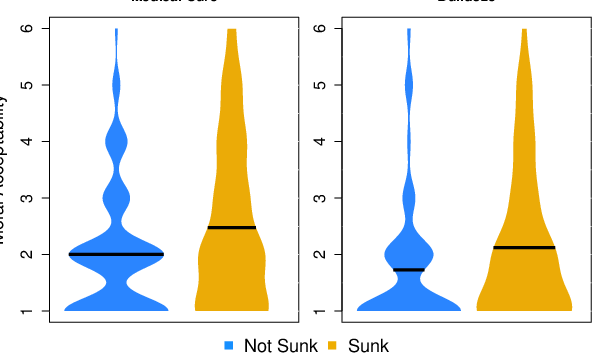

Figure 2: The distribution of agreement with a moral acceptability statement across the sunk cost conditions of each vignette. The black horizontal bar represents the mean value of each condition. The width of the bars represents the number of participants choosing that value: the wider the portion, the greater the number.

3.1.3 Procedure

Participants read a single vignette and then rated their agreement with a statement about the moral acceptability of a proposed course of action. The vignette was the immoral version of one of the two previously used vignettes (Medical Cure; Highway), and the sunk cost conditions were the same as before (Sunk Costs: 900/1000 resources invested; No Sunk Costs: 0/100 resources invested). To rate moral acceptability, participants read a statement asserting the moral acceptability of pursuing the goal (e.g., “It would be morally acceptable to conduct experiments on the 100 monkeys to finish developing my cure.”) and rated their agreement with the statement on a scale from 1 (Strongly Agree) to 6 (Strongly Disagree); we reverse coded responses so that higher scores reflected greater agreement. Participants then answered the same comprehension and demographic questions used previously.
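We read “900/1000” as 900 of 1,000 units already spent and “0/100” as none of 100 spent. On that reading, the two conditions differ only in past investment: in both, finishing requires the same 100 further units (e.g., the 100 monkeys in the test statement). The hypothetical sketch below makes this explicit. It shows that a purely forward-looking rule, one that compares remaining cost with remaining benefit and ignores `invested`, gives the same answer in both conditions. The class, its field names, and the unit-cost decision rule are illustrative assumptions, not part of the authors’ materials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    """One sunk cost condition of the Experiment 2 vignettes (hypothetical model)."""
    name: str
    invested: int  # resources already used (sunk)
    total: int     # resources needed to finish the project

    @property
    def remaining(self) -> int:
        return self.total - self.invested


SUNK = Condition("Sunk Costs", invested=900, total=1000)
NOT_SUNK = Condition("No Sunk Costs", invested=0, total=100)


def forward_looking_choice(cond: Condition, benefit: float, unit_cost: float = 1.0) -> bool:
    """Proceed only if the future benefit exceeds the future cost.

    cond.invested is deliberately ignored: sunk resources cannot be
    recovered whichever option is chosen.
    """
    return benefit > cond.remaining * unit_cost


# The vignettes make the goal futile, so the remaining benefit is taken to be 0.
for cond in (SUNK, NOT_SUNK):
    print(cond.name, cond.remaining, forward_looking_choice(cond, benefit=0.0))
```

Under these assumptions, the rule cannot produce a difference between the conditions. A difference in willingness to act or in rated acceptability must therefore reflect the 900 units already sunk.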

3.2 Results

We analyzed responses to each vignette using a separate one-tailed independent-samples t-test. Both tests revealed sunk cost effects (see Table 1): moral acceptability was greater when costs were sunk than when there were no sunk costs (see Figure 2). Extending the findings from Experiments 1A and 1B, sunk costs thus affected participants’ judgments of moral acceptability by increasing the perceived acceptability of an immoral action.
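The Experiment 2 p values in Table 1 come from one-tailed tests. As a rough check, the upper-tail probability P(T ≥ t) of Student’s t distribution can be computed with only the Python standard library. The sketch integrates the density from 0 to t with Simpson’s rule and subtracts the result from .5. This numerical-integration approach is our own illustrative choice. In practice a statistics package (for example `scipy.stats.t.sf`) would normally be used, and the function names here are hypothetical.

```python
import math


def t_pdf(x: float, df: float) -> float:
    """Density of Student's t with df degrees of freedom (log-gamma avoids overflow)."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))


def one_tailed_p(t: float, df: float, n: int = 2000) -> float:
    """Upper-tail p value P(T >= t) for t >= 0.

    Uses the symmetry of the t distribution, P(T >= t) = 0.5 - P(0 <= T <= t),
    with the integral approximated by composite Simpson's rule over n intervals.
    """
    if t < 0:
        raise ValueError("this sketch only handles t >= 0")
    if n % 2:
        raise ValueError("Simpson's rule needs an even number of intervals")
    h = t / n
    total = t_pdf(0.0, df) + t_pdf(t, df)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    return 0.5 - total * h / 3


# Experiment 2 rows of Table 1
for label, t, df in [("Cure", 3.72, 435), ("Bulldoze", 2.46, 244)]:
    print(f"{label}: one-tailed p = {one_tailed_p(t, df):.4f}")
```

With df = 1 the t distribution is the standard Cauchy, so `one_tailed_p(1.0, 1)` should return .25. This is a convenient sanity check on the integration.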

4 General Discussion

We found that the sunk cost bias extends to moral judgments. When costs were sunk, participants were more willing to proceed with a futile, immoral action than when costs were not sunk. For example, they were more willing to sacrifice monkeys to develop a medical cure when some monkeys had already been sacrificed than when none had been. Moreover, people judged these actions as more acceptable when costs were sunk. Importantly, these effects occurred even though the benefit of the proposed immoral action had been eliminated.

Our findings illustrate a novel way that the past can affect moral judgment. Moral research conducted to date has focused extensively on future consequences (e.g., Baez et al., 2017; Miller & Cushman, 2013). Although this makes normative sense, because only the future should be relevant to decisions, it is well known that choice is affected by irrelevant factors such as past investment (Kahneman, 2011; Kahneman, Slovic & Tversky, 1982; Szaszi, Palinkas, Palfi, Szollosi & Aczel, 2018; Tversky & Kahneman, 1974). Our findings show that, as with other (non-moral) judgments, people’s moral judgments are affected by factors that rational agents “should” ignore.

Further, our findings show that a major decision bias (i.e., the sunk cost effect) extends to moral judgment. This finding is broadly consistent with earlier research showing that moral judgments are affected by such biases: when making moral judgments, people are sensitive to how options are framed (e.g., Shenhav & Greene, 2010) and prefer acts of omission over acts of commission (e.g., Bostyn & Roets, 2016). For example, people make different moral judgments when a decision is presented in a gain frame than when it is presented in a loss frame, even though the two decisions are logically identical (Kern & Chugh, 2009). Likewise, people judge lying to the police about who is at fault in a car accident (a harmful commission) to be more immoral than not informing the police precisely who is at fault (a harmful omission) (Spranca, Minsk & Baron, 1991). However, unlike most of these previous demonstrations, our findings directly compare the presence of decision-making biases across moral and non-moral contexts (also see Cushman & Young, 2011).

In Experiments 1A and 1B, we also found that the sunk cost bias may be stronger in moral decision-making than in other situations (the interaction was significant in Experiment 1B, with a similar but nonsignificant pattern in Experiment 1A). This is surprising. In non-moral cases, proceeding with a futile course of action is wasteful. But in the moral versions of our scenarios, proceeding is wasteful, harmful to others, and morally wrong. Yet the difference in willingness to act between the sunk and not-sunk conditions was larger in the immoral condition: adding reasons not to proceed with the action amplified the sunk cost bias. One potential explanation is that people are unwilling to admit that their prior investments were in vain (Brockner, 1992). People succumb to the sunk cost bias in part because they feel a need to justify their past decisions as correct (Ku, 2008; also see Staw, 1976). Likewise, moral judgments seem to generate a much greater need to provide reasons that justify past decisions (Haidt, 2012). Thus, those making decisions in an immoral context might face additional pressure to justify their previous choices, stemming from the nature of moral judgment itself.

Another explanation is that the initial investment was larger in the immoral than in the non-moral condition. In both cases, participants incurred an economic cost, but only in the immoral condition did they incur an additional moral cost. People are more likely to succumb to the sunk cost bias when initial investments are large (Arkes & Ayton, 1999; Arkes & Blumer, 1985; Sweis et al., 2018). Perhaps sunk costs exerted a greater effect in the immoral condition because the past investments were greater (i.e., of two kinds, economic and moral, rather than just one, economic). However, because we do not know whether the economic resources (e.g., pine trees and lab monkeys) were of comparable value, the difference between moral conditions may stem entirely from the lab monkeys being valued more highly and thus representing a larger investment. We are therefore hesitant to draw any strong conclusion from this finding: the difference in sunk cost magnitude could stem from differences in financial costs between the immoral and non-moral contexts.

Our finding that prior moral violations led to increased willingness to act is reminiscent of the “what the hell” effect, in which people who violate their diet then give up on it and continue to overindulge (Cochran & Tesser, 1996; Polivy, Herman & Deo, 2010). We see this as similar to persisting in an immoral course of action after costs have been sunk: after engaging in a morally equivocal act, people may feel disinhibited and willing to continue the act even when its immorality becomes clear. Likewise, people may persist in an attempt to maintain the status quo (Kahneman, Knetsch & Thaler, 1991; Samuelson & Zeckhauser, 1988). These accounts, though, may not explain why sunk costs changed people’s moral perceptions. One possibility is that this resulted from cognitive dissonance between people’s actions and their moral code (Aronson, 1969; Festinger, 1957; Harmon-Jones & Mills, 1999). For example, sacrificing monkeys to develop a cure may cause dissonance between not wanting to harm and having done so. To resolve this dissonance, people might change their moral perceptions, molding their moral code to fit their behavior.

We close by considering a broader implication of this work. The extension of decision biases to moral judgment has previously been construed as supporting domain-general accounts of morality, which suggest that moral judgment operates similarly to ordinary judgment (Greene, 2015; Osman & Wiegmann, 2017). If morality is not unique, one could reasonably expect a factor that affects ordinary judgment to affect moral judgment as well. Thus, if information irrelevant to the decision at hand (e.g., past investments) influences whether we continue to bulldoze land to build a highway, it should also influence the same bulldozing decision when it requires confiscating the land. This reasoning is not conclusive, however, and our findings could instead be interpreted as supporting domain-specific accounts (e.g., Mikhail, 2011). For instance, the sunk cost bias was larger in moral judgments (significantly so in Experiment 1B). Any interpretation of our results as evidence for a domain-general account of morality must therefore explain how the varying effect of past investment on judgment is a difference in degree rather than in kind.

5 References

Arkes, H. R. (1996). The psychology of waste. Journal of Behavioral Decision Making, 9(3), 213–224.

Arkes, H. R., & Ayton, P. (1999). The sunk cost and Concorde effects: Are humans less rational than lower animals? Psychological Bulletin, 125(5), 591–600.

Arkes, H. R., & Blumer, C. (1985). The psychology of sunk cost. Organizational Behavior and Human Decision Processes, 35(1), 124–140. https://doi.org/10.1016/0749-5978(85)90049-4.

Armstrong, R. W., Williams, R. J., & Barrett, J. D. (2004). The impact of banality, risky shift and escalating commitment on ethical decision making. Journal of Business Ethics, 53(4), 365–370. https://doi.org/10.1023/B:BUSI.0000043491.10007.9a.

Aronson, E. (1969). The theory of cognitive dissonance: A current perspective. Advances in Experimental Social Psychology, 4, 1–34.

Baez, S., Flichtentrei, D., Prats, M., Mastandueno, R., García, A. M., Cetkovich, M., & Ibáñez, A. (2017). Men, women … who cares? A population-based study on sex differences and gender roles in empathy and moral cognition. PLoS ONE, 12(6), e0179336. https://doi.org/10.1371/journal.pone.0179336.

Blanken, I., van de Ven, N., & Zeelenberg, M. (2015). A meta-analytic review of moral licensing. Personality and Social Psychology Bulletin, 41(4), 540–558. https://doi.org/10.1177/0146167215572134.

Bostyn, D. H., & Roets, A. (2016). The morality of action: The asymmetry between judgments of praise and blame in the action-omission effect. Journal of Experimental Social Psychology, 63, 19–25. https://doi.org/10.1016/j.jesp.2015.11.005.

Brockner, J. (1992). The escalation of commitment to a failing course of action: Toward theoretical progress. Academy of Management Review, 17(1), 39–61. https://doi.org/10.5465/amr.1992.4279568.

Cochran, W., & Tesser, A. (1996). The “what the hell” effect: Some effects of goal proximity and goal framing on performance. In Striving and feeling: Interactions among goals, affect, and self-regulation (pp. 99–120). Hillsdale, NJ, US: Lawrence Erlbaum Associates, Inc.

Cushman, F., & Young, L. (2011). Patterns of moral judgment derive from nonmoral psychological representations. Cognitive Science, 35(6), 1052–1075. https://doi.org/10.1111/j.1551-6709.2010.01167.x.

Cushman, F., Young, L., & Hauser, M. (2006). The role of conscious reasoning and intuition in moral judgment: Testing three principles of harm. Psychological Science, 17(12), 1082–1089. https://doi.org/10.1111/j.1467-9280.2006.01834.x.

Dedman, B. (2006, October 24). Gitmo interrogations spark battle over tactics: The inside story of criminal investigators who tried to stop abuse. http://www.msnbc.msn.com.

Feldman, G., & Wong, K. F. E. (2018). When action-inaction framing leads to higher escalation of commitment: A new inaction-effect perspective on the sunk-cost fallacy. Psychological Science, 29(4), 537–548. https://doi.org/10.1177/0956797617739368.

Festinger, L. (1957). A theory of cognitive dissonance. Stanford University Press.

Greene, J. D. (2015). The rise of moral cognition. Cognition, 135, 39–42. https://doi.org/10.1016/j.cognition.2014.11.018.

Greene, J., & Haidt, J. (2002). How (and where) does moral judgment work? Trends in Cognitive Sciences, 6(12), 517–523.

Hafenbrack, A. C., Kinias, Z., & Barsade, S. G. (2014). Debiasing the mind through meditation: Mindfulness and the sunk-cost bias. Psychological Science, 25(2), 369–376. https://doi.org/10.1177/0956797613503853.

Haidt, J. (2012). The righteous mind: Why good people are divided by politics and religion. New York, NY, US: Pantheon/Random House.

Harmon-Jones, E., & Mills, J. (1999). An introduction to cognitive dissonance theory and an overview of current perspectives on the theory. In Cognitive dissonance: Progress on a pivotal theory in social psychology (Science Conference Series, pp. 3–21). https://doi.org/10.1037/10318-001.

Kahneman, D. (2011). Thinking, fast and slow. New York: Farrar, Straus and Giroux.

Kahneman, D., Knetsch, J. L., & Thaler, R. H. (1991). Anomalies: The endowment effect, loss aversion, and status quo bias. Journal of Economic Perspectives, 5(1), 193–206. https://doi.org/10.1257/jep.5.1.193.

Kahneman, D., Slovic, P., & Tversky, A. (1982). Judgment under uncertainty: Heuristics and biases. Cambridge University Press.

Kanodia, C., Bushman, R., & Dickhaut, J. (1989). Escalation errors and the sunk cost effect: An explanation based on reputation and information asymmetries. Journal of Accounting Research, 27(1), 59. https://doi.org/10.2307/2491207.

Kern, M. C., & Chugh, D. (2009). Bounded ethicality: The perils of loss framing. Psychological Science, 20(3), 378–384. https://doi.org/10.1111/j.1467-9280.2009.02296.x.

Ku, G. (2008). Learning to de-escalate: The effects of regret in escalation of commitment. Organizational Behavior and Human Decision Processes, 105(2), 221–232. https://doi.org/10.1016/j.obhdp.2007.08.002.

Litman, L., Robinson, J., & Abberbock, T. (2017). TurkPrime.com: A versatile crowdsourcing data acquisition platform for the behavioral sciences. Behavior Research Methods, 49(2), 433–442. https://doi.org/10.3758/s13428-016-0727-z.

Mikhail, J. (2011). Elements of moral cognition: Rawls’ linguistic analogy and the cognitive science of moral and legal judgment. Cambridge University Press.

Miller, R., & Cushman, F. (2013). Aversive for me, wrong for you: First-person behavioral aversions underlie the moral condemnation of harm. Social and Personality Psychology Compass, 7(10), 707–718. https://doi.org/10.1111/spc3.12066.

Monin, B., & Miller, D. T. (2001). Moral credentials and the expression of prejudice. Journal of Personality and Social Psychology, 81(1), 33–43. https://doi.org/10.1037/0022-3514.81.1.33.

Mullen, E., & Monin, B. (2016). Consistency versus licensing effects of past moral behavior. Annual Review of Psychology, 67, 363–385. https://doi.org/10.1146/annurev-psych-010213-115120.

Northcraft, G. B., & Neale, M. A. (1986). Opportunity costs and the framing of resource allocation decisions. Organizational Behavior and Human Decision Processes, 37(3), 348–356. https://doi.org/10.1016/0749-5978(86)90034-8.

Osman, M., & Wiegmann, A. (2017). Explaining moral behavior: A minimal moral model. Experimental Psychology, 64(2), 68–81. https://doi.org/10.1027/1618-3169/a000336.

Polivy, J., Herman, C. P., & Deo, R. (2010). Getting a bigger slice of the pie. Effects on eating and emotion in restrained and unrestrained eaters. Appetite, 55(3), 426–430. https://doi.org/10.1016/j.appet.2010.07.015.

Rai, T. S., & Fiske, A. P. (2011). Moral psychology is relationship regulation: Moral motives for unity, hierarchy, equality, and proportionality. Psychological Review, 118(1), 57–75. https://doi.org/10.1037/a0021867.

Rai, T. S., & Holyoak, K. J. (2010). Moral principles or consumer preferences? Alternative framings of the trolley problem. Cognitive Science, 34(2), 311–321. https://doi.org/10.1111/j.1551-6709.2009.01088.x.

Samuelson, W., & Zeckhauser, R. (1988). Status quo bias in decision making. Journal of Risk and Uncertainty, 1(1), 7–59.

Shenhav, A., & Greene, J. D. (2010). Moral judgments recruit domain-general valuation mechanisms to integrate representations of probability and magnitude. Neuron, 67(4), 667–677. https://doi.org/10.1016/j.neuron.2010.07.020.

Spranca, M., Minsk, E., & Baron, J. (1991). Omission and commission in judgment and choice. Journal of Experimental Social Psychology, 27(1), 76–105. https://doi.org/10.1016/0022-1031(91)90011-T.

Staw, B. M. (1976). Knee-deep in the big muddy: A study of escalating commitment to a chosen course of action. Organizational Behavior and Human Performance, 16(1), 27–44. https://doi.org/10.1016/0030-5073(76)90005-2.

Street, M. D., Robertson, C., & Geiger, S. W. (1997). Ethical decision making: The effects of escalating commitment. Journal of Business Ethics, 16(11), 15–32.

Street, M., & Street, V. L. (2006). The effects of escalating commitment on ethical decision-making. Journal of Business Ethics, 64(4), 343–356. https://doi.org/10.1007/s10551-005-5836-z.

Sweis, B. M., Abram, S. V., Schmidt, B. J., Seeland, K. D., MacDonald, A. W., Thomas, M. J., & Redish, A. D. (2018). Sensitivity to “sunk costs” in mice, rats, and humans. Science, 361(6398), 178–181. https://doi.org/10.1126/science.aar8644.

Szaszi, B., Palinkas, A., Palfi, B., Szollosi, A., & Aczel, B. (2018). A systematic scoping review of the choice architecture movement: Toward understanding when and why nudges work. Journal of Behavioral Decision Making, 31(3), 355–366. https://doi.org/10.1002/bdm.2035.

Tversky, A., & Kahneman, D. (1974). Judgment under uncertainty: Heuristics and biases. Science, 185(4157), 1124–1131. https://doi.org/10.1126/science.185.4157.1124.

UN General Assembly. (1984). Convention Against Torture and Other Cruel, Inhuman or Degrading Treatment or Punishment. United Nations Treaty Series, 1465.

United States Senate Select Committee on Intelligence. (2014). Committee study of the Central Intelligence Agency’s detention and interrogation program.

Waldmann, M. R., Nagel, J., & Wiegmann, A. (2012). Moral judgment. In The Oxford handbook of thinking and reasoning. Oxford University Press. https://doi.org/10.1093/oxfordhb/9780199734689.013.0019.
