Judgment and Decision Making, Vol. 15, No. 5, September 2020, pp. 660-684

Comparing fast thinking and slow thinking: The relative benefits of interventions, individual differences, and inferential rules

M. Asher Lawson*

Richard P. Larrick#

Jack B. Soll$

Research on judgment and decision making has suggested that the System 2

process of slow thinking can help people to improve their decision making

by reducing well-established statistical decision biases (including base

rate neglect, probability matching, and the conjunction fallacy). In a

large pre-registered study with 1,706 participants and 23,292 unique

observations, we compare the effects of individual differences and

behavioral interventions to test the relative benefits of slow thinking on

performance in canonical judgment and decision-making problems, compared

to a control condition, a fast thinking condition, an incentive condition,

and a condition that combines fast and slow thinking. We also draw on the

rule-based reasoning literature to examine the benefits of having access

to a simple form of the rule needed to solve a specific focal problem.

Overall, we find equivocal evidence of a small benefit from slow thinking,

evidence for a small benefit to accuracy incentives, and clear evidence of

a larger cost from fast thinking. The difference in

performance between fast-thinking and slow-thinking interventions is

comparable to a one-scale-point difference on the 4-point Cognitive

Reflection Test (CRT). Inferential rules contribute unique explanatory

power and interact with individual differences to support the idea that

System 2 benefits from a combination of slower processes and knowledge

appropriate to the problem.

Keywords: debiasing, dual-system theories, reflection, rule-based reasoning, CRT

1 Introduction

With the popularity of Daniel Kahneman’s 2011 best-selling book,

Thinking, Fast and Slow, the prescription to slow down our

thinking has become increasingly common in popular translations of

judgment and decision making (JDM) research. For example, a 2016 Harvard

Business Review article argues that “the essential lesson for

competitive-strategy decision-makers is not so fast… take your time and

don’t be so sure” (Chussil, 2016). Similarly, a 2017 Harvard Business

Review article states that “reflective thinking improves decision making

by grounding it in a more integrated and coherent world view than one can

have by acting only in the moment” (Reeves, Torres & Hassan, 2017).

But is the exhortation to slow down in order to think smarter sound advice?

There are at least two reasons to be cautious about this claim and to call

for more study. First, thinking more slowly can be helpful only if

it increases the chances of thinking more soundly about a problem. If one

does not have access to the necessary rule for solving the problem or

recognize that the rule applies in the given situation, then thinking

slower is unlikely to help (Kahneman, 2000). Second, much of the evidence

that favors slow thinking has alternative interpretations that may not

support a general prescription.

For example, one argument for slow thinking is that people who are

dispositionally inclined to be more reflective are better at avoiding

common decision biases (Frederick, 2005; Oechssler, Roider & Schmitz,

2009; Cokely & Kelley, 2009; Obrecht, Chapman & Gelman, 2009; Koehler

& James, 2010). However, it would be a logical leap to conclude from an

individual difference variable that less reflective people can debias

themselves by slowing down, or even that more reflective individuals reach

correct answers because they are reflective. It could be, for example,

that a common factor such as education or temperament causes people to

become both reflective and knowledgeable about the applicable rules.

Alternatively, a growing body of evidence suggests that people may reach

correct answers by having good intuitions, rather than by using

deliberation to correct bad intuitions (Thompson, Pennycook, Trippas &

Evans, 2018; Raoelison, Thompson & De Neys, 2020). Experiments are

needed to tease apart the possible causal mechanisms. Although some work

has been done, if the aim is to be able to advise laypeople on whether and

how to change their thinking style, we will argue that the requisite

experiments have not previously been conducted and published. We aim to

contribute research that can help provide an answer.

We investigate our questions using canonical JDM problems built on clear

statistical rules that are integral to answering the problem successfully.

We include a broad range of statistical reasoning problems that cue

incorrect responses. Importantly, these problems are not representative of

many real-world situations, although some behavioral tendencies such as

judging probability by representativeness (Kahneman & Tversky, 1973) are

sufficiently general that they apply across contexts. As an example of

what we mean by a statistical rule, consider the famous Linda problem

(adapted from Tversky & Kahneman, 1983).

Linda is 31 years old, single, outspoken and very bright. She majored in

philosophy. As a student, she was deeply concerned with issues of

discrimination and social justice, and also participated in anti-nuclear

demonstrations.

Rank these from most (=1) to least (=3) probable.

(1) Linda is a bank teller.

(2) Linda is active in the feminist movement.

(3) Linda is a bank teller and is active in the feminist movement.

Most people rank option 3 as more probable than option 1 despite the

normative rule that the conjunction of two events can be no more probable

than either of its constituent parts. Failure to recognize that option 1 is

more probable is known as the conjunction fallacy. Why do people succumb to

it? The standard explanation is that they apply the representativeness

heuristic, which in this example entails substituting their perception of

the similarity between Linda’s description and the stereotype of a bank

teller for a probability, which would require more effort to work out

(Kahneman, 2011). Among those who commit the fallacy, however, some may

have access to the basic conjunction rule and others may not. For an

example of the latter, if you ask people “which is more probable, (1) a

staircase has five steps, (2) a staircase is carpeted, or (3) a staircase

has five steps and is carpeted?” some people may rate statement 3 as more

likely than 1 or 2 because they do not think in terms of conjunctions.
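The conjunction rule can be written as P(A and B) ≤ min(P(A), P(B)). As a minimal sketch, a ranking of the three options can be checked against this rule mechanically. The function name and the rank encoding below are illustrative assumptions, not part of the study materials.

```python
def violates_conjunction_rule(rank_a: int, rank_b: int, rank_a_and_b: int) -> bool:
    """Return True if a probability ranking commits the conjunction fallacy.

    Ranks follow the Linda problem's convention: 1 = most probable,
    3 = least probable. Because P(A and B) <= min(P(A), P(B)), the
    conjunction may never receive a smaller rank number (i.e., be judged
    more probable) than either of its constituents.
    """
    return rank_a_and_b < rank_a or rank_a_and_b < rank_b


# Typical fallacious ranking: feminist (1), teller-and-feminist (2), teller (3).
print(violates_conjunction_rule(rank_a=3, rank_b=1, rank_a_and_b=2))  # True

# Ranking consistent with the rule: the conjunction is ranked last.
print(violates_conjunction_rule(rank_a=2, rank_b=1, rank_a_and_b=3))  # False
```

The check says nothing about why a respondent produced a given ranking. It only flags rankings that no coherent probability assignment could support.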

Slow thinking might help someone on the Linda problem who can access the

conjunction rule, prompting them to check whether their similarity-based

judgment is valid. However, for someone who does not have access to the

rule, slow thinking might only lead them to more diligently and carefully

apply faulty heuristic reasoning, as they lack access to the rule necessary

to improve accuracy. Individuals’ likelihood of accessing and applying

rules will vary significantly across contexts and domains: Access to

inferential rules is a matter of degree (Flavell, 1971). The two-question

measure of each specific inferential rule we use in our experiment serves

to measure whether participants had some minimal access to the relevant

rule, without which it would be difficult to answer the harder JDM

questions.

2 Literature review and theoretical development

There is a long tradition in research on judgment and decision making of

examining the cognitive processes that lead individual judgments to

deviate systematically from a normative standard. For nearly as long, the

field has also considered how decision makers might be helped to make

better decisions. Different research programs have proposed different

approaches. In the 1980s, Nisbett and colleagues examined whether

knowledge of simple rules could help people make better decisions (Nisbett

& Ross, 1980; Kunda & Nisbett, 1986; Nisbett, Fong, Lehman & Cheng,

1987). From the 1990s to the present day, dual-process theories of decision

making have argued for the benefits of slower, more deliberate thinking

(System 2) as a means to make better decisions (Sloman, 1996; Stanovich &

West, 1999, 2000; Kahneman & Frederick, 2002; Evans &

Stanovich, 2013). Both rule-based and dual-process theories offer

“internal” interventions that propose that individual cognitive processes

can be improved – either by more ready access to rules of reasoning, or

use of a different style of thinking. This contrasts with a more recent

approach to nudge people toward better decisions through “external”

situational interventions (Thaler & Sunstein, 2008).

In this paper, we build on the two internal approaches to examine the

benefits of slowing down one’s thinking for individual decision making.

Rule-based approaches to improving decision making focus on training

people in abstract rules that apply across situations (Fong, Krantz &

Nisbett, 1986; Morewedge et al., 2015). In contrast, dual-process

approaches focus on differences in cognitive processes, distinguishing

rapid, automatic, and intuitive processes (System 1) from slower,

analytic, and deliberative processes (System 2) (Kahneman, 2011; Sloman,

1996; Stanovich & West, 1999, 2000; Chaiken & Trope, 1999). The two

systems are not fully distinct categories — Evans and Stanovich (2013)

have encouraged a movement away from viewing System 1 and System 2

processes in terms of defining lists of features to focusing instead on

typical correlates of each kind of process. For example, System 1 is

typically correlated with fast speeds, parallel processing, and

automaticity, whereas System 2 is typically correlated with slow speeds,

serial processing, and control. However, the only features that the

authors see as defining are that System 1 is autonomous and does not

require working memory, whereas System 2 requires working memory and

engages in mental simulation.

Originally, the dual-process framework proposed that System 2 processes

monitor System 1 outputs, detect errors, and correct them. More recent

perspectives on dual-process theory emphasize that effective reasoning is

not always corrective – processes that were traditionally characterized as

needing deliberative processing can be cued in System 1 to provide

“logical” intuitive responses (De Neys & Pennycook, 2019). Importantly,

recent approaches to dual-process theory do not emphasize that intuitive

(System 1) processes are inaccurate and deliberative (System 2) processes

accurate. A range of evidence and theorizing proposes that System 1 can

produce effective responses without correction and that System 2 can reach

poor responses despite reflection (Morewedge & Kahneman, 2010; Pennycook,

De Neys, Evans, Stanovich & Thompson, 2018). We find support for this

assertion in our data – participants’ fast responses are often accurate. We

study whether slow thinking is an effective means by which participants can

improve their reasoning.

The rule-based approach and dual-process approach emphasize different

aspects of reasoning but are not mutually exclusive. As discussed earlier,

slowing down may help in general, but may also be more helpful if one has

access to the relevant rule. In fact, Sloman’s (1996) original description

of System 2, which he called “rule-based,” assumed that its greater

accuracy comes in part from accessing and using appropriate analytic rules

for solving problems. The literature has introduced the terms ‘cognitive

capital’ (Camerer & Hogarth, 1999) and ‘mindware’ (Perkins, 1995;

Stanovich & West, 2008) to capture the rules, knowledge, and thinking

styles that decision makers bring to a problem. These are both

superordinate terms that include both knowledge (such as statistical

rules) and individual differences such as thinking dispositions. For

example, Stanovich and West (2008, p. 688) characterize mindware as the

context-specific “tools to think scientifically and the

propensity to do so; the tendency to think logically; and

knowledge of some special rules of formal reasoning and good

argumentation” [italics added]. In the present research, we aim to

disentangle the tools of reasoning, such as rules, from the propensity to

use them. We review the dual-process and rule-based approaches to

improving decisions, each of which emphasizes a different element of

mindware (or cognitive capital). As we proceed, we integrate the

approaches to develop a set of questions related to decision speed and

rule accessibility. Later, we present the results of a large, multistage

online experiment designed to determine the degree to which telling people

to slow down is good advice.

2.1 System 2: Reflection, correction, and decision speed

Research using the dual-process framework has focused primarily on the

reflection property of System 2. This includes both research into the

benefits of slow thinking, and research asking whether slow thinking is

needed at all. Recent research into problem solving in JDM proposes that

deliberation plays a key role, but may be more important in choosing

between competing intuitions, rather than calculating normative responses

to substitute for an incorrect intuition (De Neys & Pennycook, 2019). In

the present research, we emphasize another aspect of System 2. In its

original description (Kahneman, 2000; Sloman, 1996; Stanovich & West,

1999), System 2 depends on rules to structure the reasoning process. We

start our review of relevant literatures by focusing on the larger, more

recent literature on reflection before turning to the older

literature on rule possession in the next section. Our empirical

tests will consider both of these properties of System 2. Not all judgment

and decision making problems rely on inferential rules, but in the present

research we focus on statistical reasoning problems with clear normative

standards that depend on a specific, corresponding rule.

Researchers have investigated the impact of fast (System 1) thinking and

slow (System 2) thinking using two main methods: individual differences in

thinking style and experimental manipulations of decision speed.

The first line of evidence uses the Cognitive Reflection Test (CRT) as an

individual difference measure. The CRT consists of three questions, each of

which has a tempting intuitive answer that happens to be incorrect. The CRT was

originally offered as a measure of an individual difference in the

tendency to override incorrect initial intuitive answers with deliberation

(Frederick, 2005). More recently, scholars have suggested alternative

ideas about what the CRT actually measures. Baron and his colleagues

suggest that the CRT measures the degree to which one has a reflective

cognitive style — a disposition that permeates all phases of problem solving

(Baron, Scott, Fincher & Metz, 2015). Attali and Bar-Hillel (2020)

contend that the CRT measures math ability; in their studies the CRT

questions load onto the same latent construct as math questions that do

not have tempting but incorrect answers (see also Erceg, Galić &

Ružojčić, 2020, for similar conclusions).

As of this writing, exactly what aspect of cognitive style the CRT actually

measures is still hotly debated. Regardless, it is a widely used measure

and is associated with better performance in a range of reasoning problems,

such as more patient temporal discounting (Frederick, 2005; Oechssler et

al., 2009) and greater reliance on expected values (Frederick, 2005; Cokely

& Kelley, 2009), base rates (Oechssler et al., 2009), and the law of

large numbers (Obrecht et al., 2009). The CRT is also associated with

reduced susceptibility to framing effects (Frederick, 2005), the

conjunction fallacy (Oechssler et al., 2009), and probability matching

behavior (Koehler & James, 2010). However, the CRT has been found to be

unrelated to anchoring effects (Oechssler et al., 2009), frequency bias

(Obrecht et al., 2009), and the bias blind spot (West, Meserve

& Stanovich, 2012). These mixed results may be due to the source of

errors: whether errors are strategy-based, association-based, or

psychophysically-based (Arkes, 1991). For further discussion, see Toplak,

West and Stanovich’s (2011) comprehensive review of the relationship

between the CRT and performance in reasoning problems.

In the present research, we included the CRT with the expectation it would

have a positive and significant relationship to performance on our JDM

questions. Moreover, because of its common usage, the CRT provides a

useful benchmark that would allow us to compare the relative effect sizes

of movements between levels of CRT score with the effect sizes of

behavioral interventions to encourage slow thinking. The results can shed

light on whether a debiasing strategy of instructing reasoners to slow

down is able to achieve a benefit similar to those observed for

dispositional differences in reflection as measured by CRT. We also tested

whether CRT and other individual difference measures interact with a less

studied component of System 2 to enhance performance: rule accessibility.

We will discuss this more fully in the next section.

In addition to using the CRT as an individual difference measure of

thinking style, researchers have investigated the effect of thinking speed

using two kinds of between-subjects experimental manipulations. The first

paradigm compares conditions in which respondents think quickly and

intuitively to conditions in which they think slowly and analytically. The

second paradigm compares conditions in which respondents think quickly and

intuitively with a control condition. Between-subjects manipulations of

decision speed have been implemented across a wide range of problems,

including probability matching (Roberts & Newton, 2001), conjunction

fallacy (Villejoubert, 2009), belief bias (Evans & Curtis-Holmes, 2005;

Tsujii & Watanabe, 2010), conditional inference (Evans, Handley & Bacon,

2009), and syllogistic reasoning (Stupple, Ball & Ellis, 2013). They have

also been implemented with measures from a variety of applied areas,

including marketing (Cryder et al., 2017), moral decision making (Phillips

& Cushman, 2017), and economics (Rand, Greene & Nowak, 2012; Rand et al.,

2014). Generally, researchers find significant effects comparing both fast

versus slow conditions and fast versus control conditions, such that

speeding up participants hurts their performance relative to the comparison

group. Research on cognitive load has similarly found that limiting

capacity impairs performance on reasoning problems, analogous to the

effects of fast thinking (e.g., De Neys & Verschueren, 2006; De Neys,

Schaeken & d’Ydewalle, 2005; De Neys, 2006; De Neys & Schaeken, 2007).

Decision speed has also been manipulated as a within-subjects variable,

where subjects provide two sequential responses. In this paradigm,

participants respond first with a fast, intuitive answer and then

subsequently reflect on their initial response to provide a final answer

(e.g., Thompson, Prowse Turner & Pennycook, 2011; Pennycook, Cheyne, Barr,

Koehler & Fugelsang, 2014; Pennycook, Trippas, Handley & Thompson,

2013; Bago & De Neys, 2017; Koriat & Goldsmith, 1996; Shynkaruk &

Thompson, 2006). The two-response paradigm has been used to research

dual-system processes across reasoning problems, including base rates

(Pennycook & Thompson, 2012; Pennycook et al., 2013), causal reasoning,

denominator neglect, categorical syllogisms (Thompson & Johnson, 2014),

rule and belief-based reasoning (Newman, Gibb & Thompson, 2017),

syllogistic reasoning problems (Bago & De Neys, 2017), and the

bat-and-ball problem from the CRT (Bago & De Neys, 2019b).

In sum, manipulations of decision speed have focused on comparing a fast

condition with either a slow condition or a control. We do not know of any

studies that compare a slow condition to a control condition, though in a

conceptually similar line of work researchers have compared the

instructions to “think logically” with a control group and found a benefit

on performance in logical reasoning problems (Evans, Newstead, Allen &

Pollard, 1994; Daniel & Klaczynski, 2006; Vadeboncoeur & Markovits,

1999; Evans, Handley, Neilens & Over, 2010; De Neys, Schaeken & d’Ydewalle, 2005). To our

knowledge there are no papers including a fast thinking, slow thinking,

and control condition in the study of decision making problems.

Consequently, existing findings do not allow us to assess whether fast

thinking hurts performance, slow thinking benefits performance, or both.

Does slow thinking improve performance relative to a control condition in

which people think at their own, self-directed pace? We believe the answer

to this question is critical for prescriptions for improving decision

making. If interventions to encourage slow thinking are indeed helpful,

such interventions would represent a readily implementable recommendation

for improving decisions (as suggested in the recent articles mentioned in

the opening paragraph). If the disparity between fast thinking and slow

thinking is driven by the harm of being sped up, rather than the benefit

of being slowed down, encouraging slow thinking is of less value. We

believe that to make any argument about the benefits of slow thinking, we

must first seek to assess it independently of fast thinking. In a similar

argument, Payne, Samper, Bettman and Luce (2008) showed that earlier

demonstrations of the superiority of unconscious decision making were

artifacts of requiring decision makers in the conscious condition to

deviate from their normal process by taking an inordinate amount of time

to make their decision. The unconscious condition performed no better than

the control condition in which respondents used their ordinary approach to

decision making. We pose a similar question: Does slow thinking perform

better or the same as a control condition in which respondents use their

ordinary approach to decision making?

We investigated our research questions in a high-powered,

pre-registered experiment with pre-registered hypotheses. We frame these

as questions rather than hypotheses – questions grounded in the literature

and to which the answers are of general interest, regardless of their

outcome. (Our pre-registration can be found at

https://osf.io/34uxz.)

We tested participants on canonical JDM problems that highlight biased

statistical reasoning. For example, we included the Linda problem from the

introduction which shows that many people commit the conjunction fallacy.

We focus on these kinds of problems because they served as foundational

demonstrations in the development of the field of JDM (Kahneman, 2011). We

include six different JDM biases in our study, including the conjunction

fallacy and others that will be described later.

Our first two questions concern whether we will replicate past research

relating CRT score to performance in canonical JDM problems, and whether

we will replicate past research comparing the performance benefits of slow

thinking versus fast thinking.

Question 1:

Are people more accurate on JDM problems when they score more

highly on individual difference measures such as the CRT?

Question 2:

Are people more accurate on JDM problems in a slow thinking

condition than in a fast thinking condition?

To test whether it is fast thinking that hurts performance, slow thinking

that benefits it, or both, we test performance in each intervention group

against a control group in a between-subjects design.

Question 3a:

Are people less accurate on JDM problems in a fast thinking

condition compared to a control condition?

Question 3b:

Are people more accurate on JDM problems in a slow thinking

condition compared to a control condition?

Note that, based on the extensive past research we have reviewed, there is

very good reason to expect a positive answer to Question 3a. The literature

provides less guidance on Question 3b, as there has been very limited use

of a control condition in studies that attempt to manipulate the degree to

which people rely on fast thinking versus slow thinking.
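The logic behind Questions 3a and 3b rests on a simple accounting identity: the accuracy gap between a slow and a fast condition is the sum of a slow-thinking benefit and a fast-thinking cost, each measured against the control condition. A minimal sketch follows. The function name is an illustrative assumption, and the accuracy values are made up for illustration; they are not results from the experiment.

```python
def decompose_speed_gap(acc_fast: float, acc_control: float, acc_slow: float) -> dict:
    """Split the slow-minus-fast accuracy gap into its two components.

    Identity: slow - fast = (slow - control) + (control - fast)
    """
    return {
        "total_gap": acc_slow - acc_fast,
        "benefit_of_slow": acc_slow - acc_control,  # Question 3b
        "cost_of_fast": acc_control - acc_fast,     # Question 3a
    }


# Hypothetical proportions correct; NOT data from the study.
parts = decompose_speed_gap(acc_fast=0.40, acc_control=0.50, acc_slow=0.52)
for name, value in parts.items():
    print(f"{name}: {value:.2f}")
```

Without the control condition, only `total_gap` is observable, so a design with just fast and slow conditions cannot say which component is responsible for the difference.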

It is possible that the benefit of slow thinking is more likely to be

realized when an individual has access to the appropriate rule. In the

next section, we develop questions that specify the conditional nature of

System 2’s success.

2.2 System 2: Rule-based reasoning

In Kahneman’s (2011) description of System 2, one of its primary functions

is to monitor and correct the automatic output of System 1. The ability of

System 2 to monitor and correct flawed intuition depends on an additional

feature of System 2: timely access to the relevant rule for solving the

problem. Kahneman (2000) earlier proposed, “A task will be too difficult if

(1) System 1 favors an incorrect answer, and (2) System 2 is incapable of

applying the correct rule, either because the rule is unknown or because

the cues that would evoke it are absent” (p. 682). This role for

rule-based reasoning echoes Sloman’s (1996) original theorizing. Sloman

(1996) called System 2 “the rule-based system” and argued that System 2 not

only deliberates and verifies, but also accesses abstract knowledge derived

from culture, education, and experience. Sloman (1996) described rules as

symbolic structures that state relationships between variables; variables

can take on new values in new situations (see also Smith, Langston &

Nisbett, 1992). Rules thus have the important property that they are

general — the reasoner can potentially apply them across different types of

situations if they are accessed and recognized as relevant.

To illustrate some of the challenges that people face in applying rules,

consider earlier research by Nisbett and his colleagues on statistical

reasoning (Fong et al., 1986; Nisbett et al., 1987; Nisbett,

1993; Lehman, Lempert & Nisbett, 1988; Smith et al.,

1992). This program of research argued that successful rule use depends on

multiple factors, including access to the relevant rule, an ability to

recognize when to use it, and an ability to use it in new domains with new

variables (Nisbett, Krantz, Jepson & Kunda, 1983). For example, many people have good

intuitions about sample size and regression to the mean in games of chance

and in sports. They have access to the rule that larger samples are more

reliable than smaller samples when outcomes have a clear stochastic

element. However, in other domains it can be hard to recognize the

relevance of a rule or how to apply it. Job interviews are small samples

of noisy behavior that provide an imperfect glimpse into a person’s

underlying traits. People recognize neither that behavior is a sample

drawn from an underlying distribution nor that sample size is relevant to

the problem. Linking back to the quote from Kahneman that opened this

section, it is not enough to simply engage System 2, or even to have

access to the rule. The decision problem must arrive with cues that bring

the correct rule to mind, which in turn must be recognized as the correct

rule to use in the situation. Nevertheless, having some form of access to

the rule is necessary if not sufficient for solving the problem.

In other work, researchers have used neutral versions of problems to

measure whether a decision maker has the necessary mindware to solve a

problem (Stanovich & West, 2008). The aim of a neutral problem is to

strip out content that is likely to evoke a heuristic-based, intuitive

answer that is incorrect. In this way, the neutral problem provides a pure

measure of whether or not the participant knows the rule needed to

correctly solve the problem. For example, Frey, Johnson and De Neys (2018) asked two

neutral problems about base-rates and two about conjunctions that removed

misleading stereotype information. The total score (out of four)

correlated significantly with whether subjects could be classified as a

consistent detector of conflict across base-rate and conjunction study

problems. Similarly, Šrol and De Neys (2020) constructed a mindware

instantiation index by averaging performance across eight neutral

reasoning problems. The index was significantly correlated with numeracy

and cognitive ability as measured by the Vienna matrix test (Klose,

Černochová & Král, 2002), and was also the single best predictor of

accuracy on study questions that depended upon the same measured rules.

We aim to build on this existing research by disentangling two aspects of

mindware: access to specific rules and the individual differences that

contribute to successful application of this knowledge. In our study, we

measured the accessibility of six different rules that each uniquely

corresponds to one of our six JDM problems. Each rule was measured with a

problem structure that was relatively transparent so that if subjects had

access to the rule, they would be likely to answer the rule question

correctly. These transparent questions are similar to the neutral problems

used in Frey et al. (2018) and Šrol and De Neys (2020); our

main point of departure was to test the degree to which access to specific

rules helped with directly corresponding JDM questions that were

more difficult and less transparent. We ask whether access to the relevant

inferential rule will positively predict problem performance on its

related JDM question.

Question 4:

Are people who have access to a specific inferential rule more

accurate on directly related JDM problems compared to people who do not

have access to the rule?

We are interested in the relationship between access to inferential rules

and performance in reasoning tasks, as this has implications for debiasing

interventions. As we measure access to six different rules, our design

allows us to distinguish between the effects of general rule access and

problem-specific rule access. This affords us insight into the process by

which respondents access and apply knowledge to focal problems. If rule

accessibility is tied to a general store of knowledge and not to specific

rules, then the predictive power of having access to the appropriate rule

for a JDM problem should on average be no greater than the predictive

power of having access to other rules that are necessary for other types

of JDM problems. For example, if specificity is irrelevant, then access to

the probability matching rule should be as predictive of success

in the Linda problem as is access to the conjunction rule. We will show

that, although general rule accessibility is predictive, access to the

specific rule is also important. We suggest that this is strong evidence

for a rule-based account in which successful reasoners retrieve and apply

abstract, necessary rules that they already know to some degree (when such

rules exist).
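The specificity comparison can be illustrated with a deliberately simplified sketch: for one focal problem, compare the accuracy gap associated with the matching rule against the gap associated with a non-matching rule. The records below are toy values invented for illustration, the field names are hypothetical, and raw accuracy gaps stand in for whatever statistical model is actually used.

```python
from statistics import mean


def accuracy_gap(records: list, rule: str) -> float:
    """Accuracy on the focal problem for those with `rule` minus those without."""
    with_rule = [r["correct"] for r in records if r["rules"][rule]]
    without_rule = [r["correct"] for r in records if not r["rules"][rule]]
    return mean(with_rule) - mean(without_rule)


# Toy participants answering the Linda problem; NOT data from the study.
# "correct" is 1 if the focal problem was answered correctly, else 0.
toy_records = [
    {"correct": 1, "rules": {"conjunction": True, "probability_matching": True}},
    {"correct": 1, "rules": {"conjunction": True, "probability_matching": False}},
    {"correct": 1, "rules": {"conjunction": True, "probability_matching": True}},
    {"correct": 0, "rules": {"conjunction": True, "probability_matching": False}},
    {"correct": 1, "rules": {"conjunction": False, "probability_matching": False}},
    {"correct": 0, "rules": {"conjunction": False, "probability_matching": True}},
    {"correct": 0, "rules": {"conjunction": False, "probability_matching": True}},
    {"correct": 0, "rules": {"conjunction": False, "probability_matching": False}},
]

matched_gap = accuracy_gap(toy_records, "conjunction")
unmatched_gap = accuracy_gap(toy_records, "probability_matching")
print(f"matched rule gap:   {matched_gap:.2f}")
print(f"unmatched rule gap: {unmatched_gap:.2f}")
```

If specificity were irrelevant, the two gaps would be expected to be about equal on average. A reliably larger gap for the matched rule is the pattern a rule-specific account predicts.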

2.3 Rules and decision speed

The reason that instructing people to slow down might help performance in

JDM problems is that it causes them to correct or replace an erroneous but

intuitively tempting answer. This is more likely to happen if they have

sufficient access to the necessary rule for solving the problem. We

examine the combined benefit of rule knowledge and reflection by first

examining reflection as an individual disposition (as measured by CRT).

Question 5:

Does having access to a specific rule increase the benefits to

accuracy associated with having a more reflective disposition (as measured

by the CRT)?

Similarly, we ask whether our intervention to engage in slow thinking

may be of greater benefit among those with access to the inferential rule.

Question 6a:

Does having access to a specific rule increase the accuracy

benefits of a slow thinking condition relative to a control condition?

Conversely, even if one has access to the rule, one may not be able to

apply it if required to make a decision very quickly. For individuals who

have access to the rule, fast decision making may tend to prevent

application of the rule and cause them to fall back on incorrect

intuitions. An important boundary condition to this perspective is that

reasoners may have rules sufficiently encoded that they produce accurate

intuitive responses (Frey et al., 2018; Šrol & De Neys, 2020).

Broadly, people can be divided into at least three categories of knowledge:

Experts who can immediately generate good responses (Larrick & Feiler,

2015), people who do not have access to the relevant rule, and individuals

who require more deliberative processes to access and apply a rule. For

the first and second categories, performance should not be affected by

behavioral manipulations of decision speed. Experts should be able to

generate correct answers quickly. For individuals who lack access to the

rule, their decision will be poor regardless of decision speed. For the

third category, faster thinking is likely to be harmful if it disrupts

their ability to access and apply an inferential rule. We ask whether the

effect of a fast thinking manipulation varies with participants’ access to

inferential rules by testing the interaction described in Question 6b.

Question 6b:

Does having access to a specific rule increase the cost to

accuracy of a fast thinking condition relative to a control condition?

2.4 Incentives

If slower thinking improves accuracy on JDM problems, then one might argue

that providing monetary incentives for correct answers should have the

same effect. In the absence of incentives, people may take cognitive

shortcuts because the effort needed to correctly solve the problem is not

worth expending. With incentives, decision makers should be willing to

expend more effort to solve the problem correctly.

Although incentives might spur people to try harder, a number of studies

have concluded that incentives do not appreciably reduce susceptibility to

biases (see Camerer & Hogarth, 1999, for a review). This is true even

of very large incentives. Recently, Enke et al. (2020) conducted a study

in Nairobi, Kenya, where they rewarded participants with the equivalent of

one month’s salary for answering questions correctly. They tested

susceptibility to several biases, including base rate neglect and

anchoring. Not surprisingly, participants took substantially more time

when incentives were very large — a reflection of greater effort.

Nevertheless, solution rates remained largely unchanged for five of the

six tasks. Although participants may have tried harder, their performance

likely did not improve because the incentives did not instantaneously

confer the correct mental representation or rule for solving the problem

(Payne, Bettman & Johnson, 1992; Epley & Gilovich, 2005).

There was one exception to this finding — with very large incentives,

performance on the CRT increased from around 35% in the control condition

to 48% in the incentive condition. The CRT stands in contrast to many

other cognitive tests in that most people can probably access the needed

skills to solve at least one of the problems (basic addition and

subtraction are needed to solve the bat-and-ball problem). Enke et al.’s

(2020) results suggest that incentives improve performance when people have

the necessary rules and skills to produce the correct answer but do not

improve performance when rules and skills are lacking.

For the purpose of comparison, we included an incentives condition in our

experiment. Notably, Enke et al.’s (2020) participants did not take more

time when presented with standard laboratory incentives than in a no-pay

control condition. We suspect, however, that relatively small incentives

may be more effective at inducing thoughtfulness with online participants

when the incentives are non-trivial relative to the base pay. The

inclusion of an incentives condition will allow us to compare slow

thinking to incentives in terms of time taken, performance, and

interaction with rule accessibility. If the two conditions have similar

effects, it would seem reasonable to infer that incentives cause people to

slow down and think harder, much the same as we instruct people to do in

the slow thinking condition.

Question 7:

Are people more accurate on JDM problems in an incentive

condition compared to a control condition?

3 Experiment

Our primary goal in this research is to evaluate whether telling people to

slow down their thinking is good advice for canonical statistical problems

in the JDM literature. This is a different question from asking whether

people are more accurate when they think more slowly. People might slow

down on their own, for instance, when it is appropriate to do so (Payne et

al., 2008). They might also slow down due to dispositional differences

(such as those captured by high CRT scores). We are interested

specifically in whether it helps to encourage slow thinking when

respondents are acting as they would naturally. Furthermore, our review of

the literature suggests that even if the advice to slow down helps in the

aggregate, it may help people differentially depending on their individual

dispositions and the rule knowledge to which they have access. Thus, we

formulated questions relating to both main effects and interactions to ask

whether encouraging slow thinking may work better for some people than for

others.

Empirically, we will try to distinguish between knowledge and individual

differences in cognitive dispositions in our experiment. For clarity, we

will use different terms for each of these two aspects in our

operationalization: inferential rules and individual differences,

respectively. We measure accessibility to the specific, rule-based

knowledge needed to solve a focal JDM problem (e.g., the conjunction rule);

and we measure decision making disposition using individual differences

such as performance on the CRT. This will allow us to look at the effects

of our manipulations when including these variables in the model, and also

ask several additional questions. For example, we tested whether high CRT

individuals would answer more canonical JDM problems correctly, and whether

this advantage would be more pronounced when they have access to the

related statistical rule.

We designed a single, high-powered experiment that facilitates answering

our questions. Participants completed two separate stages at least 24

hours apart. This delay was intended to reduce fatigue and

to mitigate any contamination of our dependent measures by our inferential

rule measures. In the first stage, participants completed the 12

inferential rule questions (6 rules x 2 measures each) and the questions

from the CRT, CRT-2 and Berlin Numeracy Test (BNT), as described in more

detail below. In Stage 2, the participants responded to the 12 JDM

questions that served as our DVs (6 JDM problems x 2 measures each). We

measured access to each inferential rule and performance on each JDM problem twice to

reduce measurement error.

3.1 Method

3.1.1 Materials

We needed three categories of problems in order to find the answers to our

questions: problems that test for JDM biases that appear in the literature,

problems that test for access to rules, and problems that test for

individual differences in cognitive disposition. We next describe briefly

each set of problems and how they were constructed. Full experimental

materials are available in an online repository at

https://osf.io/mnvej/.

Problems to test JDM biases

To construct our battery of JDM questions, we started with 31 judgment and

decision making problems and principles from four comprehensive review

papers (Stanovich & West, 2000; Stanovich & West, 2008; Parker &

Fischhoff, 2005; Bruine de Bruin, Parker & Fischhoff, 2007). We

selected problems that had a clear normative principle to apply. This led

to the exclusion of a large number of framing problems, which test the

internal consistency of preferences across different representations as

opposed to the application of a rule. (For example, gain-loss framing

effects indicate inconsistent risk preferences, but there is no normative

answer for a given risk taking frame.) Furthermore, we focused on problems

with statistical principles over logical reasoning problems, as the former

have been more central in traditional JDM research. We caution against

generalization to broader categories of problems (e.g., non-statistical

problems) or situations (e.g., choices based on subjective preferences).

Our search process led us to select problems that illustrate six classic

JDM biases: the conjunction fallacy (Tversky & Kahneman, 1983),

probability matching (Stanovich & West, 2008), default bias (Ritov &

Baron, 1990), base rate neglect (Kahneman & Tversky, 1973), denominator

neglect (Kirkpatrick & Epstein, 1992), and Cell A bias in covariation

problems (Wasserman, Dorner & Kao, 1990). Although we closely modeled

our problems on the ones from the above-cited sources, we modified them to

a greater or lesser extent, for three different reasons. First, we needed

problems that could be coded as clearly correct or incorrect. This created

a special issue for the base rate neglect problem, so we had to use a

revised version. Second, we needed to ensure that baseline performance in

the control condition was at least as accurate as random responding. If it

were not, any improvement in performance could potentially be explained by

an increased rate of random responding rather than more normative problem

performance. Third, our procedure called for two versions of each type of

problem to reduce measurement error. We therefore constructed a second

version of each problem keeping the same structure but changing the

content.

Problems to test access to inferential rules

A number of scholars have pointed out that to solve many JDM problems one

has to, at a minimum, have access to the relevant statistical rule

(Kahneman, 2000; Nisbett, 1993; Stanovich & West, 2008). What does it

mean to have access to a rule? One version of accessing a rule is that a

person can retrieve and apply an abstract representation of the formal

normative rule (e.g., P(A∩B) ≤ P(A) for conjunctions).

Another version is that a person has learned exemplars of the rule through

experience and generalizes from that experience when encountering new

problems. Nisbett (1993) and colleagues argued that rules can be learned

and stored at a level that is easily applied and generalized in everyday

contexts; people have a harder time learning and understanding abstract

rules stated as mathematical or logical relationships. For example, Cheng

and Holyoak (1985) showed that people can accurately test relationships

that involve permission and obligation (e.g., a customer can drink alcohol

only if she is at least 18 years of age) that they fail to evaluate

effectively when stated as an isomorphic logical principle (e.g., if p,

then q). Thus, we chose not to ask people about direct statements of

abstract rules. Instead, we measured whether a person could answer a

simple and transparent problem that requires access to the rule. Our key

goal in designing transparent problems was to minimize the presence of a

tempting but wrong intuitive answer that competes with application of the

rule.

Consider the Linda problem described in the introduction. This problem

requires access to the conjunction rule. However, it also has a tempting

incorrect answer because people tend to substitute assessments of

similarity for probability. The key to testing access to the conjunction

rule in this case is to remove the presence of a tempting incorrect

answer. Consider the following Sally problem.

Imagine Sally owns a car. Rank the following from most likely (=1) to least likely (=3):

(1) The car has Bluetooth speakers.

(2) The car is painted green.

(3) The car has Bluetooth speakers and is painted green.

Compared to Linda, there is less material provided with which to form an

impression of Sally and the types of activities she might engage in. The

Sally problem is transparent, so we count a person as having some access

to the rule if he or she judges the third option as the least likely.
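
The scoring criterion just described can be sketched in a few lines of code. This is a minimal illustration under stated assumptions, not the authors' scoring script: the function name `has_rule_access`, the option labels, and the dictionary layout are all hypothetical. A response counts as showing some access to the conjunction rule when the conjunction is ranked as less likely than every other option.

```python
def has_rule_access(ranks, conjunction_key):
    """Return True if the conjunction is ranked strictly least likely.

    `ranks` maps each option label to its rank (1 = most likely).
    Labels and layout are hypothetical, chosen for illustration only.
    """
    conjunction_rank = ranks[conjunction_key]
    other_ranks = [r for key, r in ranks.items() if key != conjunction_key]
    return all(conjunction_rank > r for r in other_ranks)


# Hypothetical response to the Sally problem: conjunction ranked last.
sally = {"bluetooth": 1, "green": 2, "bluetooth_and_green": 3}
sally_access = has_rule_access(sally, "bluetooth_and_green")
```

With three options, ranking the conjunction last is the only ordering consistent with the conjunction being no more likely than either of its constituents.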

We included two transparent questions measuring rule accessibility for each

of our six JDM problem types to reduce measurement error of rule

accessibility. Of course, these do not provide a complete picture of a

participant’s ability to retrieve and apply a rule across contexts. Yet, if

a participant cannot answer questions accurately in these two transparent

contexts, we argue that other individual differences such as a disposition

to reflect are less helpful for correctly answering standard JDM questions

in which intuition provides a conflicting response.

Problems to test individual differences

We measured individual differences primarily so that we could include these

variables in our regression analysis when measuring the effects of fast and

slow thinking. We were also interested in exploring certain

interactions. For example, it is plausible that low CRT individuals would

benefit more from a slow-thinking intervention because high CRT individuals

already tend to engage in reflection. Including scales such as the CRT

provided the opportunity to test for this. We included three scales: the

three-question CRT (Frederick, 2005), the four-question CRT-2 (Thomson &

Oppenheimer, 2016), and the four-question BNT (Cokely, Galesic, Schulz,

Ghazal & Garcia-Retamero, 2012).

The CRT is intended to assess a respondent’s disposition to reflect on and

potentially correct automatic System 1 responses. However, to perform well

on the CRT, respondents must not only have the disposition to reflect, but

they must also possess the knowledge to detect a possible need for

correction and then implement that correction (Kahneman, 2000). As the

problems on the CRT involve calculations (albeit relatively simple ones),

the CRT likely has a numerical reasoning component. To directly assess a

tendency to reflect, we also included the CRT-2 (Thomson & Oppenheimer,

2016), which does not depend on performing calculations to reach the

correct answers. Finally, we included the Berlin Numeracy Test (Cokely et

al., 2012). Many JDM problems are highly numeric and depend on such

reasoning skills, so variation in numeracy would likely add noise to

whether or not participants correctly solve a given JDM problem. Our

primary motivation for including the BNT was so that we could include this

variable in our regression analysis when studying the relationship between

our behavioral interventions and the rate of successful problem solving.

3.1.2 Participants

We recruited participants with US IP addresses using Amazon’s Mechanical

Turk platform (mTurk). Prospective participants were informed that they

should participate only if they intended to complete both stages. In Stage

1 we collected 2,199 responses, excluding 203 participants for failing an

attention check and 1 participant for failing to consent to return to

Stage 2 of the study. For Stage 2 we invited 1,995 participants to return,

collecting 1,869 responses. In Stage 2 we removed 147 participants who

failed an attention check, and 7 who stated that they had not completed

Stage 1. Both of these checks occurred at the start of the survey, prior

to random assignment to a condition. Of the remaining 1,715 observations,

we could match 1,709 participants’ Stage 2 responses to their responses in

Stage 1. We excluded 3 participants for not answering a question from

Stage 1. The resulting sample of 1,706 participants formed the basis of

our study.
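
The exclusion steps reported above can be checked with simple arithmetic. The sketch below only restates the counts given in the text; the variable names are ours and are not taken from the authors' materials.

```python
# Stage 1: responses collected, minus attention-check failures and
# one participant who did not consent to return for Stage 2.
stage1_responses = 2199
stage1_invited = stage1_responses - 203 - 1

# Stage 2: responses collected, minus attention-check failures and
# participants who stated that they had not completed Stage 1.
stage2_responses = 1869
stage2_retained = stage2_responses - 147 - 7

# Matching Stage 2 responses to Stage 1 responses.
matched = 1709
unmatched = stage2_retained - matched

# Final exclusion: participants who left a Stage 1 question unanswered.
final_sample = matched - 3
```

Each intermediate value agrees with the figure reported in the text (1,995 invited, 1,715 retained, 1,706 in the final sample).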

The study was posted in four batches (i.e., separate HITs in mTurk) of 500

people each in October 2019. Each HIT had a similar attrition rate between

stages (12–16%). Stage 2 of each 500-person batch was made available 24

hours after the last member of the group completed Stage 1 and remained

accessible for one week. On average, participants waited about two days

before starting Stage 2 (M = 53.0 hours, SD = 24.1). The compensation for

Stage 1 of the study was $1.00. The compensation for Stage 2 was $1.50,

plus a bonus of $0.50 for returning to complete both stages, bringing

total compensation to $3.00. Our sample was 45.6% female.

3.1.3 Procedure

Procedure: Stage 1.

Participants were informed that this study was Stage 1 of

a two-stage study, and that they would be invited back to complete the

second stage for additional income 24 hours after the initial HIT was

completed. Participants were asked to identify their willingness and

ability to return for Stage 2 at both the start and the end of the Stage 1

study.

The inferential rule questions were divided into two blocks of six, with

each block containing one question for each rule. Participants began the

study by completing one block of inferential rule questions. Which block

they saw first was counterbalanced, as was the question order they saw

within a block. Each participant saw questions in one of six possible rule

orders that followed a Latin square design. These rule orders were the same

in each block. For example, if the question associated with a given rule

was presented in the kth position in the first block,

the other question associated with the same rule was presented in the

kth position in the second block.
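
One way to picture this counterbalancing scheme is with a cyclic Latin square. The text does not say which Latin square was used, so the cyclic construction below is an assumption, as are the function name and the short rule labels; it is a sketch of the general idea rather than the authors' procedure.

```python
# Short labels for the six rule types (labels are ours, for illustration).
RULES = ["conjunction", "probability matching", "default",
         "base rate", "denominator", "covariation"]


def cyclic_latin_square(items):
    """Row i is the item list rotated left by i positions."""
    n = len(items)
    return [[items[(i + j) % n] for j in range(n)] for i in range(n)]


orders = cyclic_latin_square(RULES)

# Latin square property: each rule appears exactly once in every
# row (rule order) and exactly once in every column (position).
for position in range(len(RULES)):
    column = [order[position] for order in orders]
    assert sorted(column) == sorted(RULES)
```

A participant assigned to row `r` would see `orders[r]` in both blocks, so a rule in the kth position of the first block is also in the kth position of the second block.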

After completing the first block, participants answered the 11 questions

from the CRT, CRT-2, and BNT in a randomly presented order, determined by

Qualtrics for each participant. Following this, they answered the six

questions from the other inferential rule block. There were no transitions

between these different parts of the study, so from the participants’ point

of view they simply answered a sequence of 23 questions, each presented on

a separate screen. Some questions contained multiple responses (e.g., the

probability matching rule question). When a question contained multiple

responses, all of these responses were placed on the same screen. After

completing all the questions, participants reported their sex, age, and

level of education. They also reported their mTurk identification number so

that we could contact them for Stage 2.

Procedure: Stage 2.

Using TurkPrime’s inclusion function (Litman, Robinson & Abberbock,

2016), we invited all the participants who completed Stage 1 back for

Stage 2 of the study. The JDM problems were divided into two blocks of

six, with each block containing one of each of the six types of problems

(the conjunction fallacy, probability matching, default bias, base rate

neglect, denominator neglect, and Cell A bias in covariation problems), in

a similar Latin square setup to Stage 1. As in Stage 1, each question

appeared on its own screen. There were no filler questions between the two

blocks.

Participants were randomly assigned to a problem type order condition that

determined the order of the questions in each block (following a Latin

square design), a block order condition, and a study condition.1 The study

conditions were Control, Slow, Fast, Fast-slow

(within-subjects), and Incentive. After being assigned to a study

condition, participants were presented with instructions for the

study. These included the behavioral manipulation associated with their

study condition. We adapted the text for our conditions from previous work

studying the impact of fast and slow responding (Bago & De Neys, 2017).

Participants began by reading the instructions for their condition. These

instructions were as follows:

- Control Condition.

In this task we’ll present you with a set of problems. We will ask you to

respond to each problem with your best answer.

As each problem is presented, you can take all the time you want to

indicate your response. It is important that you give your best responses

to all the problems.

- Slow Condition.

In this task we’ll present you with a set of problems. We will ask you to

respond to each problem after actively reflecting on it.

As each problem is presented, you can take all the time you want to

actively reflect on it. Once you have made up your mind, you will then

enter your final response. You will have as much time as you need to

indicate your answer. As you read each problem, think about the possible

answers to the problem and select the one that you feel is most likely to

be correct. It is really crucial that you give your response after

reflecting on each problem deeply.

- Fast Condition.

In this task we’ll present you with a set of problems. We will ask you to

respond to each problem with your initial, intuitive answer.

As each problem is presented, you should answer with your initial

response—the very first answer that comes to mind. You don’t need to think

about it. Just give the first answer that intuitively comes to mind as

quickly as possible. It is really crucial that you give your first,

initial response as fast as possible.

- Fast-slow Condition.

In this task we’ll present you with a set of problems. We will ask you to

respond to each problem twice. First, respond with your initial, intuitive

answer. After submitting this answer, please respond to each problem again

after actively reflecting on it.

As each problem is presented, you should answer with your initial

response—the very first answer that comes to mind. You don’t need to think

about it. Just give the first answer that intuitively comes to mind as

quickly as possible. It is really crucial that you give your first,

initial response as fast as possible. After this, you can take all the

time you want to actively reflect on it. Once you have made up your mind,

you will then enter your final response. You will have as much time as you

need to indicate your answer. As you read each problem, think about the

possible answers to the problem and select the one that you feel is most

likely to be correct. It is really crucial that you give your final

response after reflecting on each problem deeply.

- Incentive Condition.

In this task we’ll present you with a set of problems. You will be paid a

bonus of $0.50 for a correct answer in a randomly selected question.

As each problem is presented, you should answer with the response that

gives you the best chance of earning the bonus payment. Once you have made

up your mind, you will enter your final response. You can take all the

time you want to indicate your response. It is really crucial to answer

problems correctly to increase your chances of winning the bonus payment.

| Table 1: The two inferential rule questions (Stage 1) and two JDM questions

(Stage 2) for the conjunction fallacy problem. |

| Measure | Inferential rule question | JDM question |

| 1 | Imagine Sally owns a car.

Rank the following from most likely (=1) to least likely (=3):

- The car has Bluetooth speakers

- The car is painted green

- The car has Bluetooth speakers and is painted green

| Linda is 31 years old, single, outspoken and very bright. She majored in philosophy. As a student, she was deeply concerned with issues of discrimination and social justice, and also participated in anti-nuclear demonstrations.

Please rank the following five statements by their probability of being

true. (1 = most probable, 5 = least probable)

- Linda is active in the feminist movement.

- Linda is a bank teller.

- Linda is a bank teller and is active in the feminist movement.

- Linda works in a bookstore and takes Yoga classes.

- Linda is an insurance salesperson

|

| 2 | Imagine Bill likes to play darts.

Rank the following from most likely (=1) to least likely (=3):

- Bill misses the dartboard on his first throw.

- Bill is wearing a red sweater.

- Bill misses the dartboard on his first throw and is wearing a red sweater.

| Bill is 34 years old. He is intelligent, but unimaginative,

compulsive, and generally lifeless. In school, he was strong in mathematics

but weak in social studies and humanities.

Please rank the following five statements by their probability of being

true. (1 = most probable, 5 = least probable)

- Bill is an accountant.

- Bill plays jazz for a hobby.

- Bill is an accountant who plays jazz for a hobby.

- Bill surfs for a hobby.

- Bill is an architect.

|

Manipulations

To reinforce the manipulations, participants were asked to respond to an

open-ended question asking for their interpretation and understanding of

the manipulation. Before beginning to answer the problems, participants

were asked to state how willing they were to comply with the experimental

instructions, for example, ‘How willing are you to keep answering each

problem as fast as possible with the first answer that comes to mind, then

answering again after reflecting on the problem deeply?’ Participants

indicated on a sliding scale from 0 to 100 how willing they were to

continue to comply with these instructions. After completing their first

block of six JDM questions, participants were then asked to recommit to

the experimental instructions. Participants then continued to answer the

second block of six JDM questions.

Full details of the study manipulations are included in the supplement. The

structure for the study was similar to that of Stage 1. Of the resulting

variables, Order denotes one of the six Stage 2 problem type

orders from the Latin square, Block Order denotes whether the

focal question was presented in the first or second block of JDM

questions, and Measure denotes which of the two measures the

observation corresponds to within a problem type (e.g., there were two

conjunction fallacy problems: the Linda question, or the Bill the

accountant question, Table 1). Our initial analyses focused on comparisons

among Control, Fast, Slow, and Incentive conditions (n =

1471). (Subsequent analyses include tests using the Fast and

Slow responses from the Fast-slow within-subjects

condition.)

3.2 Results

3.2.1 Analytical approach

We used dummy-coded condition variables and continuous individual

difference variables to predict performance on our JDM problems at a

question level. Each participant provided 12 responses to JDM problem

questions. Responses to the three questions of the CRT (Frederick, 2005)

were coded into a score between 0 and 3. Responses to the four questions

of the CRT-2 (Thomson & Oppenheimer, 2016) were coded into a score

between 0 and 4. Responses to the four questions of the Berlin Numeracy

Test (Cokely et al., 2012) were coded into a score between 0 and 4. We

constructed a variable RuleSpecific that took the

value of 0, 1 or 2, depending on how many of the inferential rule

questions were answered correctly for a specific problem type. Note that

Stage 1 measures of the specific inferential rules (two questions such as

the Sally and Bill-playing-darts problems presented above) were measured

independently of and at least 24 hours before Stage 2 measures of a JDM

problem (two questions such as the Linda problem presented above). A

participant’s score on the two measures that make up

RuleSpecific was used to predict their

performance on the two JDM problems that make up the DV corresponding to

that inferential rule. Inferential rules, CRT, CRT-2, and BNT were

mean-centered across the full sample (including the Fast-slow

observations) for all of our analyses. Our dependent variable was whether

or not a question was answered correctly.
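
The construction of RuleSpecific and the mean-centering step can be illustrated as follows. This is a minimal sketch with made-up responses; the function names and the data are hypothetical, and the sketch does not reproduce how the authors organized their data.

```python
from statistics import mean


def rule_specific(answers):
    """Count correct answers (0, 1, or 2) on the two rule questions
    for one problem type."""
    return sum(1 for correct in answers if correct)


def mean_center(values):
    """Subtract the sample mean so that 0 corresponds to the average score."""
    m = mean(values)
    return [v - m for v in values]


# Hypothetical data: (first measure correct?, second measure correct?)
responses = [(True, True), (True, False), (False, False), (True, True)]
raw_scores = [rule_specific(r) for r in responses]
centered_scores = mean_center(raw_scores)
```

After centering, a coefficient on a condition dummy describes the condition effect for a respondent with an average score on the centered variable.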

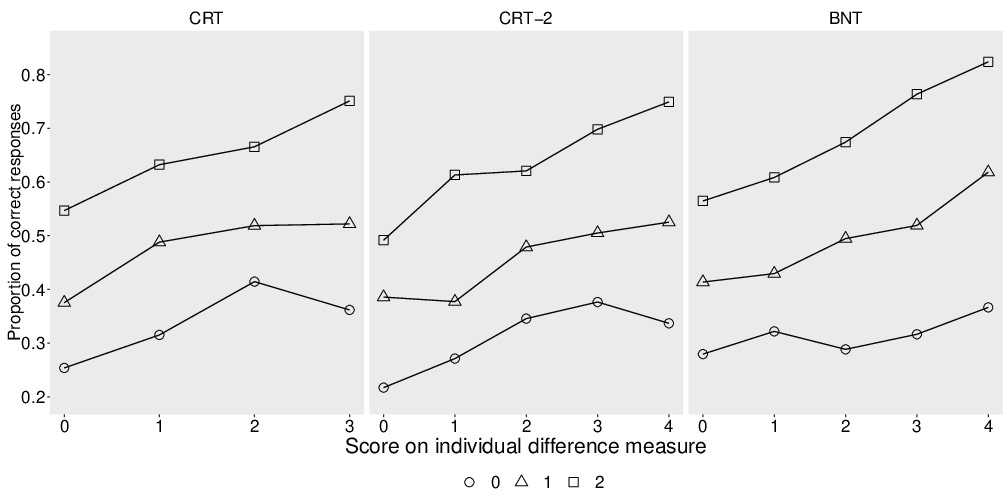

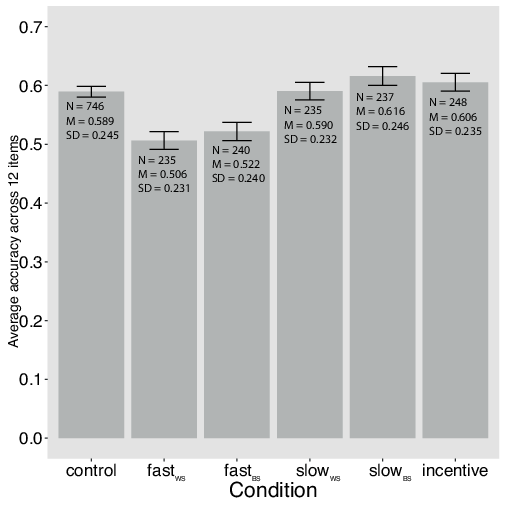

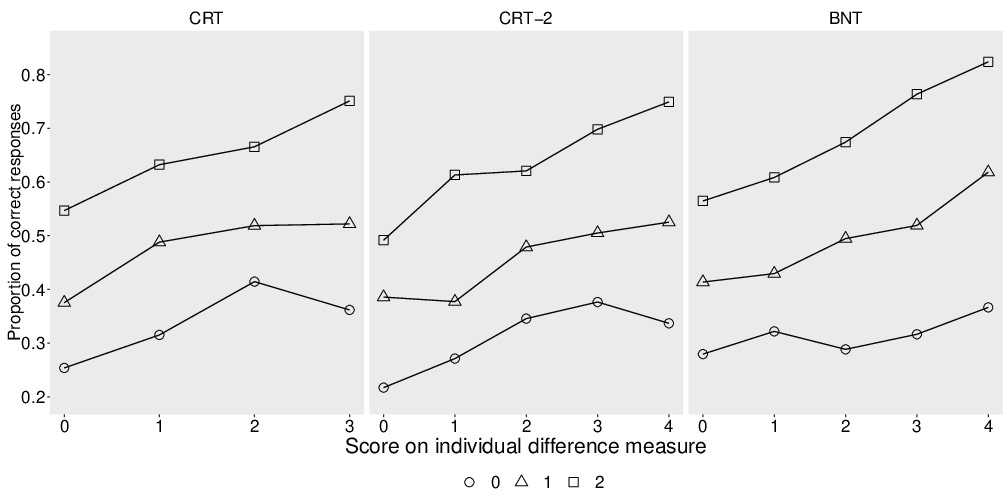

| Panel A | | Panel B |

| |  |

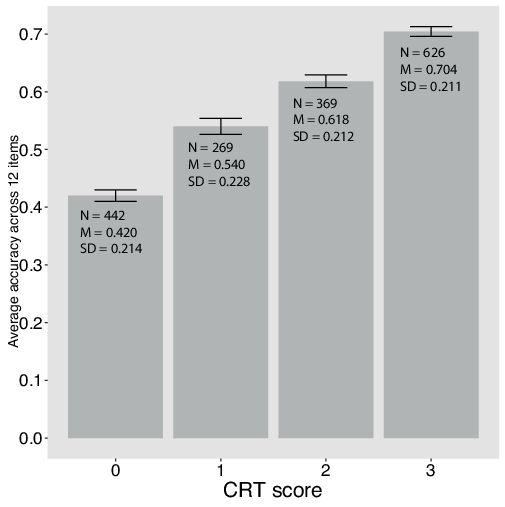

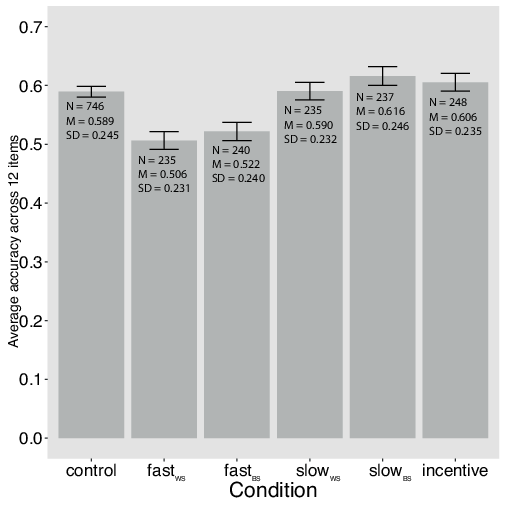

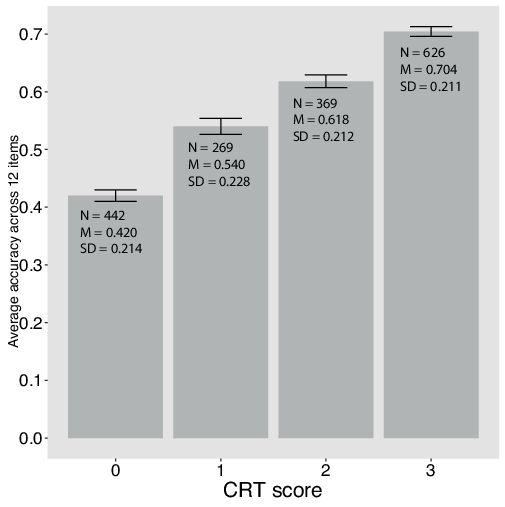

Figure 1: The average accuracy across 12 problems by CRT score (Panel A)

and study condition (Panel B).

Note. Error bars indicate plus or minus 1 standard error. The cell

size and the mean and standard deviation of the percentage of JDM questions

answered correctly are indicated on the bars. fastWS refers

to the within-subjects fast response, fastBS to the

between-subjects fast condition, slowWS to the

within-subjects slow response, and slowBS to the

between-subjects slow condition.

For our analyses, we used Generalized Estimating Equations (GEE) to

estimate logistic regression models. A GEE is used to estimate the

parameters of a generalized linear model where outcomes may be correlated

(e.g., responses nested within a subject). We clustered these data by

participant. To conduct this analysis, we used the ‘geepack’ package

(Hojsgaard, Halekoh & Yan, 2016) for R. We specified a binomial

distribution family with a logit link, as our outcome data are binary, and

specified an ‘exchangeable’ correlation between responses, following

guidelines to minimize the Quasi Information Criterion (Hardin

& Hilbe, 2003; Pan, 2001; Hin & Wang, 2009).
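
Written out, the model family described in this paragraph has the following general form. This is a schematic reading of the text rather than the authors' exact specification. All symbols are notation introduced here for illustration: i indexes participants, j and k index a participant's responses, Y is the binary correctness outcome, z collects whatever additional covariates a given model includes, and ρ is the single working correlation implied by the exchangeable structure.

```latex
\operatorname{logit}\,\Pr(Y_{ij} = 1)
  = \beta_0
  + \beta_1\,\mathrm{Slow}_i
  + \beta_2\,\mathrm{Fast}_i
  + \beta_3\,\mathrm{Incentive}_i
  + \mathbf{z}_{ij}^{\top}\boldsymbol{\gamma},
\qquad
\operatorname{Corr}(Y_{ij}, Y_{ik}) = \rho \quad (j \neq k)
```

Under the exchangeable assumption, any two responses from the same participant share the same working correlation, regardless of which problems they come from.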

Our data contained two measures of each participant’s response to each of

the six JDM problems. We included a Problem effect (for each of

the 6 JDM problems) and a Measure effect for whether the problem

answered was the first measure or the second (e.g., Linda the bank teller

or Bill the accountant, right column, Table 1). We decomposed the

Order variable into five dummy-coded Position effect

variables (e.g., a dummy variable for third position would take the value

of 1 if this question was presented in position 3 in its block, 0

otherwise), using first position as the omitted category. We also included

a Block Order variable, signifying whether the question was in

the block of JDM questions presented first or second. We do not report

these nuisance factors in the main manuscript, but the full model

estimates can be seen in the supplement.
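
The dummy coding of position can be sketched as follows. The function name and the list representation are assumptions made for illustration; only the coding scheme (five indicators, first position omitted) comes from the text.

```python
def position_dummies(position, n_positions=6):
    """Dummy-code a 1-based position within a block.

    Position 1 is the omitted reference category, so the result has
    n_positions - 1 entries, for positions 2 through n_positions.
    """
    if not 1 <= position <= n_positions:
        raise ValueError("position out of range")
    return [1 if position == p else 0 for p in range(2, n_positions + 1)]


third = position_dummies(3)   # indicator for position 3 is set
first = position_dummies(1)   # reference category: all indicators are 0
```

Because first position is the reference category, each Position coefficient is a contrast between that position and the first position in a block.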

| Table 2: Logistic regressions predicting success with intervention

conditions coded relative to control and with individual differences mean

centered. |

| Model | 1 | 2 | 3 | 4 | 5 |

| (Intercept) | -0.452*** | -0.470*** | -0.455*** | -0.448*** | -0.478*** |

| | (0.071) | (0.073) | (0.074) | (0.072) | (0.074) |

| Slow | 0.126 | 0.155† | 0.139† | 0.131 | 0.155* |

| | (0.085) | (0.080) | (0.083) | (0.083) | (0.079) |

| Fast | -0.314*** | -0.371*** | -0.329*** | -0.358*** | -0.377*** |

| | (0.080) | (0.077) | (0.077) | (0.079) | (0.076) |

| Incentive | 0.065 | 0.116 | 0.115 | 0.071 | 0.121 |

| | (0.081) | (0.079) | (0.077) | (0.077) | (0.075) |

| Education | 0.035 | -0.006 | 0.037 | -0.014 | -0.016 |

| | (0.026) | (0.024) | (0.025) | (0.025) | (0.024) |

| CRT | | 0.446*** | | | 0.256*** |

| | | (0.023) | | | (0.028) |

| CRT-2 | | | 0.418*** | | 0.201*** |

| | | | (0.024) | | (0.029) |

| BNT | | | | 0.410*** | 0.241*** |

| | | | | (0.027) | (0.028) |

| N | 17,652 | 17,652 | 17,652 | 17,652 | 17,652 |

| Note. *** p < 0.001, ** p < 0.01, * p < 0.05, † p < 0.10. |

| The coefficient estimates for Measure,

Problem, Position, and Block Order effects are

included in the SI (Table S1). |
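
Because the coefficients in Table 2 are on the log-odds scale, it can help to convert them to odds ratios. The sketch below does this for the Model 2 estimates copied from the table; the variable names are ours, and the conversion is a standard reading of logistic coefficients rather than an analysis reported by the authors.

```python
import math

# Model 2 coefficients from Table 2 (log-odds; conditions relative to control).
model2 = {"Slow": 0.155, "Fast": -0.371, "Incentive": 0.116, "CRT": 0.446}

# exp(coefficient) gives the multiplicative change in the odds of a
# correct answer, holding the other predictors in the model fixed.
odds_ratios = {name: math.exp(b) for name, b in model2.items()}

# Gap between the Slow and Fast conditions on the log-odds scale.
slow_fast_gap = model2["Slow"] - model2["Fast"]
```

In Model 2, the Fast coefficient corresponds to an odds ratio of about 0.69 relative to control. The Slow-versus-Fast gap of 0.526 log-odds is of a similar size to the 0.446 coefficient for a one-point difference in CRT score.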

3.2.2 Data analysis

Individual differences.

In Panel A of Figure 1, we plot the percentage of JDM questions answered

correctly (out of 12) by score on the CRT (0, 1, 2, 3). Average

performance was monotonically increasing in CRT, with a one-point increase

in the CRT associated with a 9.5 percentage point increase in accuracy.

All three of the marginal movements between CRT levels were statistically

significant (p < 0.001) in predicting greater average

accuracy across the 12 JDM questions. Across all conditions, participants

with a CRT score of 0 had an average accuracy of 42.0%, which rose to

70.4% for participants with a CRT score of 3. Given that the α of

the full 11 questions from our individual difference measures (CRT, CRT-2,

and BNT) was 0.77, we also plotted the average number of JDM questions

answered correctly by score out of the 11 pooled individual difference

questions (Figure S1 in the supplement).
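As a rough arithmetic check, the two endpoint accuracies quoted above imply a per-point gain close to the reported 9.5 percentage points. The sketch below uses only those two values; the function name is illustrative, and the reported figure may have been computed differently (for example, by averaging the three adjacent-level differences).

```python
def average_step(low_accuracy: float, high_accuracy: float, steps: int) -> float:
    """Average change in accuracy (percentage points) per one-point step."""
    return (high_accuracy - low_accuracy) / steps


# Endpoint accuracies reported in the text for CRT = 0 and CRT = 3.
crt0_accuracy = 42.0
crt3_accuracy = 70.4

# Three one-point steps separate a CRT score of 0 from a CRT score of 3.
per_point = average_step(crt0_accuracy, crt3_accuracy, steps=3)
print(f"{per_point:.1f} percentage points per CRT point")
```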

We found that CRT, CRT-2 and BNT all explained unique variance in

performance in our battery of JDM questions. Whether we introduced each

individual difference measure by itself or included all three measures, we

found that all of the individual difference measures were statistically

significant positive predictors of success on our JDM questions (p

< 0.001; Models 2–5; Table 2). Based on this evidence,

we find positive support for Question 1 – higher scores on individual

difference measures including the CRT were associated with higher accuracy

on JDM questions.

| Table 3: Descriptive Statistics of JDM Question Performance and Log Time

Taken in the Between-Subjects Sample (N = 1,471). |

| | | JDM questions correct | | Average log time | | Exp (avg. log time) | Follow % | RulesTotal | |

| Condition | Count | M | SD | M | 95% CI | M | M | M | SD |

| Control | 746 | 7.07 | 2.94 | 3.50 | [3.45,3.54] | 33.0 | 94.0 | 9.21 | 2.93 |

| Fast | 240 | 6.26 | 2.88 | 3.22 | [3.15,3.30] | 25.1 | 93.6 | 9.10 | 2.98 |

| Slow | 237 | 7.39 | 2.95 | 3.71 | [3.63,3.80] | 40.8 | 96.0 | 9.46 | 2.84 |

| Incentive | 248 | 7.27 | 2.82 | 3.56 | [3.48,3.64] | 35.2 | 95.9 | 9.00 | 2.90 |

| Note. JDM Questions Correct is the average number of JDM questions

answered correctly (out of 12). Average log time is the average of the log

time taken to provide a response to a JDM question within a condition. Exp

(avg. log time) is this average exponentiated. Follow % is the average

response participants provided to a self-report item regarding the extent to

which they followed our experimental instructions, on a percentage scale

from 0 to 100. RulesTotal is the average number of

inferential rule questions answered correctly (out of 12).

|
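The back-transformation described in the table note can be sketched as follows. This assumes natural logarithms, in which case exponentiating the mean log time gives the geometric mean of the raw times; the dictionary and function names are illustrative. Because the log means in Table 3 are rounded to two decimals, the results differ slightly from the tabled values.

```python
import math

# Mean log response times per condition, copied from Table 3.
mean_log_time = {"Control": 3.50, "Fast": 3.22, "Slow": 3.71, "Incentive": 3.56}


def back_transform(mean_log: float) -> float:
    """Exponentiate a mean of natural-log times (the geometric mean of the raw times)."""
    return math.exp(mean_log)


exp_time = {cond: back_transform(value) for cond, value in mean_log_time.items()}

for cond, value in exp_time.items():
    print(f"{cond}: {value:.1f}")
```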

Fast thinking, slow thinking, and control.

Manipulation check.

We performed two manipulation checks to test the efficacy of our

Fast versus Slow manipulations. First, in a separate

pilot, we tested whether our manipulations were effective in changing

performance in the bat-and-ball task (Frederick, 2005) in a

between-subjects design. This question was answered correctly by 29 of 83

(35%) participants in the Slow condition, compared to 23 of 106

(22%) participants in the Fast condition (Fisher’s exact

p = 0.050). The pilot also included a separate Fast-slow

within-subjects condition. Here, only 8 of 88 (9%) answered the question

correctly on their initial fast answer, whereas 28 (32%) answered it

correctly on their final slow answer (McNemar’s χ² = 38.2, p

< 0.001).2 These tests provide evidence that

our behavioral interventions can replicate previously observed effects in

improving performance on reasoning problems.
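For readers who want to retrace the between-subjects comparison, a two-sided Fisher's exact test on the pilot counts can be sketched in plain Python. The function below is an illustrative implementation of the standard "sum of tables no more probable than the observed one" definition, not the authors' code; statistical packages may use slightly different two-sided conventions.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    total_tables = comb(row1 + row2, col1)

    def prob(x: int) -> float:
        # Hypergeometric probability of x successes in row 1 given fixed margins.
        return comb(row1, x) * comb(row2, col1 - x) / total_tables

    p_observed = prob(a)
    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    # Sum every table that is no more probable than the observed one
    # (small relative tolerance guards against floating-point ties).
    return sum(
        prob(x)
        for x in range(lowest, highest + 1)
        if prob(x) <= p_observed * (1 + 1e-7)
    )


# Pilot counts from the text: Slow 29 correct of 83, Fast 23 correct of 106.
p_value = fisher_exact_two_sided(29, 83 - 29, 23, 106 - 23)
print(f"Fisher's exact p = {p_value:.3f}")
```

The within-subjects McNemar statistic cannot be recomputed the same way, because the text reports only the marginal counts and not the discordant pairs.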

| Table 4: Logistic regressions predicting success with conditions coded

relative to Fast and with individual differences mean centered |

| Model | 6 | 7 | 8 |

| (Intercept) | -0.842*** | -0.867*** | -0.868*** |

| | (0.091) | (0.091) | (0.091) |

| Control | 0.377*** | 0.350*** | 0.348*** |

| | (0.076) | (0.071) | (0.070) |

| Slow | 0.532*** | 0.439*** | 0.437*** |

| | (0.094) | (0.088) | (0.087) |

| Incentive | 0.498*** | 0.497*** | 0.500*** |

| | (0.091) | (0.083) | (0.083) |

| CRT | 0.256*** | 0.117*** | 0.117*** |

| | (0.028) | (0.027) | (0.027) |

| CRT-2 | 0.201*** | 0.013 | 0.012 |

| | (0.029) | (0.027) | (0.027) |

| BNT | 0.241*** | 0.177*** | 0.177*** |

| | (0.028) | (0.026) | (0.026) |

| Education | -0.016 | 0.034 | 0.035 |

| | (0.024) | (0.021) | (0.021) |

| Rulespecific | | 0.577*** | 0.452*** |

| | | (0.033) | (0.071) |

| Rulenon−specific | | 0.185*** | 0.185*** |

| | | (0.011) | (0.011) |

| Control X Rulespecific | | | 0.142† |

| | | | (0.082) |

| Slow X Rulespecific | | | 0.138 |

| | | | (0.101) |

| Incentive X Rulespecific | | | 0.188† |

| | | | (0.099) |

| N | 17,652 | 17,652 | 17,652 |

| Note. *** p < 0.001, ** p < 0.01, * p

< 0.05, † p < 0.10. |

| The coefficient estimates for Measure,

Problem, Position, and Block Order effects are

included in the SI (Table S2). |

Our second manipulation check examined the average time taken on the JDM

questions across conditions in Stage 2 (Table 3). The average log time

taken on the JDM questions was significantly longer in the Slow

condition relative to both the Fast condition (p

< 0.001) and the control condition (p <

0.001). The average times taken in the control and Incentive

conditions were similar and fell between those of the Fast and

Slow conditions. We conclude that our Fast and Slow

manipulations had the intended effect on time spent answering our

questions.

Tests of Slow versus Fast

Question 2 asked whether slow thinking would lead to more accurate

decisions than fast thinking. For this analysis, we tested dummy-coded

conditions against the Fast condition, which was the omitted

category. Model 6 of Table 4 shows that participants in the Slow

condition were significantly more accurate than those in the Fast

condition (b = 0.532, p < 0.001). Model 6

also shows that participants in the control condition were significantly

more accurate than participants in the Fast condition (b

= 0.377, p < 0.001). Participants in the Fast

condition had an average accuracy of 52.2%, relative to 58.9% in the

control condition and 61.6% in the Slow condition. In recent

meta-analytic work (Rand, 2019), researchers found that the difference

between intuitive and deliberative thinking conditions on the rate of

cooperation in economic games ranged from 1.6 to 3.1 percentage points. In

our context, we find a 9.4 percentage point difference between the

Fast and Slow thinking conditions, which is a larger

effect.
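Changing the omitted category only shifts the dummy-coded coefficients by a constant, which the following sketch illustrates. It assumes that Model 6 (Table 4) is the same specification as Model 5 (Table 2) with Fast rather than control as the reference; the identical CRT, CRT-2, and BNT coefficients in the two models are consistent with that reading. The function and variable names are illustrative. Exponentiating a logit coefficient gives an odds ratio, which is not the same quantity as the percentage point differences quoted above.

```python
import math

# Condition coefficients (log-odds) from Table 2, Model 5; control is the omitted category.
relative_to_control = {"Control": 0.0, "Slow": 0.155, "Fast": -0.377, "Incentive": 0.121}


def rereference(coefs: dict, new_reference: str) -> dict:
    """Re-express dummy-coded logit coefficients against a different omitted category."""
    shift = coefs[new_reference]
    return {
        name: round(b - shift, 3)
        for name, b in coefs.items()
        if name != new_reference
    }


relative_to_fast = rereference(relative_to_control, "Fast")

# Odds ratios relative to the Fast condition.
odds_ratios = {name: math.exp(b) for name, b in relative_to_fast.items()}

for name, b in relative_to_fast.items():
    print(f"{name} vs. Fast: b = {b:.3f}, odds ratio = {odds_ratios[name]:.2f}")
```

Under this assumption the shifted values reproduce the Control, Slow, and Incentive coefficients shown for Model 6 in Table 4.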

| Table 5: Logistic regressions predicting success with conditions coded

relative to control and with individual differences mean centered. |

| Model | 9 | 10 | 11 | 12 | 13 |

| (Intercept) | -0.518*** | -0.520*** | -0.538*** | -0.529*** | -0.541*** |

| | (0.076) | (0.076) | (0.076) | (0.076) | (0.076) |

| Slow | 0.089 | 0.089 | 0.082 | 0.072 | 0.077 |

| | (0.071) | (0.071) | (0.071) | (0.072) | (0.071) |

| Fast | -0.350*** | -0.348*** | -0.341*** | -0.326*** | -0.338*** |

| | (0.071) | (0.070) | (0.072) | (0.072) | (0.072) |

| Incentive | 0.148* | 0.152* | 0.158* | 0.147* | 0.144* |

| | (0.065) | (0.066) | (0.066) | (0.067) | (0.065) |

| CRT | 0.117*** | 0.117*** | 0.171*** | | |

| | (0.027) | (0.027) | (0.023) | | |

| CRT-2 | 0.013 | 0.012 | | 0.111*** | |

| | (0.027) | (0.027) | | (0.024) | |

| BNT | 0.177*** | 0.177*** | | | 0.207*** |

| | (0.026) | (0.026) | | | (0.025) |

| Education | 0.034 | 0.035 | 0.046* | 0.065** | 0.039† |

| | (0.021) | (0.021) | (0.021) | (0.022) | (0.021) |

| Rulespecific | 0.577*** | 0.594*** | 0.621*** | 0.622*** | 0.643*** |

| | (0.033) | (0.045) | (0.034) | (0.033) | (0.034) |

| Rulenon−specific | 0.185*** | 0.185*** | 0.199*** | 0.212*** | 0.207*** |

| | (0.011) | (0.011) | (0.011) | (0.011) | (0.010) |

| Slow X Rulespecific | | -0.004 | | | |

| | | (0.085) | | | |

| Fast X Rulespecific | | -0.142† | | | |

| | | (0.082) | | | |

| Incentive X Rulespecific | | 0.046 | | | |

| | | (0.083) | | | |

| CRT X Rulespecific | | | 0.087*** | | |

| | | | (0.026) | | |

| CRT-2 X Rulespecific | | | | 0.064** | |

| | | | | (0.024) | |

| BNT X Rulespecific | | | | | 0.157*** |

| | | | | | (0.030) |

| N | 17,652 | 17,652 | 17,652 | 17,652 | 17,652 |

| Note. *** p < 0.001, ** p < 0.01, * p

< 0.05, † p < 0.10 |

| The coefficient estimates for Measure,

Problem, Position, and Block Order effects are

included in the SI (Table S9). |

A good deal of previous research has used an individual difference, CRT, to

demonstrate the benefits of slow thinking relative to fast thinking. Figure 1,

Panel A, shows similar benefits in these data. Our current design allows us

to assess the degree to which experimental interventions have a similar

impact to those shown using CRT. To examine this, we plotted the average

percentage of JDM questions answered correctly out of 12 by study condition

in Figure 1, Panel B. The difference in average percent correct between our

between-subjects Fast and Slow conditions (9.4 percentage points) was

approximately equal to the average difference between adjacent groups

when averages are grouped by CRT score (9.5 percentage points). In other words, the average

difference in performance between asking respondents to engage in fast

thinking and slow thinking was approximately equivalent to the average

difference in performance between two respondents whose CRT scores differ

by one point.
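The condition-level percentages behind this comparison can be recovered from the mean number of correct answers in Table 3. The sketch below is illustrative (names are not from the paper) and takes the 9.5 percentage point CRT step as reported rather than recomputing it.

```python
N_QUESTIONS = 12  # JDM questions per participant

# Mean number of JDM questions answered correctly, from Table 3.
mean_correct = {"Control": 7.07, "Fast": 6.26, "Slow": 7.39, "Incentive": 7.27}


def percent_correct(mean_count: float, n_questions: int = N_QUESTIONS) -> float:
    """Convert a mean count of correct answers into a percentage."""
    return 100 * mean_count / n_questions


accuracy = {cond: percent_correct(m) for cond, m in mean_correct.items()}

# Gap between the Slow and Fast conditions, in percentage points.
slow_fast_gap = accuracy["Slow"] - accuracy["Fast"]

# Reported average gain per one-point increase in CRT (taken from the text).
reported_crt_step = 9.5

print(f"Slow minus Fast: {slow_fast_gap:.1f} percentage points")
print(f"Ratio to one CRT point: {slow_fast_gap / reported_crt_step:.2f}")
```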

We note that even when engaging in slow thinking, many participants failed

to answer problems correctly; even when engaging in fast thinking, many

participants reached the correct answers. In our data, many people reasoned

well intuitively, consistent with recent accounts of the “smart intuitor”

(Raoelison, Thompson & De Neys, 2020). For reference, across all five

conditions, the 23 participants who answered zero individual

difference questions correctly achieved an average accuracy of 29.7% across

the 12 JDM questions. Relative to this level of performance,

participants in the Fast condition were considerably

more successful (52.2% accuracy).

The strength of these effects varied to a modest degree across our six JDM

problems. The greatest difference between our Fast and

Slow conditions was observed for the denominator neglect

question; the smallest difference was seen for the default bias problem

(see Table S3 in the supplement for further details).

Tests of Fast and Slow versus the control

In order to identify the specific effects of fast thinking and slow

thinking, we ran analyses using dummy-coded intervention variables with

the control group coded as the omitted category. In Table 2 we present

estimates of the effects of our different behavioral interventions.

We found a robust, negative effect of our Fast manipulation on the

likelihood of responding correctly in our JDM questions (p

< 0.001; Models 1-5). This suggests that the answer to

Question 3a is yes – encouraging fast thinking does hurt performance