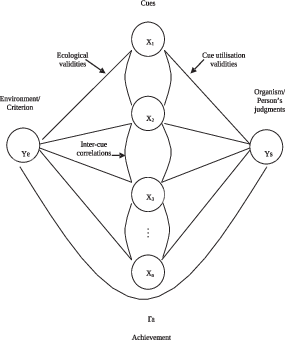

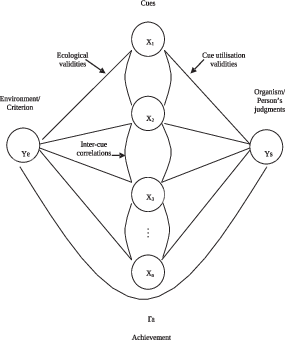

Figure 1: The Lens Model (adapted from Brunswik, 1952).

Judgment and Decision Making, Vol. 13, No. 1, January 2018, pp. 1-22

Kenneth R. Hammond’s contributions to the study of judgment and decision making

Mandeep K. Dhami*, Jeryl L. Mumpower#

Kenneth R. Hammond (1917–2015) made several major contributions to the science of human judgment and decision making. As a student of Egon Brunswik, he kept Brunswik’s legacy alive, advancing his theory of probabilistic functionalism and championing his method of representative design. Hammond pioneered the use of Brunswik’s lens model as a framework for studying how individuals use information from the task environment to make clinical judgments, which was the precursor to much ‘policy capturing’ and ‘judgment analysis’ research. Hammond introduced the lens model equation to the study of judgment processes and used it to measure the utility of different forms of feedback in multiple-cue probability learning. He extended the scope of analysis to contexts in which individuals interact with one another, introducing the interpersonal learning and interpersonal conflict paradigms. Hammond developed social judgment theory, which provided a comprehensive quantitative approach for describing and improving judgment processes. He proposed cognitive continuum theory, which states that quasi-rationality is an important middle ground between intuition and analysis and that cognitive performance is dictated by the match between task properties and mode of cognition. Throughout his career, Hammond moved easily from basic laboratory work to applied settings, where he resolved policy disputes; in doing so, he pointed to the dichotomy between theories of correspondence and coherence. In this paper, we present Hammond’s legacy to a new generation of judgment and decision making scholars.

Keywords: lens model, policy capturing, cognitive feedback, interpersonal learning, interpersonal conflict, social judgment theory, cognitive continuum theory

Kenneth R. Hammond [1917–2015] is perhaps one of the most important figures in the history of the psychology of human judgment and decision making. Although his influence has already been considerable, we anticipate that it will grow over time because his contributions build on a coherent and comprehensive theoretical framework, which lays a foundation for the development of a cumulative body of theory and research. It may be challenging, however, for future generations of scholars to access the breadth and depth of Hammond’s work, which spanned over seven decades, and is documented in over 100 articles, seven authored books, and five edited volumes. In this paper, our goal is to review and synthesize Hammond’s major contributions to the study of human judgment and decision making.

Hammond made at least eight major contributions that we will discuss. First, he virtually single-handedly kept Egon Brunswik’s legacy alive in psychology (Hammond, 1966; Hammond & Stewart, 2001b). Hammond built on Brunswik’s theory of probabilistic functionalism and his lens model, and he championed Brunswik’s method of representative design. Second, Hammond pioneered the use of Brunswik’s lens model as a framework for studying how individuals use information from the task environment to make clinical judgments (Hammond, 1955). This was the precursor to what is known today as ‘policy capturing’ and ‘judgment analysis’ research. Third, Hammond formulated the lens model equation, which provided a quantitative tool for modeling and analyzing both the task environment side and the human judgment side of the lens model (Hammond, Hursch & Todd, 1964; Hursch, Hammond & Hursch, 1964). Fourth, Hammond employed this equation when examining multiple-cue probability learning, making contributions regarding the effects of different forms of feedback on learning processes (Hammond & Summers, 1965; Todd & Hammond, 1965). Fifth, Hammond extended the application of the lens model to contexts in which individuals interact with one another, introducing both the interpersonal learning paradigm (Hammond, Wilkins & Todd, 1966a) and the interpersonal conflict paradigm (Hammond, 1965; 1972; 1973; Hammond, Todd, Wilkins, & Mitchell, 1966b). Sixth, Hammond and his colleagues developed social judgment theory (SJT), which integrated prior work involving the lens model into a comprehensive quantitative approach for describing judgment processes and exploring means for improving cognitive performance (Hammond, Stewart, Brehmer & Steinmann, 1975). Seventh, grounded in, but going beyond, Brunswikian concepts and SJT, Hammond developed cognitive continuum theory (Hammond, 1996a; 2000a; 2001a; Hammond, Hamm, Grassia & Pearson, 1987). He proposed that cognition moves along an intuitive-analytical continuum, with quasi-rationality as a middle ground, and that cognitive tasks induce different modes of cognition, implying that cognitive performance is dictated by the match between properties of the task and mode of cognition. Finally, Hammond moved seemingly effortlessly from conducting basic work in the laboratory to solving applied policy-related problems (Hammond, 1996a; 2007; Hammond & Adelman, 1976). In doing so, he drew attention to the dichotomy between theories of correspondence, which focus on empirical reality, and theories of coherence, which focus on internal consistency. He also drew attention to the issues of separating facts from values and balancing Type I and Type II errors in judgment under uncertainty. In the remainder of this paper, we discuss each of Hammond’s major contributions in more detail.

Hammond was key to keeping alive and advancing the legacy of the Austro-Hungarian psychologist Egon Brunswik, who worked largely in the field of perception (1940, 1943, 1944, 1952, 1955a, 1955c, 1956; Tolman & Brunswik, 1935; for a review of Brunswik’s ideas, see Dhami, Hertwig & Hoffrage, 2004). First, Hammond (1966) edited The Psychology of Egon Brunswik, which appeared just over a decade after Brunswik’s untimely death. This volume contained a eulogy by Edward Tolman; essays on Brunswik’s contributions to the science of psychology by such luminaries as Donald Campbell, Jane Loevinger, Fritz Heider, Lee Cronbach, and Roger Barker; four key papers by Brunswik himself; and a bibliography of Brunswik’s published works. Second, Hammond co-edited The Essential Brunswik (Hammond & Stewart, 2001b). It reprinted eighteen of Brunswik’s major papers accompanied by original commentaries, along with over two dozen papers discussing Brunswik’s theoretical and methodological contributions to psychology and describing applications of Brunswikian psychology to substantive problems. This volume also provided a complete annotated list of Brunswik’s publications. Third, Hammond established The Brunswik Society, with its associated annual international meeting and newsletter, which provided a setting for intellectual exchange among the next generation of neo-Brunswikian scholars.

In addition to his concrete efforts in archiving, preserving and curating Brunswik’s legacy, Hammond also built on Brunswik’s work. He was not deterred by the fact that Brunswik’s ideas were resisted and ignored by his contemporaries. Rather, Hammond vigorously championed Brunswik’s theory of probabilistic functionalism and lens model, as well as his method of representative design.

Brunswik (1943, 1952) believed that an organism functions to achieve a distal variable in its environment. This is done by using multiple, proximal cues that may be inter-correlated and are ultimately fallible. He argued that psychological processes are adapted to the probabilistic environments in which an organism functions. Brunswik (1957) saw the organism and environment as equal partners. Hammond and Stewart (2001a) suggest that the clearest statement of this fundamental thesis can be found in Brunswik’s (1957, p. 5) last paper, where he wrote:

….both organism and environment will have to be seen as systems, each with properties of their own, yet both hewn from basically the same block. Each has surface and depth, or overt and covert regions….the interrelationship between the two systems has the essential characteristic of a “coming-to-terms.” And this coming-to-terms is not merely a matter of mutual boundary or surface areas. It concerns equally as much, or perhaps more, the rapport between the central, covert layers of the two systems. It follows that, much as psychology must be concerned with the texture of the organism or of its nervous processes and must investigate them in depth, it must also be concerned with texture of the environment as it extends in depth away from the common boundary.

The theory of probabilistic functionalism had analytic and methodological corollaries. Brunswik (1944, 1955c, 1956) argued that in order to understand how the organism has adapted to its environment, researchers should employ the method of representative design. Ideally, this means randomly sampling stimuli from a defined population to which the researcher wants to generalize. This contrasts with the systematic design (i.e., manipulation and control of selected variables) commonly used in psychology. In addition, Brunswik (1943) noted that in order to measure an individual’s degree of achievement, the researcher needs to perform data analysis at the idiographic level. This departs from nomothetic analysis, which is most commonly used in the law-finding tradition.
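A small simulation can illustrate one practical consequence of the contrast between representative and systematic design. The Python sketch below is an illustration under stated assumptions, not a procedure taken from Brunswik or Hammond. It invents a population of cases in which two cues are correlated (about 0.7). It then draws a random sample of cases from that population and, separately, builds a 3 x 3 factorial design from the same two cues. The helper `pearson` and all data are hypothetical. The random sample carries the environment's inter-cue correlation into the study, whereas the factorial design sets it to zero by construction.

```python
import itertools
import math
import random


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical environment: 1,000 cases in which two cues tend to co-occur
# (population inter-cue correlation of about 0.7). All values are invented.
rng = random.Random(42)
population = []
for _ in range(1000):
    z1 = rng.gauss(0.0, 1.0)
    z2 = 0.7 * z1 + math.sqrt(1 - 0.7 ** 2) * rng.gauss(0.0, 1.0)
    population.append((z1, z2))

# Representative design: randomly sample cases from the defined population,
# so the environment's inter-cue correlation carries over into the study.
representative = rng.sample(population, 200)

# Systematic design: cross every level of one cue with every level of the
# other (a 3 x 3 factorial), which makes the cues uncorrelated by construction.
levels = [-1.0, 0.0, 1.0]
systematic = list(itertools.product(levels, levels))

r_rep = pearson(*zip(*representative))
r_sys = pearson(*zip(*systematic))
print(f"representative sample: inter-cue r = {r_rep:.2f}")
print(f"factorial design:      inter-cue r = {r_sys:.2f}")
```

The factorial result is exactly zero because every level of one cue is paired equally often with every level of the other. A judge studied with such stimuli never encounters the cue redundancy present in the environment.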

Hammond (1966, p. 16) characterized Brunswik’s theory of probabilistic functionalism as “an organic whole — a history, comprehensive theory, and a methodology.” He took the lead in extending probabilistic functionalism so that it applied to judgment and decision making processes as well as to perception, which was Brunswik’s original and primary focus. In the last few years of his life, Brunswik introduced the concepts of quasi-rationality (Brunswik, 1952) and ratiomorphic models of perception and thinking (Brunswik, 1955b; 1956, pp. 89–93). Hammond also advanced these nascent efforts. Four decades after Brunswik’s death, Hammond (2001a) chronicled his own success in:

- applying the lens model to clinical judgment;
- conducting research on learning under uncertainty;
- developing a mathematical representation of the lens model;
- expanding this model to studying interpersonal learning and conflict;
- developing cognitive continuum theory.1

We will discuss these contributions in more detail in subsequent sections of the paper. First, however, we consider Hammond’s efforts to translate Brunswik’s method of representative design and his frustrated struggle to advocate Brunswik’s ideals for a scientific psychology.

As Hammond (2001b, 2001c) lamented, representative design is not well understood in psychology (see also Dhami, 2011; Dhami et al., 2004; Gigerenzer, 2001). Hammond (2001b, p. 134) pointed out that it does not refer to:

…a demand for representation of the “real world” (a meaningless concept) but…to the extent to which the statistical properties of the laboratory task represent the statistical properties of the situation to which the results are to be generalized. In short, the same logic that is used to justify generalization over a subject population should be used to justify generalization over the task situation.

Hammond was tenacious in his promotion of representative design. It was a key element of his first paper (Hammond, 1948), of his last (posthumous) paper (Hammond & Lang, 2017), and of many papers in between (e.g., Hammond, 1996b). In particular, he clarified and further developed Brunswik’s ideas regarding representative design by distinguishing between substantive situational sampling and formal situational sampling (Hammond, 1966, 1972).

Formal situational sampling refers to the formal properties of the task (i.e., number of cues, their values, distributions, inter-correlations, and ecological validities), irrespective of content. The formal properties define the universe of stimulus (or situation) populations. For instance, cue number, values, and distributions range from 0 to infinity, and the ecological validities of cues and their inter-correlations range from -1 to 1. Any population of situations lies within these boundaries. A researcher who uses formal situational sampling can sample various combinations of formal properties. Formal situational sampling permits the construction and presentation of stimuli that are formally representative of the natural stimulus population. In order to discover what the formal properties of the task are, it is often necessary to conduct a task analysis prior to the study. This may involve interviews with those who are familiar or experienced with the task, observations of individuals performing the task, document analyses of past case records and a review of the extant literature on the task.
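One way to construct stimuli with specified formal properties is to draw cue profiles from a multivariate normal distribution. The distribution's correlation matrix encodes the ecological validities and inter-cue correlations found in a task analysis. The sketch below assumes normally distributed, standardized cues and uses a Cholesky factorization. Both are choices made for illustration, not Hammond's procedure. The target values and the function names (`cholesky`, `sample_profiles`, `pearson`) are hypothetical.

```python
import math
import random


def cholesky(matrix):
    """Return lower-triangular L with L @ L^T == matrix (must be positive definite)."""
    n = len(matrix)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(matrix[i][i] - s)
            else:
                L[i][j] = (matrix[i][j] - s) / L[j][j]
    return L


def sample_profiles(target_corr, n_cases, seed=0):
    """Draw standardized profiles whose population correlation matrix is target_corr."""
    rng = random.Random(seed)
    L = cholesky(target_corr)
    k = len(target_corr)
    profiles = []
    for _ in range(n_cases):
        z = [rng.gauss(0.0, 1.0) for _ in range(k)]  # independent standard normals
        profiles.append([sum(L[i][j] * z[j] for j in range(i + 1)) for i in range(k)])
    return profiles


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical formal properties from a task analysis. Variable order is
# [criterion, cue_1, cue_2]: criterion-cue entries are ecological validities,
# and the cue_1-cue_2 entry is the inter-cue correlation.
target = [
    [1.0, 0.6, 0.4],
    [0.6, 1.0, 0.3],
    [0.4, 0.3, 1.0],
]
profiles = sample_profiles(target, n_cases=2000, seed=1)
criterion, cue_1, cue_2 = zip(*profiles)
print(f"ecological validity of cue_1: {pearson(cue_1, criterion):.2f}")
print(f"ecological validity of cue_2: {pearson(cue_2, criterion):.2f}")
print(f"inter-cue correlation:        {pearson(cue_1, cue_2):.2f}")
```

In a real study, the sampled values would typically be rescaled or discretized to the cue formats used in the task (e.g., lane widths in feet), and the criterion column would be withheld from participants.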


An example of the use of formal situational sampling can be found in Hammond et al.’s (1987) study of 21 expert highway engineers judging highway safety. The distal criterion was the rate of accidents divided by the number of miles travelled, averaged over 7 years, for each of 40 highways. The highways were described in terms of 10 cues (e.g., lane width) that highway safety experts identified as essential for judging road safety. The values, inter-correlations, distributions, and ecological validities of eight of these cues were deduced from highway department records. The properties of the remaining two cues were measured by the experimenters from visual inspection of videotapes of each highway. The researchers were primarily interested in examining how presentation of the task affects the mode of cognition used (e.g., analytical versus intuitive). The cue information for each highway was therefore presented via filmstrips, bar graphs, and formulas.

Despite Hammond’s proposal to use formal situational sampling, as Brehmer (1979, p. 198) argued, there is “no easy road to success”. The number of possible combinations of variables may be extremely large, and the researcher needs to know which combinations should be studied. The problem of defining a reference class or sampling frame remains. Dhami et al. (2004) reviewed studies that tried to capture judgment processes and found that few had used representative design in either of its two senses. Few sampled real stimuli from the environment (for some exceptions, see Kirlik, 2006), and few constructed stimuli to be formally representative of the environment. Hammond (1996b, p. 245) himself confessed that he had not always been faithful to the method of representative design. He explained that had he been faithful to it, then, like Brunswik, “I would become isolated and ostracized.”2

Hammond (1955) was the first to use the lens model outside the study of perception. He pioneered the use of Brunswik’s (1952) lens model (see Figure 1) in the study of clinical judgment, employing it as a framework for studying how individuals use information from the task environment to make clinical judgments. This work was the precursor to ‘policy capturing’/‘judgment analysis’ research mentioned earlier.

In his 1955 landmark paper, Hammond shifted attention from solely studying the accuracy of clinical judgment to also explaining how clinicians achieve accuracy.3 This was a fundamental shift in focus: “it is suggested that the clinician not be considered a reader of instruments, but an instrument to be understood in terms of a probability model” (Hammond, 1955, p. 262). Hammond argued that the clinician and the patient are two different but interacting systems that should be considered as a whole. Research should therefore examine the relations between a clinician and his or her environment (i.e., patients). He further pointed out that the clinician’s judgment process is often ‘quasi-rational’ and difficult to communicate because it results from vicarious functioning. Brunswik (1952) first used the term quasi-rational to describe cognitive processes that are intermediate between the intuitive and analytical poles. Hammond elaborated this concept in other work (Hammond, 1996a; Hammond et al., 1987), to which we return later.

Brunswik (1956, 1957) used the concept of vicarious functioning to describe how organisms cope with an uncertain environment in which no proximal cue is a perfectly valid and reliable indicator of the distal state. In such an environment, organisms learn to rely on multiple cues, each of only partial validity and reliability, that can substitute for one another. Vicarious functioning is essential in clinical judgment because a client’s symptoms may change over time, and clients suffering from the same problem may present different symptoms. Hammond also argued that a clinician’s capacity for dealing with the intersubstitutability of cues over encounters with a series of patients should be studied using representative design.

Hammond’s points are illustrated in two studies conducted by his students (Herring, 1954; Todd, 1954; see also Hammond, 1955). In the first study, Todd (1954) asked 10 clinicians to judge the intelligence (as measured by an IQ test) of 78 patients on the basis of their Rorschach test results. The IQ test score was the objective outcome criterion. Achievement was measured as the correlation between a clinician’s judgments over a set of patients and those patients’ IQ test scores. Emulating Brunswik’s (1940) approach, correlational statistics were used to capture three kinds of relationship:

- between cues and judgments (cue utilization);
- between cues and the environmental criterion (ecological validity);
- among the cues themselves (inter-cue correlations).
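The correlational summary used in Todd's study can be sketched in a few lines. The Python below assumes one judge, a single numeric criterion, and made-up data for six cases (not Todd's data). It computes four quantities:

- achievement (judgment-criterion correlation);
- each cue's ecological validity (cue-criterion correlation);
- cue utilization (cue-judgment correlation);
- the inter-cue correlations.

The function names `pearson` and `lens_correlations` and the cue names are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def lens_correlations(cues, criterion, judgments):
    """Brunswik-style correlational summary of one judge's lens model.

    cues: dict mapping cue name -> list of cue values (one per case)
    criterion: list of criterion values (e.g., IQ test scores)
    judgments: list of the judge's judgments for the same cases
    """
    names = list(cues)
    return {
        "achievement": pearson(judgments, criterion),
        "ecological_validity": {c: pearson(cues[c], criterion) for c in names},
        "cue_utilization": {c: pearson(cues[c], judgments) for c in names},
        "inter_cue": {
            (a, b): pearson(cues[a], cues[b])
            for i, a in enumerate(names)
            for b in names[i + 1:]
        },
    }


# Made-up toy data for six cases (not Todd's data): two hypothetical cues.
cues = {"cue_A": [3, 5, 2, 6, 4, 7], "cue_B": [1, 2, 2, 3, 1, 3]}
criterion = [95, 110, 90, 118, 100, 125]
judgments = [100, 105, 95, 115, 98, 120]
summary = lens_correlations(cues, criterion, judgments)
print(round(summary["achievement"], 3))
print(summary["ecological_validity"], summary["cue_utilization"])
```

Comparing each cue's utilization coefficient with its ecological validity shows the pattern Hammond described. A judge may lean on cues with little validity, or neglect cues with high validity.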

Hammond noted that the clinicians’ performance improved when they had access to more information: the median correlation between judgments and IQ scores rose from 0.47 to 0.64 when clinicians had access to the verbal protocol data from the Rorschach. The four most valid cues were then used as predictors in two kinds of multiple linear regression equation. One equation was computed for the environment, capturing the relations between the patients’ IQ scores and the four Rorschach cues. A separate equation was computed for each clinician, capturing the relations between that clinician’s judgments of IQ and the four Rorschach cues. Each model revealed the relative weights attached to the cues. The model of the environment was then compared to each clinician’s model.4 The match between the two models explained how the clinicians attained their level of achievement. The multiple R for the model of the environment was 0.479, and the median correlation between the clinicians’ judgments of IQ and the IQ test score was 0.470. Also computed were the ecological validities of the four cues in the environment and each clinician’s utilization validities of the four cues. So were the inter-correlations among the four cues, both in the environment and as used by each clinician. The clinicians varied in the cues they used: “Certain clinicians were found to be using invalid cues, others neglected the valid ones” (Hammond, 1955, p. 261).

In his study of clinical judgment, Hammond also demonstrated that the multiple linear regression model could predict human (clinical) judgment. A model was developed for each clinician on 39 patients and cross-validated on a further 39 patients (i.e., it made predictions of each clinician’s judgments on a set of new cases). The median correlation between the models’ predictions and the clinicians’ judgments on the new cases was 0.85. Hammond (1955, p. 261) concluded that “evidently the multiple correlation model which predicts that the clinician combines the data from the Rorschach in a linear, additive fashion is a good one – it predicts quite successfully in comparison with most psychological efforts.”
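The split-half cross-validation described above can be sketched as follows. The Python assumes a simulated clinician who combines four cues by a noisy linear-additive rule; the weights and noise level are invented. It fits an ordinary least-squares model on the first 39 cases and correlates the model's predictions with the clinician's judgments on the remaining 39. The helpers `solve`, `fit_linear`, `predict`, and `pearson` are hypothetical. The fit uses the normal equations, which is adequate for a handful of well-conditioned cues but is not a general-purpose regression routine.

```python
import math
import random


def solve(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_linear(X, y):
    """Ordinary least squares with an intercept; returns [b0, b1, ..., bk]."""
    rows = [[1.0] + list(x) for x in X]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)


def predict(coefs, X):
    """Apply a fitted linear model (intercept first) to new cue profiles."""
    return [coefs[0] + sum(b * v for b, v in zip(coefs[1:], x)) for x in X]


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Simulated clinician (hypothetical, not Todd's data): 78 cases, four cues,
# judgments formed by a noisy linear-additive rule that ignores the fourth cue.
rng = random.Random(7)
X = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(78)]
judgments = [2.0 * x[0] + 1.0 * x[1] + 0.5 * x[2] + rng.gauss(0, 1) for x in X]

# Fit on the first 39 cases, then predict the clinician's judgments on the other 39.
train_X, test_X = X[:39], X[39:]
train_y, test_y = judgments[:39], judgments[39:]
coefs = fit_linear(train_X, train_y)
cross_validated_r = pearson(predict(coefs, test_X), test_y)
print(f"cross-validated r = {cross_validated_r:.2f}")
```

With real data, `X` would hold each patient's cue values and `judgments` one clinician's ratings. Repeating this for every clinician and taking the median of the cross-validated correlations gives the kind of summary that Hammond (1955) reported as 0.85.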

In the second study, Herring (1954) asked clinicians to judge patients’ responses to surgical anesthesia using their psychological test results. No objective outcome criterion was available, so neither the environment side of the lens model nor, consequently, achievement could be analyzed. Instead, Hammond demonstrated how the correspondence or agreement between the judgments of two clinicians (a medic and a psychologist) could be studied by examining the match between their regression models. Brunswik (1956, p. 30) had also noted that correlations could be used to measure “agreement among judges.”

In his first successful application of the regression model to clinical judgment, Hammond (1955, p. 261) was cautious in noting that the analyses merely demonstrated that it was possible to construct “some probability model” that captured the essential elements of the lens model. Over time, however, the similarity between the multiple linear regression model of the environment and of the clinician’s judgments led Hammond et al. (1964, p. 444) to conclude that “The clinicians’ inferential processes were nearly identical with the multiple-regression procedure both in function and in content.” When proposing further studies, they stated that “We are confident that … such studies will find small differences between the cognitive processes of the clinician, or any human subject, and the multiple-regression equation” (p. 452). Later, however, Hammond (1996b, pp. 244–245) confessed that: “a […] sin of commission on my part was to overemphasise the role of the multiple regression (MR) technique as a model for organising information from multiple fallible indicators into a judgment. There is nothing within the framework of the Lens Model that demands that MR be the one and only model of that organising process.” Researchers have now successfully developed and tested non-statistical alternatives to the regression model within the lens model context (e.g., Dhami & Ayton, 2001; Dhami & Harries, 2001; Gigerenzer & Goldstein, 1996).

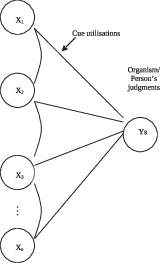

Hammond’s ground-breaking 1955 paper presaged two related, but distinguishable threads of work. In one thread, exemplified by the Todd study, the focus is on modelling both the human judgment and environment side of the lens model, as well as the interaction between them, consistent with the fundamental tenets of Brunswik’s probabilistic functionalism. In the other thread, reflected by the Herring study, the emphasis on the environmental system recedes (because it is unknowable, unavailable or not of interest), and the spotlight is on how individual judges weight and combine information to make judgments. This latter situation represents what has been called the single-system design (Cooksey, 1996; Hammond, 2001a) of the lens model (see Figure 2) and is associated with the policy-capturing/judgment-analysis approach (Brehmer & Brehmer, 1988; Stewart, 1988), which we discuss in more detail below.

In the single-system design there is no outcome criterion, or at least none that is immediately available, so researchers simply describe an individual’s judgment policy. As Cooksey (1996) points out, the single-system design is not truly Brunswikian. When the environment is unknown or unmeasurable, the design does not allow the interrelationships between the judge’s cognitive system and the task system to be examined. Nonetheless, Hammond (2001a) noted that the single-system design is the most widely used version of the lens model. Dhami et al. (2004) also observed that much of the subsequent research within SJT uses this design. SJT was developed by Hammond and his colleagues, and we discuss it later.

Figure 2: Lens Model for single-systems design.

The single-system design may be appropriate when an outcome criterion is unavailable. Cooksey (1996) enumerated a variety of valid reasons why this can happen:

- First, an outcome criterion may not be useful or appropriate because no definitive, correct answer is available or ascertainable. To overcome problems in obtaining an outcome criterion, some studies have used expert judgments as proxies for environmental criterion measures (e.g., Adelman & Mumpower, 1979; Hammond & Adelman, 1976; Mumpower & Adelman, 1980).
- Second, an outcome criterion may be difficult to obtain because of ethical or legal concerns.
- Third, an outcome criterion may be unavailable during the study period.
- Fourth, studies using hypothetical cases, or cases that represent future situations, by their very nature preclude the use of an outcome criterion.
- Finally, an outcome criterion may be omitted because it is irrelevant to the research goal, as when researchers wish solely to study agreement.

As mentioned above, analyses of how judges weight and combine information to make judgments in the single-system design have come to be known as policy capturing and judgment analysis. This usage holds both within and outside5 the Brunswikian and SJT traditions. A large body of research has emerged. Brehmer and Joyce’s (1988) edited volume, Human Judgment: The SJT View, summarizes advances since Hammond et al.’s (1975) introduction of SJT. It contains both a chapter on judgment analysis (Stewart, 1988) and a chapter on policy capturing (Brehmer & Brehmer, 1988). The former chapter acknowledges that its topic is sometimes also called ‘policy capturing’, and the latter similarly acknowledges that its focus is sometimes referred to as ‘judgment analysis’.6

Judgment analysis and policy capturing have been fully explicated by a number of scholars (Brehmer & Brehmer, 1988; Cooksey, 1996; Hammond et al., 1975; Stewart, 1988). A study by Dhami and Ayton (2001) of British judges’ bail decisions illustrates the primary features of this approach. Through a postal survey, 81 judges were presented with a set of 41 hypothetical cases. These were described in terms of nine cues (e.g., seriousness of offence, community ties). The cues were identified following a review of the literature on bail, legal guidelines and training, interviews with judges, and observations of bail hearings. Each cue varied across cases (e.g., the strength of community ties cue was dichotomous and described as strong or weak). Other cues (e.g., length of adjournment) were held constant and provided background information for all the cases. The judges were first asked to make a decision on each case (i.e., bail unconditionally, bail with conditions, or remand in custody). They then rated how confident they were in that decision. After making their decisions, the judges ranked the cues in order of the importance they had attached to them. Seven of the 41 cases were duplicates used to measure test-retest consistency in decisions, and seven were holdouts used for model validation. Finally, studies interested in achievement would also document the outcome (or criterion) for each case, although this was not possible for Dhami and Ayton (2001).

Once the judgment data have been collected, judgment policies are captured for each individual.7 Traditionally, policies are captured using multiple linear regression (Cooksey, 1996; Hammond et al., 1975; Stewart, 1988). The criterion (dependent) variable is the judgment and the cues are the predictor (independent) variables; an individual’s judgments are regressed on the cues. This procedure yields a weighted linear model that can be cross-validated. The model describes an individual’s judgment policy in terms of:

- the statistically significant cues in the model;
- the relative cue weights;
- the form of the function relating the cues to the judgments (e.g., linear);
- the rule used to integrate the cues into a judgment (i.e., additive);
- the individual’s predictability as measured by the model (i.e., R²).

Inter-individual differences (or agreement) in decisions and policies may then be examined. Participants’ ‘insight’ into their decision making may also be examined by comparing their self-reported policies with their models. Finally, intra-individual inconsistency in making decisions may be studied by comparing the decisions made in the test-retest situations.
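For the simplest case of two cues, the standardized regression weights and R² can be computed directly from three correlations, which makes the components of a captured policy easy to see. The sketch below assumes exactly two cues that are not perfectly correlated. The function `capture_two_cue_policy` and the toy judge are hypothetical. Relative weights are computed here as each absolute beta divided by the sum of absolute betas, which is one common convention among several.

```python
import math


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def capture_two_cue_policy(cue_1, cue_2, judgments):
    """Standardized regression of one judge's judgments on two cues.

    Returns the standardized beta weights, relative weights, and R^2.
    Assumes the two cues are not perfectly correlated.
    """
    r1y = pearson(cue_1, judgments)
    r2y = pearson(cue_2, judgments)
    r12 = pearson(cue_1, cue_2)
    denom = 1 - r12 ** 2
    beta_1 = (r1y - r12 * r2y) / denom
    beta_2 = (r2y - r12 * r1y) / denom
    r_squared = beta_1 * r1y + beta_2 * r2y  # share of judgment variance explained
    total = abs(beta_1) + abs(beta_2)
    return {
        "beta": (beta_1, beta_2),
        "relative_weight": (abs(beta_1) / total, abs(beta_2) / total),
        "R2": r_squared,
    }


# Hypothetical judge who applies the rule 3 * cue_1 + cue_2 without error
# to the four cases of a 2 x 2 factorial design.
cue_1 = [0, 0, 1, 1]
cue_2 = [0, 1, 0, 1]
judgments = [3 * a + b for a, b in zip(cue_1, cue_2)]
policy = capture_two_cue_policy(cue_1, cue_2, judgments)
print(policy)
```

Because this toy judge applies the rule without error, R² equals 1 and the relative weights are 0.75 and 0.25. A real judge's inconsistency would lower R², and the sign of each beta gives the direction in which a cue is used.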

Although there are exceptions, as Brehmer and Brehmer (1988) point out, reviews of judgment analysis and policy capturing research have generally concluded that the studies have yielded consistent findings. This holds irrespective of the number and type of decision makers sampled and of the nature and content of the judgment tasks involved (Brehmer, 1994; Brehmer & Brehmer, 1988; Camerer, 1981; Cooksey, 1996; Hammond et al., 1975; Karelaia & Hogarth, 2008; Libby & Lewis, 1982; Slovic & Lichtenstein, 1971).8 Studies have typically found that the multiple linear regression model is descriptively valid, as it provides an adequate fit to individuals’ judgment data. Researchers have suggested that a high R² implies that judgments are the result of a linear additive process (Hammond et al., 1964). However, as we pointed out earlier, Hammond recognized the paramorphic nature of the regression model. In other words, it can reproduce judgments without mirroring the process that generated them. Other researchers have since successfully captured human judgment policies using non-statistical and even non-compensatory models (e.g., Dhami & Ayton, 2001; Dhami & Harries, 2001, 2010). Gerd Gigerenzer and his colleagues initiated a program of research modeling the task environment. This work demonstrates the descriptive and predictive utility of simple (non-statistical and sometimes non-compensatory) models called ‘fast and frugal’ heuristics (e.g., Gigerenzer, Todd & the ABC Research Group, 1999; see also Gigerenzer, Hertwig & Pachur, 2011).

The regression model indicates the number of cues used by an individual (i.e., those that have statistically significant beta weights), the relative weights of the cues (i.e., beta weights) and the direction in which cues were used (i.e., the sign of the beta weight). It has typically been found that regression models contain on average three cues (Brehmer, 1994; Slovic & Lichtenstein, 1971). Most studies have reported that participants do not use all of the cues available. Studies have often found that a few cues are weighted more heavily than the others. These findings on cue use are compatible with the more recent research demonstrating the descriptive validity of non-compensatory models that imply judgments may be based on only one cue (e.g., Dhami & Ayton, 2001; Dhami & Harries, 2001; Gigerenzer & Goldstein, 1996).

Hammond et al. (1975) proposed that consistency should be measured in terms of the variance of judgments made in a test-retest situation. Studies using such measures of consistency have often correlated the two sets of decisions, although other indices of agreement may also be used (e.g., Gillis, Lipkin & Moran, 1981). It has generally been found that correlations are moderate for the majority of participants in a study. Note that inconsistency implies some degree of judgment inaccuracy.
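Test-retest consistency on duplicated cases can be summarized by the correlation between the two sets of decisions. For categorical decisions such as bail outcomes, the proportion of identical decisions is another index. The sketch assumes, for illustration only, that the three bail options can be coded ordinally (1 to 3). The data and the function name `retest_consistency` are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def retest_consistency(first, repeat):
    """Consistency indices for one judge's decisions on duplicated cases."""
    agreement = sum(a == b for a, b in zip(first, repeat)) / len(first)
    return {"r": pearson(first, repeat), "exact_agreement": agreement}


# Hypothetical decisions on seven duplicated cases, coded ordinally:
# 1 = bail unconditionally, 2 = bail with conditions, 3 = remand in custody.
first_pass = [2, 1, 3, 2, 1, 3, 2]
second_pass = [2, 1, 3, 1, 1, 3, 2]
consistency = retest_consistency(first_pass, second_pass)
print(consistency)
```

Here six of the seven duplicated cases receive the same decision, so exact agreement is 6/7.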

Researchers have also compared the captured policies with individuals’ stated policies, as elicited by various direct report methods.9 Reilly and Doherty (1992) describe three possible comparisons:

- the subjective cue weights may be compared with the statistical weights derived from the regression model;
- the fit of models containing each set of weights may be compared;
- the predictions made by the two sets of weights may be compared.

According to some, such comparisons provide a measure of an individual’s insight into his or her judgment policy (e.g., Ullman & Doherty, 1984). Todd (1954; see also Hammond, 1955, and Summers, Taliaferro & Fletcher, 1970) had found that his participants were not able to accurately articulate the weights they attached to the cues. However, direct methods may be unreliable and invalid for demonstrating self-insight because introspecting on and articulating policies is difficult. Acknowledging this, Reilly and Doherty (1989, 1992) proposed an alternative method: policy recognition. Here, participants are asked to identify their own policy, defined in terms of cue weights, from among a set of other policies. This method revealed a greater degree of insight, as participants were quite successful in recognizing their own policies.
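A direct comparison of stated and captured policies can be sketched by converting both sets of weights to relative weights. The two profiles are then correlated across cues, and their absolute differences are averaged. These two indices, the function names, and the data are hypothetical illustrations. The sketch implements only a simple weight-profile comparison, not the full set of comparisons described by Reilly and Doherty (1992).

```python
import math


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def normalize(weights):
    """Rescale absolute weights so they sum to 1 (relative weights)."""
    total = sum(abs(w) for w in weights)
    return [abs(w) / total for w in weights]


def insight_indices(stated, captured):
    """Compare a judge's stated cue weights with weights from a captured policy."""
    s, c = normalize(stated), normalize(captured)
    return {
        "weight_correlation": pearson(s, c),
        "mean_abs_difference": sum(abs(a - b) for a, b in zip(s, c)) / len(s),
    }


# Hypothetical judge: self-reported importance points for four cues versus
# standardized beta weights from that judge's regression model.
stated = [40, 30, 20, 10]
captured = [0.10, 0.55, 0.05, 0.30]
result = insight_indices(stated, captured)
print(result)
```

A weight correlation near 1 and a small mean absolute difference would suggest good insight. In this invented example, the judge's stated ordering of cue importance bears little relation to the captured weights.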

Hammond and his colleagues introduced the lens model equation (Hammond et al., 1964; Hursch et al., 1964) to the study of judgment processes. This equation provided the requisite quantitative tool for modeling and analyzing both the environment side and the human judgment side of Brunswik’s lens model. The lens model equation is the analytic complement of the conceptual framework articulated in the lens model. The original formulation by Hursch et al. (1964) was simplified by Tucker (1964), whose version is shown in Equation 1 (see also Karelaia & Hogarth, 2008).

$$r_a = G\,R_e\,R_s + C\sqrt{1-R_e^2}\,\sqrt{1-R_s^2} \qquad (1)$$

Here, ra represents achievement, and is measured by the correlation between the judgments and the criterion.

Re represents the predictability of the environment and thus the upper limit of achievement. It is measured by the linear multiple correlation between the cues and the criterion.

Rs represents an individual’s ability to utilize his or her knowledge of the task in a consistent manner, and is measured by the linear multiple correlation between the cues and the judgments.

G represents the match of the linear components of the two models, namely the model of the environment and of the individual. It is measured by the correlation between the linearly predictable variance in the environment and the linearly predictable variance in the individual’s judgments.

C represents the non-linear component of achievement, and is measured by the correlation between the residuals from the linear regressions of the environment and the individual.
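The following Python sketch computes the five terms from raw data. It assumes ordinary least squares with an intercept as the linear model on each side of the lens. The data are simulated, and the function names are hypothetical. Each regression's residuals are uncorrelated with any linear combination of the cues, so Equation 1 then holds exactly in the sample, which the last line checks.

```python
import numpy as np


def _ols_fitted(X: np.ndarray, y: np.ndarray) -> np.ndarray:
    """Fitted values from a least-squares regression of y on X plus an intercept."""
    X1 = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    return X1 @ coef


def _r(a, b) -> float:
    return float(np.corrcoef(a, b)[0, 1])


def lens_model(cues, criterion, judgments) -> dict:
    env_hat = _ols_fitted(cues, criterion)     # linear model of the environment
    judge_hat = _ols_fitted(cues, judgments)   # linear model of the judge
    return {
        "ra": _r(criterion, judgments),                        # achievement
        "Re": _r(criterion, env_hat),                          # task predictability
        "Rs": _r(judgments, judge_hat),                        # cognitive control
        "G": _r(env_hat, judge_hat),                           # linear knowledge
        "C": _r(criterion - env_hat, judgments - judge_hat),   # residual knowledge
    }


# Simulated task (hypothetical): both sides share a curvilinear term in cue 0
rng = np.random.default_rng(1)
cues = rng.normal(size=(200, 3))
criterion = cues @ np.array([0.6, 0.3, 0.1]) + 0.3 * cues[:, 0] ** 2 + rng.normal(scale=0.5, size=200)
judgments = cues @ np.array([0.4, 0.4, 0.0]) + 0.2 * cues[:, 0] ** 2 + rng.normal(scale=0.5, size=200)
p = lens_model(cues, criterion, judgments)

# Equation 1 rebuilds achievement from the other four terms
rhs = p["G"] * p["Re"] * p["Rs"] + p["C"] * np.sqrt(1 - p["Re"] ** 2) * np.sqrt(1 - p["Rs"] ** 2)
```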

Each of the major statistical terms of the lens model equation can be directly translated into the conceptual framework of Brunswik’s lens model itself. Achievement (ra) is determined by an individual’s ability to detect and utilize both linear and non-linear patterns in the environment. Stewart (1988) notes that in most judgment tasks, the non-linear component is so small as to be negligible. If the non-linear component is large, it indicates that the multiple linear regression model is not capturing all of the consistent variation in judgment.

Task predictability (Re) is measured in terms of the linear multiple correlation between the cues and the criterion. Perfect achievement is not possible when Re is less than 1, because no one can always be correct in a world that is not perfectly consistent. Task predictability thus sets an upper mathematical bound on achievement: ra ≤ Re. Brehmer (1970, 1972, 1973b) demonstrated not only that Re sets an upper bound on ra, but also that lower levels of task predictability tend to induce sub-optimal levels of achievement. This happens because less predictable tasks tend to elicit lower levels of cognitive control, Rs. Brehmer (1976) suggests that judges become less consistent as they modify their judgment policies in an attempt to improve their achievement. Because of task uncertainty, however, they receive noisy feedback, which makes it difficult to assess whether changes in judgment policy have led to improved performance. As summarized by Stewart, Roebber, and Bosart (1997, p. 206), “Not only does task predictability place an upper bound on potential accuracy …, but a number of studies have found evidence that the reliability of judgment is lower for less predictable tasks …Judges respond to unpredictable tasks by behaving less predictably themselves.”

Cognitive control (Rs) refers to the relationship between an individual’s judgments and environmental cues. It is measured in terms of the linear multiple correlation between cues and judgments. Building on earlier work, Hammond and Summers (1972) proposed that performance in cognitive tasks involves two distinct processes: acquisition of knowledge and cognitive control over knowledge already acquired. Just as task predictability places an upper bound on achievement, so does cognitive control: ra ≤ Rs. In subsequent work, Hammond and colleagues (Hammond et al., 1975) distinguished between cognitive control and consistency. Cognitive control refers to the similarity between an individual’s judgments and the predictions of the best-fitting model of that individual. Consistency refers to the similarity between repeated judgments made on identical cases. Consistency places an upper bound on cognitive control.
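Both bounds can be read off Equation 1 in a few steps. This derivation assumes that the non-linear term is negligible (C ≈ 0), which Stewart (1988) reports is typical, and uses |G| ≤ 1 and 0 ≤ Re, Rs ≤ 1:

$$
\begin{aligned}
r_a &= G\,R_e\,R_s + C\sqrt{1-R_e^2}\,\sqrt{1-R_s^2} \\
    &\approx G\,R_e\,R_s && (C \approx 0) \\
    &\le R_e\,R_s && (|G| \le 1) \\
    &\le \min(R_e,\, R_s) && (0 \le R_e, R_s \le 1)
\end{aligned}
$$

For instance, take the Re of 0.79 that Hammond et al. (1964) found for Grebstein’s task and a hypothetical Rs of 0.80. Achievement could then be at most about 0.79 × 0.80 ≈ 0.63, even with perfect linear knowledge (G = 1). When C is not negligible, these inequalities need not hold exactly.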

Knowledge (G) is assessed in terms of the correlation between the best fitting model of the human system and the best fitting model of the environmental system. It represents the achievement that would be attained if both the model of the judge and the task were executed with perfect control. In general, G will be maximized when the judge’s cue utilizations (weights and function forms) parallel the ecological validities of the environmental cues, presuming that the judge uses the same organizing principle to combine the information into an overall judgment as is found in the environmental system. As Karelaia and Hogarth (2008) note, however, it is possible for judges to obtain high levels of G, even when the cue utilizations do not precisely match the ecological validities. This can happen when inter-cue correlations are high.
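A little arithmetic confirms Karelaia and Hogarth’s point. When two linear models combine the same cues, G equals the correlation between their predictions. That correlation depends only on the two weight vectors and the cue covariance matrix. The Python sketch below assumes standardized cues and uses hypothetical weights and correlations. It compares an environment that relies entirely on cue 1 with a judge who relies entirely on cue 2.

```python
import numpy as np


def linear_knowledge(w_env, w_judge, cue_cov) -> float:
    """G for two linear models that share the same cues.

    G is the correlation between X @ w_env and X @ w_judge, which depends
    only on the weights and the cue covariance matrix.
    """
    w_e, w_s, S = map(np.asarray, (w_env, w_judge, cue_cov))
    return float(w_e @ S @ w_s / np.sqrt((w_e @ S @ w_e) * (w_s @ S @ w_s)))


env = [1.0, 0.0]    # environment relies entirely on cue 1 (hypothetical)
judge = [0.0, 1.0]  # judge relies entirely on cue 2 (hypothetical)
g_independent = linear_knowledge(env, judge, [[1.0, 0.0], [0.0, 1.0]])
g_redundant = linear_knowledge(env, judge, [[1.0, 0.9], [0.9, 1.0]])
```

With independent cues, G is 0. When the two cues correlate at 0.9, G is 0.9, even though the weights are completely mismatched. The same arithmetic underlies the false agreement between judges that is discussed later in this paper.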

Finally, C is also a measure of knowledge. It represents the consistent non-linear variation shared between the individual and environment that is not captured by the regression models.

Cooksey (1996, p. 165, words in square brackets added) sums up the situation nicely:

The LME [lens model equation] is an elegant, precise mathematical formulation of a simple truth. That is, a person’s ability to make correct judgments about reality is a function of three things: (1) how predictable the world is (Re), (2) how well the person knows the world (G and C), and (3) how consistently the person can apply his or her knowledge (Rs).

In the first application of the lens model equation, Hammond et al. (1964) reanalyzed data from a study by Grebstein (1963), who compared naïve, semi-sophisticated and sophisticated clinicians’ predictions of 30 patients’ IQ test scores using 10 cues from the patients’ Rorschach tests. Grebstein had concluded that performance did not increase with experience and that there was room for improvement. Hammond et al. (1964) used the lens model equation to determine the upper limit of achievement for this task and found that Re was 0.79. They also demonstrated that the three groups of clinicians did not differ in terms of Rs or C, as all groups were highly linear. The three groups did, however, differ in terms of G, because clinicians in the more sophisticated groups exhibited a greater match between the ecological validities of the cues and their utilization validities.

Once individuals’ judgment policies have been captured, they can be compared with the model of the task in order to examine individual achievement. As mentioned, the upper limit of achievement is set by the predictability of the task. Libby and Lewis (1982) concluded that studies in accounting (in particular, prediction of business failure and prediction of security return) reported high levels of achievement. Overall, studies comparing judgments against an outcome criterion have demonstrated fairly high levels of achievement or judgmental accuracy as measured by the lens model equation; ra averaged 0.56 among the 249 studies in a meta-analytic review by Karelaia and Hogarth (2008).

Since the first studies were conducted over 50 years ago, an extensive body of research has used or referred to the lens model equation. The precise number of studies is difficult to estimate, but efforts to compile bibliographies (Holzworth, 1999) and to conduct meta-analyses (Karelaia & Hogarth, 2008) provide evidence that there are, at a minimum, hundreds of such studies. Methodologically, Castellan (1973, 1992), Cooksey and Freebody (1985), Stewart (1976, 1988, 1990) and Stewart and Lusk (1994), among others, have made important modifications, suggested significant extensions, or examined the statistical and empirical behavior of parameters of the lens model equation.

By extending Brunswik’s lens model from the study of perception to judgment, and then formulating the lens model equation, Hammond opened up the possibility of new research approaches and agendas. First, these tools could be used to study how individuals learn to make judgments in probabilistic multiple-cue learning situations. We discuss this topic in the next section. Second, these tools offered the opportunity to study inter-individual (dis)agreement in judgment, interpersonal learning, and interpersonal conflict. This topic is discussed in the subsequent section.

The lens model equation enabled Hammond to undertake a program of research on multiple-cue probability learning in which he and others made numerous contributions. Holzworth (1999) provides a good overview of these efforts:

Cue probability learning involves an organism attempting to achieve (learn) a relationship with some distal criterion variable by attending to one or more multiple fallible indicators (differentially valid cues). Smedslund (1955) conducted the first multiple and single cue probability learning study after Brunswik (Brunswik & Herma, 1951), but it was Hammond and his students in the United States (Hammond, Hursch & Todd, 1964; Hursch, Hammond & Hursch, 1964), and Björkman (1965) and his student Brehmer (1972) in Sweden who initiated extensive programs of research. During a typical cue probability learning experiment a person makes judgments based on some number of probabilistic cues over a series of trials. The object is to correctly predict the quantitative or categorical criterion value on each trial. Cues differ in terms of their relevance (ecological validity) to the criterion. Trial by trial (outcome) feedback may be given on each trial, and/or cognitive feedback may be given after subsets of trials. Cognitive feedback concerns characteristics of the person’s cognitive processes as well as characteristics of the task ecology.
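The trial structure described in this passage can be sketched as a small simulation. Every element of it is an illustrative assumption. The environment is a linear, noisy cue-criterion relation. The default of five blocks of 20 trials mirrors the Hammond and Summers (1965) design described next. The simulated participant is a simple delta-rule learner that receives outcome feedback only. This learner was chosen for brevity and is not a model drawn from the studies reviewed here.

```python
import numpy as np


def run_mcpl(cue_weights, n_blocks=5, trials_per_block=20, lr=0.05, noise=0.5, seed=0):
    """Simulate outcome-feedback learning in a multiple-cue probability task.

    The environment makes the criterion a noisy weighted sum of the cues.
    The learner (a hypothetical delta-rule model) judges with its current
    weights, sees the true criterion after each trial (outcome feedback),
    and shifts its weights to reduce the error. Returns per-block
    achievement (judgment-criterion correlation) and the final weights.
    """
    rng = np.random.default_rng(seed)
    v = np.asarray(cue_weights, dtype=float)
    w = np.zeros_like(v)
    achievement = []
    for _ in range(n_blocks):
        cues = rng.normal(size=(trials_per_block, len(v)))
        criterion = cues @ v + rng.normal(scale=noise, size=trials_per_block)
        judgments = np.empty(trials_per_block)
        for t in range(trials_per_block):
            judgments[t] = cues[t] @ w             # judgment made before feedback
            error = criterion[t] - judgments[t]    # outcome feedback on this trial
            w += lr * error * cues[t]              # delta-rule weight update
        achievement.append(float(np.corrcoef(judgments, criterion)[0, 1]))
    return achievement, w


achievement_by_block, learned_weights = run_mcpl([0.6, 0.3, 0.1])
```

Cognitive feedback could be modeled by also passing the learner summaries of the task between blocks. That extension is left out here.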

In one of the earliest multiple-cue probability learning studies, Hammond and Summers (1965) gave three groups different amounts of information about the task in addition to different amounts/forms of outcome feedback (i.e., no information, information that the task contained linear and non-linear cue-criterion function forms, and information that in addition identified the linear and non-linear cues). They asked individuals to predict a criterion value from two cues, one linearly related to the criterion and one non-linearly related. All groups showed learning over five blocks of 20 trials and all groups learned to use the linear cue more efficiently than the non-linear cue. However, individuals in the group given the most information showed a greater degree of achievement and were more likely to learn to use the non-linear cue. The two groups who were provided information about the task were also better at learning the ecological validities of the cues.

Holzworth’s 1999 annotated bibliography identified 315 studies of single- and multiple-cue probability learning conducted up to that date. Several important empirical regularities have emerged from the literature. For instance, people learn positive cue-criterion relations faster than negative ones; they can slowly learn to track changes in relative cue weights over time; they learn to use cues faster than they learn the cues’ function forms; and they typically do not use cue redundancies effectively (Klayman, 1988; Slovic & Lichtenstein, 1971). Generally speaking, the findings from the multiple-cue probability learning paradigm indicate that individuals can learn about and adapt to the formal properties of the task, such as cue validities and the linearity of cues. However, learning tends to be better and faster when task predictability (Re) is higher (Brehmer, 1973a, 1974). It has also been shown that people find it more difficult to learn non-linear cue-criterion relations than linear ones, and that they have difficulty applying knowledge about non-linear relations consistently (Deane, Hammond & Summers, 1972).

Hammond initiated a line of research within the multiple-cue probability learning paradigm that proved to be of particular consequence. This concerned the distinction between, and differential effects of, outcome versus cognitive feedback. It was clear from Hammond and Summers’ (1965) study that providing information about properties of the task in addition to traditional outcome feedback improved learning. Thus, Todd and Hammond (1965) developed the procedure of cognitive feedback (sometimes also known as lens model feedback).10 This involves providing information about the formal properties of the task, the individual’s judgment policy (i.e., utilization validities, Rs, consistency and cue-judgment function forms), and the match between properties of the environment and the individual’s judgment policy (i.e., achievement, G and C; Balzer, Doherty & O’Connor, 1989; Doherty & Balzer, 1988).

Todd and Hammond (1965) provided participants with feedback on their level of achievement, cue utilization validities and the ecological validities of the cues, for each of eight blocks of 25 trials. They found that cognitive feedback led to significantly greater achievement than did outcome feedback.11 They concluded that cognitive feedback enables people to compare their understanding of the task with its actual properties and to discover where they are using cues inappropriately. This study was followed by a series of additional ones, which revealed that participants given only outcome feedback learned less rapidly than those given cognitive feedback (Deane et al., 1972; Hammond, 1971; Hammond & Boyle, 1971; Hammond, Summers & Deane, 1973).
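The three quantities in Todd and Hammond’s feedback can be computed for a block of trials as simple correlations. The Python sketch below is illustrative, and the function name and data are hypothetical. It omits the other elements of full cognitive feedback, such as Rs and G.

```python
import numpy as np


def cognitive_feedback(cues, criterion, judgments) -> dict:
    """Block-level feedback of the kind given in Todd and Hammond (1965).

    ecological validities:   cue-criterion correlations (the task)
    utilization validities:  cue-judgment correlations (the judge's policy)
    achievement:             judgment-criterion correlation (the match)
    """
    cues = np.asarray(cues, dtype=float)
    k = cues.shape[1]
    return {
        "ecological_validities": [float(np.corrcoef(cues[:, j], criterion)[0, 1]) for j in range(k)],
        "utilization_validities": [float(np.corrcoef(cues[:, j], judgments)[0, 1]) for j in range(k)],
        "achievement": float(np.corrcoef(judgments, criterion)[0, 1]),
    }


# Hypothetical block: the criterion tracks cue 1, but the judge tracks cue 2
cues = [[1, 0], [2, 1], [3, 0], [4, 1], [5, 0]]
criterion = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
judgments = np.array([0.0, 1.0, 0.0, 1.0, 0.0])
fb = cognitive_feedback(cues, criterion, judgments)
```

For this block, the feedback would show an ecological validity of 1 for cue 1, a utilization validity of 1 for cue 2, and an achievement of 0. Together, these point the judge directly to the misused cue.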

Other research has found that providing outcome feedback in addition to cognitive feedback may actually impair performance (Holzworth & Doherty, 1976); that learning is slow and difficult with outcome feedback alone (Brehmer, 1980; Klayman, 1988);12 and that cognitive feedback is superior to no feedback at all (Doherty & Balzer, 1988). Unlike cognitive feedback, outcome feedback in stable environments does not provide information useful for making future judgments.

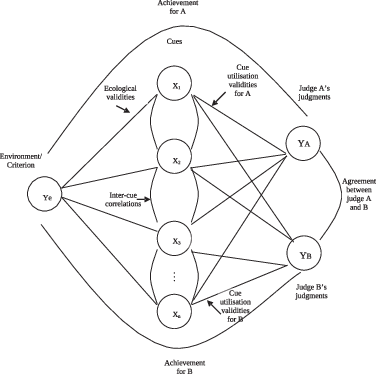

Figure 3: Lens model for study of interpersonal learning and interpersonal conflict (adapted from Hammond [1965] and Hammond et al. [1966b]).

The usefulness of cognitive feedback, however, may need to be buttressed by other information. Balzer et al. (1989) concluded that providing people with information about the characteristics of their own judgment performance but not about the appropriateness of that performance does little to improve performance compared to providing information about the characteristics of the task. More recently, Karelaia and Hogarth (2008) concluded that information about the task was more useful than feedback about the judgment policy alone, or simply outcome feedback. As Brehmer (1979) points out, in real world conditions feedback is not always available and people are not consciously trying to learn the task. In fact, analyzing feedback requires cognitive resources, which may be lacking in complex tasks (Harvey, 2011).

Among Hammond’s most significant contributions was his extension of the lens model to social contexts in which individuals learn from or about one another, or are in judgment conflict with one another. Brunswik’s probabilistic functionalism is based on the double-system lens case, where the two focal systems are the individual and the environment. This asocial focus is unsurprising given that Brunswik was primarily concerned with perception. For Brunswik (1956, p. 35), the study of agreement was the only time when more than one individual was “brought into the picture.”

In an innovative and revolutionary development, Hammond moved the lens model into a social context, introducing both the interpersonal learning (IPL) paradigm (Hammond, 1972; Hammond et al., 1966a; see also Earle, 1973) and interpersonal conflict (IPC) paradigm (Hammond, 1965, 1973; Hammond et al., 1966b). The triple-system lens model for the IPL and IPC paradigms is shown in Figure 3. In Hammond’s (2001a, p. 471) own view, the triple-system case represented “perhaps the most important expansion of Brunswik’s lens model. It is possibly one that Brunswik did not anticipate.”

In both the IPL and IPC paradigms, the judges face a common task, where there is an environmental criterion with which multiple, proximal cues are imperfectly associated. Judges attempt to infer the value of the criterion on the basis of available cues. The IPL and IPC paradigms share much in common. The main differences lie in the specific elements of the triple-system case where attention is focused. In the IPL paradigm, the focus may be either on learning about or learning from the other person, whereas in the IPC paradigm, the focus is on reducing disagreement with the other person. We present these two paradigms below.

Interpersonal learning about the other person is focused on understanding the other person and developing the ability to predict how he or she will respond to the task. The ability to predict accurately the other person’s judgments requires an understanding of the weights, function forms, and organizing principle that the other person uses to combine information from cues into a judgment. Prediction accuracy is also constrained by the degree of cognitive control the other person exercises in making judgments. The other person’s Rs plays the same role as Re does in the two-system case, constraining the upper limit of achievement.

In one of the original interpersonal learning studies, Hammond et al. (1966b) found that, on average, paired participants were able to predict one another’s responses quite well, as well as one another’s differential cue weights, linear (although not to the extent hypothesized), and non-linear cue use. Participants were also more likely to learn about the other person if that person was more reliable, and through interaction each pair’s policy similarity increased.

Some research on interpersonal learning about the other person has focused on the ability to predict another’s judgments when there is no common environmental criterion, for instance, in negotiation, when the relevant judgments are about the desirability of potential settlements (Balke, Hammond & Meyer, 1973; Miller, 1973; Mumpower, Sheffield, Darling & Milter, 2004).

Research on interpersonal learning from the other person focuses on understanding how a judge learns about a task by observing the judgments that another person makes. Clearly, interpersonal learning from the other person is not independent of interpersonal learning about the other person — to learn about the task from another person, one needs to learn how the other person interacts with the task. The other person must convey relevant information about the task for interpersonal learning to be better than individual-only task learning.

Hammond (1972) found that function forms affected cue utilization validities. For instance, after interpersonal learning, individuals trained to use a non-linear cue could give up reliance on that cue and learn to use a linear cue faster than individuals trained to use a linear cue. In a set of three experiments, Earle (1973) reported that participants taught to rely solely on linear cues required interpersonal learning from participants using non-linear cues in order to switch to using non-linear ones, but not vice versa. Interpersonal learning was also necessary for learning negative linear or non-linear rules.

Research has also investigated the effects of task characteristics on interpersonal learning and found the effects to be similar to those found in the multiple-cue probability learning paradigm, with the exception that non-linear policies are easier to learn through interpersonal learning (Hammond et al., 1975). Cognitive feedback has also been shown to be useful in interpersonal learning tasks (Balke et al., 1973; Miller, 1973).

Judges may find themselves in false agreement, which impedes interpersonal learning, when high inter-cue correlations lead them to reach similar judgments despite having different policies (Mumpower & Hammond, 1974). On the other hand, a lack of cognitive control means that parties may disagree in practice even though they agree in principle. This is referred to as false disagreement (Dhami & Olsson, 2008). These latter observations lead us to describe Hammond’s IPC paradigm.

Research in the IPC paradigm is motivated by Hammond’s observation (1965, 1973; Hammond et al., 1966b) that not all conflict and disagreement stem from differences in incentives or motivations. Cognitive conflict may arise due to differences in what people “believe to be the efficient, just, and moral ways to solve their problems” (Hammond, 1973, p. 189). As Hammond and Grassia (1985, p. 233) put it “People dispute many things besides differential gain, or who gets what. They also disagree about (a) the facts (what is, what was), (b) the future (what will be), (c) values (what ought to be), and (d) action (what to do).”

As the triple-system lens model in Figure 3 illustrates, there are four primary potential sources of cognitive conflict. Judges may disagree about the relative importance (i.e., relative weights) of the proximal cues; they may disagree about the relationship between values of the cues and values of the criterion (i.e., the appropriate function forms for cues); they may disagree about the appropriate rule for combining information from cues into a judgment (i.e., the organizing principle); and they may disagree because one or both judges are unable to make judgments in a consistent way (i.e., imperfect cognitive control).

In a standard interpersonal conflict experiment (Brehmer, 1976; Cooksey, 1996; Hammond, 1965; 1973), participants may be selected either because they already have conflicting judgment policies or they may be taught to develop conflicting policies. Unaware that they have different policies, participants are brought together and asked to co-operate on solving a set of problems where cues are probabilistically related to the criterion. On each trial, they consider the available information and make judgments about the criterion variable individually and then communicate these to one another. If they disagree they must discuss the problem until they reach an acceptable joint response. They are then asked to reconsider their original decisions, and these revisions remain private. Finally, they are presented with the correct solution. Conflict or agreement is therefore defined objectively as the actual differences in the judgments made by the two individuals.

Conflict may be due to systematic and non-systematic cognitive differences in the way people perform the task. Systematic differences refer to features of judgment policies such as the relative cue weights and non-systematic differences refer to the idea that people may exercise imperfect cognitive control in the application of their policies (Mumpower & Stewart, 1996). Research has shown that, over a series of trials, participants unlearned their conflicting policies and developed similar ones, however, conflict persisted because individuals simultaneously became more inconsistent in applying their revised policies. Such non-systematic differences accounted for more conflict than did systematic differences in judgment policies (Brehmer, 1976). The aforementioned findings have been replicated using different types of participants and task conditions (Hammond & Brehmer, 1973).
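In the triple-system case, the lens model equation can be applied with the second judge in place of the criterion. Agreement between two judges then decomposes into policy similarity (G, the systematic part) and each judge’s cognitive control (Rs1 and Rs2, the non-systematic part). The Python sketch below assumes linear OLS policies for both judges, and its names and data are hypothetical.

```python
import numpy as np


def _fitted(X, y):
    """Least-squares fitted values with an intercept."""
    X1 = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    return X1 @ coef


def _r(a, b) -> float:
    return float(np.corrcoef(a, b)[0, 1])


def agreement_decomposition(cues, judge1, judge2) -> dict:
    h1, h2 = _fitted(cues, judge1), _fitted(cues, judge2)
    g, rs1, rs2 = _r(h1, h2), _r(judge1, h1), _r(judge2, h2)
    c = _r(judge1 - h1, judge2 - h2)
    return {
        "agreement": _r(judge1, judge2),
        "G": g,        # similarity of the two captured policies (systematic)
        "Rs1": rs1,    # cognitive control of judge 1 (non-systematic if < 1)
        "Rs2": rs2,    # cognitive control of judge 2
        "linear_term": g * rs1 * rs2,
        "residual_term": c * np.sqrt(1 - rs1 ** 2) * np.sqrt(1 - rs2 ** 2),
    }


# Hypothetical pair: identical policies, but both apply them inconsistently
rng = np.random.default_rng(3)
cues = rng.normal(size=(100, 2))
policy = np.array([0.5, 0.5])
judge1 = cues @ policy + rng.normal(scale=1.0, size=100)
judge2 = cues @ policy + rng.normal(scale=1.0, size=100)
d = agreement_decomposition(cues, judge1, judge2)
```

The two simulated judges share the same policy, so G is close to 1. Their agreement is nevertheless much lower, because each applies the policy inconsistently. This pattern is qualitatively similar to the one Brehmer (1976) reported.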

Brehmer’s (1976) research demonstrated how the degree and nature of conflict (i.e., whether it is due to systematic or non-systematic differences) are affected by task conditions. For example, he showed the following:

- Policy consistency is lower in less predictable tasks, leading to less agreement.
- Non-linear cues lead to lower consistency but do not affect policy similarity.
- When the task contains linear cues and people have to use only one cue, they are more consistent, but their policy similarity is unaffected.
- When the task contains both linear and non-linear cues and people have to use only one cue, their policy similarity and consistency are higher.
- Inter-cue correlations lead to less policy similarity.

Hammond and Brehmer (1973) applied the technique of cognitive feedback and developed a cognitive aid to conflict resolution called POLICY (originally called COGNOGRAPH). This was an interactive computer program that enabled people to express their policies, compare them, change them, and discover the effects of such changes on cognitive conflict. The emphasis is on teaching consistent new policies. It has been found that cognitive feedback helps to speed conflict reduction (Balke et al., 1973).

In sum, research in the IPC paradigm has demonstrated that agreement could be studied in the same way as achievement. Although the study of two cognitive systems has become popular, and despite the potential for theoretical and methodological advance of the concept of cognitive conflict, Dhami and Olsson’s (2008) review reveals that research on cognitive conflict using the lens model has declined sharply. It has been replaced by research on what is called ‘task conflict’ conducted by scholars interested in group conflict. Unfortunately, the latter has less theoretical precision and methodological rigor than Hammond’s IPC paradigm.

In 1975, after two decades of work building on Brunswik’s ideas and advancing them in the study of clinical judgment, multiple-cue probability learning, interpersonal learning and interpersonal conflict, Hammond and his colleagues (Hammond et al., 1975) synthesized the key elements of this work into a unified framework, which they called social judgment theory (SJT). SJT is not a theory providing testable hypotheses about the nature of human judgment, but a meta-theory that provides a framework to guide research in this endeavor.

The basic concepts of SJT are all foreshadowed by the two decades of work that preceded it, and so we shall not discuss them in detail here. Suffice it to say that in SJT equal status is accorded to both the human and environment, there are parallel concepts depicting each side of the lens, and the distinction between surface (proximal cues) and depth (the environmental criterion and judgment about the environmental criterion) is essential.

Social judgment theorists, as they have come to be known, study “life relevant” issues (Hammond et al., 1975, p. 276), and so much of SJT research is applied. There are four basic goals of SJT research: (a) to analyze judgment tasks and processes; (b) to analyze the structure of achievement and agreement; (c) to understand how humans learn to achieve and agree; and (d) to find methods for improving achievement and agreement (Brehmer & Joyce, 1988; Hammond et al., 1975). SJT research, thus, aims to describe behavior before prescribing changes to improve it. The model of the environment serves as a benchmark, indicating how judgment can be improved (Brehmer & Joyce, 1988; Hammond et al., 1964). Cognitive performance may be enhanced by cognitive feedback and cognitive (decision) aids (Hammond et al., 1975).

Several types of judgment situations are distinguished in SJT research. These are the double-systems design, single-system design, triple-systems design, the N-systems design and the hierarchical design (see also Hammond, 1972; Hammond et al., 1975). The first refers to Brunswik’s (1952) original lens model as shown in Figure 1, and involves an analysis of the interaction between an individual and a task. As mentioned earlier, this framework is used to study achievement and multiple-cue probability learning.

The other judgment situations represent modifications to the original lens model. As mentioned earlier, the single-system design refers simply to an individual’s judgment policy, with no reference to achievement in the task (see Figure 2). The triple-systems design involves one task and two individuals (see Figure 3). It is used to study interpersonal learning and interpersonal conflict. The N-systems design involves more than one individual and may or may not include an analysis of the task. Research on policy formation is conducted within this framework (e.g., Adelman, Stewart, & Hammond, 1975; Stewart & Gelberd, 1976). Finally, there are judgment situations in which the cues themselves may be judgments made at earlier stages of the judgment process either by the same or different judges. Hammond et al. (1975, p. 286) refer to such situations as “hierarchical judgment models”. Here, an outcome criterion is often unavailable and each stage is analyzed separately.13

In SJT research, judgment data are elicited over a series of trials and analyzed at the level of the individual (Hammond et al., 1975). Hammond et al. (1975, p. 278) state that “the judgment data are analyzed in terms of multiple regression statistics.” Thus, correlational statistics and models such as multiple linear regression are used to describe and explain cognitive performance. However, as Brehmer (1979, p. 199) pointed out:

A common misunderstanding is that SJT holds that the judgment process itself operates according to the principles of multiple regression….just because they use these methods for investigating the judgment process….Instead, the methods are used to test a series of hypotheses about the nature of the judgment process, hypotheses about the nature of cue weights, function forms, combination rules, and predictability.

SJT is also committed to representative design defined in terms of formal situational sampling (Brehmer, 1979; Cooksey, 1996; Hammond et al., 1975; Hammond & Wascoe, 1980). Here, all of the relevant cues, cue values, cue distributions, inter-cue correlations and ecological validities of cues should be representative of those that exist in the natural version of the task. Hammond et al. (1975) recognized that under representative conditions, the presence of inter-cue correlations may make it difficult to ascertain the relative independent effects of each cue upon judgments. As an alternative to representative design, they recommended multi-method analyses where techniques such as predicting each cue from the others and successive omission of cues may be used.14 However, Dhami et al. (2004) have revealed that researchers typically only study single-system cases and do not use any form of representative design. Consequently, little is known about judgmental achievement for many tasks, and the lack of representative stimulus sampling threatens the validity of the findings reported.
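The two multi-method checks mentioned here can be sketched as follows. For illustration, this sketch makes two assumptions. "Successive omission" is measured as the loss in R² when each cue is dropped from the judgment model. Cue redundancy is measured as the R² from predicting each cue from the others. The data are simulated.

```python
import numpy as np


def r_squared(X, y) -> float:
    """R-squared of an OLS regression of y on X plus an intercept."""
    X1 = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    resid = y - X1 @ coef
    return float(1.0 - resid @ resid / np.sum((y - y.mean()) ** 2))


def omission_and_redundancy(cues, judgments) -> dict:
    full = r_squared(cues, judgments)
    drop, redundancy = [], []
    for j in range(cues.shape[1]):
        others = np.delete(cues, j, axis=1)
        # loss of fit when cue j is omitted from the judgment model
        drop.append(full - r_squared(others, judgments))
        # how well cue j can be predicted from the remaining cues
        redundancy.append(r_squared(others, cues[:, j]))
    return {"full_R2": full, "R2_drop": drop, "cue_redundancy": redundancy}


rng = np.random.default_rng(2)
n = 200
x1 = rng.normal(size=n)
x2 = 0.9 * x1 + np.sqrt(1 - 0.81) * rng.normal(size=n)   # highly correlated with x1
x3 = rng.normal(size=n)                                  # independent cue
cues = np.column_stack([x1, x2, x3])
judgments = x1 + 0.5 * x3 + rng.normal(scale=0.1, size=n)  # judge ignores x2
res = omission_and_redundancy(cues, judgments)
```

In this simulation, the judge relies on x1 and ignores x2, which correlates 0.9 with x1. Omitting x1 therefore costs only a modest amount of fit, because x2 absorbs much of its role. This is the difficulty with inter-cue correlations that Hammond et al. (1975) noted.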

Hammond et al. (1975, p. 304) stated that “the unique contribution of SJT has been to bring the theory, quantitative procedures, results of research, and technological innovations (externalization of judgment policies by means of interactive computer graphics) to bear on social policy formation outside the laboratory.” In the four decades since its launch, SJT has inspired hundreds of applied and basic studies of human judgment (for collections of such studies see Brehmer & Joyce, 1988; Dhami et al., 2004; Karelaia & Hogarth, 2008), as well as some more recent approaches to the psychology of human judgment and decision making (e.g., Gigerenzer et al., 1999; Juslin et al., 2000). One applied area of special note has been medical decision making (e.g., González-Vallejo, Sorum, Stewart, Chessare & Mumpower, 1998; Poses, Cebul, Wigton, et al., 1992; Tape, Kripal & Wigton, 1992; Way, Allen, Mumpower et al., 1998; Wigton, 1988; 1996). As Wigton (1996) observed, SJT is particularly well suited to the study of medical judgments, because these characteristically involve decision making under uncertainty, with inevitable error and an abundance of fallible cues. SJT has been useful in establishing variation among decision makers’ judgments and in their weighting of clinical information.

Later in his career, still faithful to Brunswik’s ideas but going beyond them, Hammond developed cognitive continuum theory (CCT; Hammond, 1996a, 2000a, 2001a; Hammond et al., 1987). The key propositions of CCT include the premises that cognition moves on an intuitive-analytical continuum; that quasi-rationality represents a commonly used and important middle-ground on this continuum; that cognitive tasks induce different modes of cognition; and that the upper level of cognitive performance is dictated by the match between properties of the task and mode of cognition.

More specifically, CCT states that there are modes of cognition that lie in-between intuition and analysis (see also Dhami, Belton & Goodman-Delahunty, 2015; Dhami & Thomson, 2012). Intuition (often also referred to as System 1, experiential, heuristic, and associative thinking) is generally considered to be an unconscious, implicit, automatic, holistic, fast process, with great capacity, requiring little cognitive effort. By contrast, analysis (often also referred to as System 2, rational, and rule-based thinking) is generally characterized as a conscious, explicit, controlled, deliberative, slow process that has limited capacity and is cognitively demanding. The modes of cognition that lie in-between are quasirational.

As Hammond (2010, p. 331) points out, the term ‘quasi’ does not mean that quasirational modes of cognition are the result of “improper cognitive activity”. In addition, Hammond (1996, pp. 166–167, brackets added) takes pains to differentiate quasirationality from Herbert Simon’s (1957) concept of bounded rationality, which, he states, “means that cognitive activity has neither the time nor resources to explore […] completely the ‘problem space’ of the task. The problem space that is explored, however, is explored in a rational or analytical fashion.” For Hammond, quasirationality is distinct from rationality. It comprises different combinations of intuition and analysis, and so may sometimes lie closer to the intuitive end of the cognitive continuum and at other times closer to the analytic end.

Brunswik (1943, 1952) pointed to the adaptive nature of perception (and cognition).15 For Hammond (1996a, 2000b), modes of cognition are determined by properties of the task (and/or expertise with the task). Task properties include, for example, the amount of information, its degree of redundancy, format, and order of presentation, as well as the decision maker’s familiarity with the task, opportunity for feedback, and extent of time pressure. The cognitive mode induced will depend on the number, nature and degree of task properties present. A task comprising either intermediate levels of, or a combination of, those properties inducing pure intuition or pure analysis will induce quasirationality. Depending on task properties, quasirationality may imply a combination where there is greater use of intuition than analysis, or vice versa. Movement along the cognitive continuum is characterized as oscillatory or alternating, thus allowing different forms of compromise between intuition and analysis (i.e., different forms of quasirationality).

According to Hammond, cognitive tasks can be differentiated from one another with regard to their properties as well as the mode of cognition they induce (see also Dhami & Thomson, 2012). Studies support the idea that different task properties induce different modes of cognition (Dunwoody, Haarbauer, Mahan, Marino & Tang, 2000; Hamm, 1988; Hammond et al., 1987; see also Mahan, 1994). Success on a task inhibits movement along the cognitive continuum (or change in cognitive mode) while failure stimulates it. Hamm (1988) additionally demonstrated that cognitive mode can shift during a task.

Brunswik (1943, 1952, 1956) stressed the significance of the correspondence or fit between properties of the task and the mode of cognition. Hammond (1988) predicted that judgment performance is contingent on the degree of correspondence between task properties and mode of cognition. The key implication is that pure analysis may be neither necessary nor sufficient for ceiling-level performance. Evidence suggests that task characteristics are important in determining the upper bound of performance (e.g., Seifert & Hadida, 2013; Rusou, Zakay & Usher, 2013) and that achievement is greater when the cognitive mode matches that induced by the task (e.g., Dunwoody et al., 2000; Hammond et al., 1987).

Although there is a growing body of evidence on the nature and performance of intuitive versus analytic cognition (e.g., Dunwoody et al., 2000; Hammond et al., 1987; Mahan, 1994; Marewski & Mehlhorn, 2011), there is a distinct dearth of research on the operation and outcomes of quasirationality, even though, as some have argued, this is the most common mode of cognition in many consequential tasks (Dhami et al., 2015; Dhami & Thomson, 2012). In their efforts to identify the processes involved in intuitive versus analytic cognition, Glöckner and his colleagues have found some similarities and differences between these two modes of cognition (e.g., Glöckner & Betsch, 2008a; 2008b; 2012; Jekel, Glöckner, Fiedler & Bröder, 2012; Horstmann, Ahlgrimm, & Glöckner, 2009). Their findings suggest that intuition and analysis may operate in an integrative fashion and thus potentially shed light on different forms of quasirationality. For instance, quasirationality may allow individuals to use a lot of information quickly. Other work measuring the performance of different modes of cognition, for example by Blattberg and Hoch (1990), has demonstrated that a quasirational model which combined managerial intuition (expertise) and statistical analysis repeatedly outperformed purely intuitive and statistical models in five forecasting tasks (see also Ganzach, Kluger & Klayman, 2000).
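The advantage of combining the two kinds of forecast can be illustrated with a toy simulation. This is a hedged sketch, not Blattberg and Hoch’s procedure: the equal 50/50 weighting, the normally distributed errors, and the assumption that model and manager errors are independent are all choices made here for illustration, the data are simulated, and the function names are hypothetical.

```python
import random


def mse(forecasts, actuals):
    """Mean squared error of a list of forecasts against the actual outcomes."""
    return sum((f - a) ** 2 for f, a in zip(forecasts, actuals)) / len(actuals)


def combine(model, intuition, w_model=0.5):
    """Weighted average of a statistical-model forecast and an intuitive (expert) forecast."""
    return [w_model * m + (1 - w_model) * i for m, i in zip(model, intuition)]


# Hypothetical data: the statistical model and the manager are assumed to draw on
# partly different information, so their errors are simulated as independent.
random.seed(7)
actual = [random.gauss(100, 15) for _ in range(500)]
model = [a + random.gauss(0, 10) for a in actual]
intuition = [a + random.gauss(0, 10) for a in actual]

blend = combine(model, intuition)
for label, f in [("model", model), ("intuition", intuition), ("50/50 blend", blend)]:
    print(f"{label:>12}: MSE = {mse(f, actual):.1f}")
```

Averaging helps here mainly because the two error sources are uncorrelated; if the manager’s errors simply duplicated the model’s, the blend would offer little gain.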

Hammond (2007) returned to the themes of analysis and intuition and the cognitive continuum in his last book, Beyond Rationality: The Search for Wisdom in a Troubled Time, published when he was about 90. At the heart of his argument is the proposition that the key to wisdom lies in being able to match modes of cognition to properties of the task. In brief, for most judgment and decision making tasks, quasirationality is what wisdom requires. According to Hammond (2007, p. 237), “…the tactics that most of us use most of the time are neither fully intuitive nor fully analytical: they are a compromise that contains some of each; how much of each depends on the nature of the task and on the knowledge the person making the judgment brings to the task.”

Finally, it is notable that Hammond was as comfortable conducting basic research in the laboratory as he was solving applied problems, especially in the policy context. In fact, an emphasis on application to social policy formation was part of the original declaration of SJT (Hammond et al., 1975). A number of SJT-inspired policy applications were undertaken on topics ranging from citizen participation in community planning, through water resource planning, to air pollution management (e.g., Brady & Rappoport, 1973; Flack & Summers, 1971; Hammond, Mumpower & Smith, 1977; Mosier, Skitka, Heers & Burdick, 1998; Mumpower, Veirs & Hammond, 1979; Stewart & Gelberd, 1972). Perhaps the best known and most influential of these applications is Hammond and Adelman’s (1976) ‘Denver Bullet Study.’

The policy issue in the Denver Bullet Study concerned the type of bullet that should be used by the Denver (Colorado, USA) City Police. In 1974, the Denver Police Department (DPD) decided to change its handgun ammunition because it was argued that conventional round-nosed bullets provided insufficient ‘stopping effectiveness’ (i.e., the ability to incapacitate and thus to prevent the person shot from firing back at the police or others). The DPD chief recommended using a hollow-point bullet, claiming that such bullets flattened on impact, thus decreasing penetration, increasing stopping effectiveness, and decreasing ricochet potential. This claim was challenged by, for example, the American Civil Liberties Union and minority groups. Opponents argued that the new bullets were in fact outlawed ‘dum-dum’ bullets, which were more injurious than the round-nosed bullet and so should be barred from use. There were public hearings, debates and disputes, and appeals by both sides to ballistics experts for scientific information and support. Disputants focused on evaluating the merits of specific alternative bullets, confounding the physical effects of the bullets with their social policy implications. As Hammond and Adelman (1976) realized, the disputants confounded questions of value (what the bullet should accomplish) with questions of fact (the ballistic characteristics of specific bullets). Arguments favored one option or another but obscured the basis for a preference.

Hammond and Adelman (1976) stated that policy makers had inadvertently adopted the role of (unqualified) ballistics experts, and ballistics experts had inadvertently adopted the role of (poor) policy makers. Hammond and Adelman intervened by first discovering the important policy dimensions from the policy makers’ viewpoint and then eliciting ballistics experts’ ratings of the bullets on these dimensions. The relevant dimensions were stopping effectiveness, probability of serious injury, and probability of harm to bystanders. The experts’ ratings of the bullets on the last two dimensions were almost perfectly confounded. The probability of serious harm to bystanders is highly related to the penetration of the bullet, whereas the probability of the bullet effectively stopping someone from returning fire is highly related to the width of the entry wound. Giving equal weights to these dimensions, and combining these weights with the experts’ technical judgments, led Hammond and Adelman (1976, p. 395) to identify a bullet that “has greater stopping effectiveness and is less apt to cause injury (and is less apt to threaten bystanders) than the standard bullet then in use by the DPD.” The bullet they recommended was accepted by both the DPD and the Denver City Council and put into operation.
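The logic of keeping value judgments apart from factual judgments can be expressed as a weighted additive model: policy makers supply the weights, experts supply the ratings, and the evaluation is computed analytically. The sketch below assumes 0-100 expert ratings and a simple weighted sum; the bullet names and every rating are hypothetical placeholders, not figures from Hammond and Adelman (1976). Only the equal weighting of the three dimensions comes from the text.

```python
# Value judgments (policy makers): equal weights on the three policy dimensions.
WEIGHTS = {"stopping": 1 / 3, "injury": 1 / 3, "bystander": 1 / 3}

# Whether a higher expert rating is desirable on each dimension.
HIGHER_IS_BETTER = {"stopping": True, "injury": False, "bystander": False}

# Factual judgments (ballistics experts): HYPOTHETICAL 0-100 ratings, not data from the study.
RATINGS = {
    "bullet_A": {"stopping": 40, "injury": 50, "bystander": 70},
    "bullet_B": {"stopping": 80, "injury": 80, "bystander": 30},
    "bullet_C": {"stopping": 70, "injury": 45, "bystander": 35},
}


def desirability(dimension, rating):
    """Map an expert rating onto a 0-100 scale where higher is always better."""
    return rating if HIGHER_IS_BETTER[dimension] else 100 - rating


def evaluate(ratings, weights):
    """Evaluative judgment: weighted sum of desirabilities for each alternative."""
    return {
        name: sum(weights[d] * desirability(d, r) for d, r in dims.items())
        for name, dims in ratings.items()
    }


scores = evaluate(RATINGS, WEIGHTS)
best = max(scores, key=scores.get)
print(scores)
print("preferred:", best)
```

Because weights and ratings live in separate structures, a dispute over values can be explored by changing `WEIGHTS` alone. With these made-up ratings, for instance, putting all the weight on stopping effectiveness would make `bullet_B` the preferred option, while the factual ratings stay untouched.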

According to Adelman (1988, p. 443), the above study illustrates the significant contribution of SJT in identifying the importance of the “separation of facts and values” in resolving social policy disputes. Adelman identifies five key points to be derived from the Denver Bullet Study and similar applications. First, social policies comprise three types of judgments: (a) value judgments about what ought to be, (b) factual judgments about what is or will be, and (c) evaluative judgments that integrate value and factual judgments into a final policy decision. Second, policy makers should be responsible for value judgments and technical experts for factual judgments. Third, methods exist to build quantitative models for both value and factual judgments. Fourth, analytical methods can and should be used to combine value and factual judgments so that alternatives can be systematically evaluated. Fifth, cognitive feedback can be used to make the implications of value judgments and factual judgments explicit.

In 1996, Hammond published a book entitled Human Judgment and Social Policy: Irreducible Uncertainty, Inevitable Error, Unavoidable Injustice, which attempted to understand the policy formation process.16 He included Brunswikian and SJT research as well as other work, notably Ward Edwards’ (1954, 1961) contributions using Bayesian-themed behavioral decision theory. This 1996 book emphasized two key themes that Hammond had been considering for a number of years but was able to address more fully here. The first theme was the distinction between theories of truth that emphasized coherence competence and theories of truth that emphasized correspondence competence. The former is exemplified in Ward Edwards’ Bayesian approach (and later in Kahneman and Tversky’s ‘heuristics and biases’ approach, e.g., Kahneman, Slovic & Tversky, 1982), both of which emphasize normative rationality defined in terms of internal and logical consistency. The latter is exemplified by the Brunswikian and SJT approaches, in which empirical accuracy defines achievement. The issue, according to Hammond, was whether, in a policy context, it was more important to be rational (internally and logically consistent) or to be empirically accurate. Hammond’s treatment of the strengths and limitations of the two approaches is too nuanced and sophisticated to review fully here, but he concludes, with guarded optimism, that in the realm of policy we can strike a balance between coherence and correspondence. The key to achieving this balance lies in how we think about error, which was the second theme.

Hammond (1996a) emphasized the duality of error. He noted that, although this concept had been recognized long before, it was not until Neyman and Pearson (1933) that duality of error (Type I and Type II error) was formally introduced with a mathematical treatment. The concern with error was also an important part of the Brunswikian legacy. Brunswik (1956) demonstrated that the error distributions for intuitive and analytical processes were quite different. Intuitive processes led to distributions in which there were few precisely correct responses but also few large errors, whereas with analysis there were often many precisely correct responses but occasional large errors. According to Hammond, duality of error inevitably occurs whenever decisions must be made in the face of irreducible uncertainty, that is, uncertainty that cannot be reduced at the moment action is required. Thus, whenever policy decisions involve dichotomous choices, such as whether to accept or reject college applications or claims for welfare benefits, two kinds of mistakes may arise: false positives (Type I errors) and false negatives (Type II errors). Hammond (1996a) argued that any policy problem involving irreducible uncertainty has the potential for dual error, and consequently for unavoidable injustice, in which mistakes are made that favor one group over another.
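The trade-off between the two errors can be made concrete with a single decision threshold applied to a fallible indicator. The sketch below assumes, purely for illustration, that scores for cases that should be rejected and cases that should be accepted are normally distributed with overlapping means (0 and 1, unit variance); these distributions and all names are hypothetical.

```python
from statistics import NormalDist

# Hypothetical dichotomous policy decision (e.g., accept vs. reject an application).
# A fallible indicator score is assumed normal with mean 0 for cases that should be
# rejected and mean 1 for cases that should be accepted; the overlap stands in for
# irreducible uncertainty.
SHOULD_REJECT = NormalDist(mu=0.0, sigma=1.0)
SHOULD_ACCEPT = NormalDist(mu=1.0, sigma=1.0)


def error_rates(threshold):
    """Accept when score > threshold; return (false-positive rate, false-negative rate)."""
    false_positive = 1 - SHOULD_REJECT.cdf(threshold)  # Type I: accepting a case that should be rejected
    false_negative = SHOULD_ACCEPT.cdf(threshold)      # Type II: rejecting a case that should be accepted
    return false_positive, false_negative


thresholds = [-1.0, 0.0, 0.5, 1.0, 2.0]
rates = [error_rates(t) for t in thresholds]
for t, (fp, fn) in zip(thresholds, rates):
    print(f"threshold {t:+.1f}: false positives {fp:.3f}, false negatives {fn:.3f}")
```

Raising the threshold lowers the false-positive rate only by raising the false-negative rate, and no threshold drives both to zero while the distributions overlap. Where to set it is therefore a value judgment about which error, and which group, bears the cost.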

In this work, Hammond made a remarkable and influential advance to the Brunswikian and SJT approaches, pushing those ideas all the way from perception through thinking to policy formation. He identified two tools of particular value for analyzing policy making in the face of irreducible environmental uncertainty and duality of error. These were Signal Detection Theory (SDT; Tanner & Swets, 1954; Swets, 1992) and the Taylor-Russell (1939) paradigm. Others have extended these ideas in a number of substantive policy areas such as college admissions (Mumpower, Nath & Stewart, 2002), child welfare services (Mumpower, 2010; Mumpower & McClelland, 2014), international policy (Dunwoody & Hammond, 2006), and mammography (Stewart & Mumpower, 2004). In fact, Hammond’s 1996 book, published almost 50 years after his first academic paper, is now his most cited work.
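Both tools can be sketched in a few lines. This Python assumes the equal-variance Gaussian model of SDT, in which sensitivity is d' = z(H) − z(F) and the response criterion is c = −(z(H) + z(F))/2, and it assumes, as the classic Taylor-Russell tables do, that predictor and criterion scores are bivariate normal. The success ratio is obtained here by numerical integration rather than table lookup; function names and default parameters are illustrative assumptions.

```python
from statistics import NormalDist

STD = NormalDist()  # standard normal distribution


def sdt_measures(hit_rate, false_alarm_rate):
    """Equal-variance Gaussian SDT: return sensitivity d' and criterion c.

    Rates must lie strictly between 0 and 1 (inv_cdf rejects 0 and 1).
    """
    z_h = STD.inv_cdf(hit_rate)
    z_f = STD.inv_cdf(false_alarm_rate)
    return z_h - z_f, -(z_h + z_f) / 2


def taylor_russell(validity, base_rate, selection_ratio, step=0.001, span=10.0):
    """Success ratio P(success | selected), assuming predictor X and criterion Y are
    bivariate standard normal with correlation `validity`."""
    if not -1 < validity < 1:
        raise ValueError("validity must lie strictly between -1 and 1")
    x_cut = STD.inv_cdf(1 - selection_ratio)  # select cases with X above this cut-off
    y_cut = STD.inv_cdf(1 - base_rate)        # a "success" has Y above this cut-off
    cond_sd = (1 - validity ** 2) ** 0.5      # SD of Y given X
    joint = 0.0                               # accumulates P(X > x_cut and Y > y_cut)
    for i in range(round(span / step)):
        x = x_cut + (i + 0.5) * step          # midpoint rule over [x_cut, x_cut + span]
        p_success = 1 - STD.cdf((y_cut - validity * x) / cond_sd)
        joint += STD.pdf(x) * p_success * step
    return joint / selection_ratio


d_prime, criterion = sdt_measures(hit_rate=0.80, false_alarm_rate=0.30)
print(f"d' = {d_prime:.2f}, c = {criterion:.2f}")
print(f"success ratio = {taylor_russell(0.5, 0.5, 0.5):.3f}")
```

With validity .50, base rate .50 and selection ratio .50, `taylor_russell(0.5, 0.5, 0.5)` should return about 0.667, so selection raises the proportion of successes from 50% to roughly 67%; with zero validity, the success ratio falls back to the base rate.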